Deep learning论文翻译

- 格式:docx

- 大小:651.92 KB

- 文档页数:14

ai这么发达,还要不要学习英语作文全文共3篇示例,供读者参考篇1With the rapid development of artificial intelligence (AI) technology, many people have started to question the importance of learning English. Some argue that with AI's ability to translate languages and perform various tasks, there is no longer a need for individuals to learn English. However, despite the advancements in AI, there are still several reasons why learning English is essential.First and foremost, English is the most commonly used language in the world. It is the official language of 53 countries and is spoken by over 1.5 billion people worldwide. This means that knowing English can open up a world of opportunities for individuals, both in terms of career prospects and personal growth. Many multinational companies require employees to be proficient in English, as it allows for effective communication with colleagues and clients from different countries.Furthermore, English is the language of the internet and technology. Most of the information available online is in English,and a large majority of software programs and applications are also developed in English. Therefore, having a good grasp of the English language is essential for navigating the digital world and staying up-to-date with the latest technological advancements.Moreover, learning English can enhance cognitive abilities and improve overall brain function. Studies have shown that bilingual individuals tend to have better problem-solving skills, creativity, and multitasking abilities. By learning English, individuals can train their brains to think in different ways and improve their cognitive flexibility.In addition, English is the language of academia and research. Many of the world's top universities and research institutions conduct their studies and publish their findings in English. Therefore, if individuals want to pursue higher education or engage in academic discussions and debates, having a strong command of the English language is crucial.Another important reason to continue learning English is the cultural enrichment it provides. The English language is rich in literature, music, film, and art, all of which can offer valuable insights into different cultures and perspectives. By learning English, individuals can access a wealth of cultural resources and broaden their understanding of the world.In conclusion, while AI technology continues to advance and offer new capabilities, the importance of learning English remains undeniable. English is a global language that can open doors to a wide range of opportunities, both professionally and personally. By continuing to improve their English skills, individuals can stay competitive in today's globalized world and enrich their lives in numerous ways. So, despite the prevalence of AI, learning English is still a valuable and worthwhile endeavor.篇2In this era of rapid advancement in artificial intelligence (AI), there is a growing debate on whether learning English is still necessary. With the development of AI technology, some people believe that translation tools and artificial intelligence devices can eliminate the need for learning a second language like English. However, there are still compelling reasons to continue learning English despite the progress of AI.First and foremost, English remains the dominant language in the fields of business, technology, science, and academia. In order to succeed in these industries, it is essential to have a good command of English. While AI can assist in translating languages, it does not have the same level of understanding, nuance, and cultural context that human language speakers possess.Therefore, learning English can still provide a competitive advantage in the global marketplace.Moreover, learning a second language like English has cognitive benefits that cannot be replaced by AI. Studies have shown that bilingual individuals have better problem-solving skills, multitasking abilities, and memory retention. By learning English, individuals can improve their cognitive functioning and enhance their overall brain health.Additionally, English is not just a means of communication, but also a gateway to accessing a wealth of knowledge and information. The majority of academic research, literature, and online resources are available in English. By mastering English, individuals can broaden their horizons, gain new perspectives, and stay informed about the latest developments in their fields of interest.Furthermore, learning English can also enrich one's personal and social life. It allows individuals to communicate with people from different countries and cultures, fostering cross-cultural understanding and building relationships with people from diverse backgrounds. In an increasingly interconnected world, proficiency in English can facilitate travel, work, and social interactions across borders.In conclusion, while AI technology continues to advance and offer new tools for language translation and communication, learning English remains a valuable and essential skill. The benefits of mastering English go beyond mere communication and can enhance one's professional, cognitive, and personal development. As we navigate the complexities of a globalized world, the ability to speak and understand English will continue to be a valuable asset that AI cannot fully replicate. Therefore, investing time and effort in learning English is still worthwhile and important in the age of AI.篇3With the rapid development of artificial intelligence (AI) technology, some people may wonder if there is still a need to learn English. In my opinion, despite the advancements in AI, learning English is still crucial for several reasons.Firstly, English remains the dominant language in the fields of business, science, technology, and international communication. While AI can assist with translation and language learning, it is still necessary to have a solid foundation in English to effectively communicate and collaborate with people from around the world. Understanding English opens upa wealth of opportunities for professional growth and personal development.Secondly, learning English enhances cognitive abilities and provides numerous mental benefits. Research has shown that bilingual individuals have better problem-solving skills, enhanced memory, and improved multitasking abilities. By learning English, individuals can sharpen their minds and improve their cognitive functions, leading to overall mental well-being.Furthermore, English is the language of the internet and digital communication. With the rise of social media, online forums, and digital platforms, English has become the primary language of online interaction. By mastering English, individuals can access a vast amount of information, connect with people globally, and stay updated on the latest trends and developments in various fields.Moreover, English proficiency is essential for academic and career advancement. Many universities worldwide offer programs and courses taught in English, and proficiency in the language is often a requirement for admission. Additionally, many multinational companies prefer candidates with strongEnglish skills, as it enables them to work with colleagues, clients, and partners from different countries.In conclusion, while AI technology continues to advance and offer innovative solutions for language learning and communication, the importance of learning English cannot be underestimated. English proficiency opens doors to countless opportunities, enhances cognitive abilities, and enables individuals to thrive in an increasingly interconnected and globalized world. Therefore, it is essential to continue learning and improving English skills, even in the age of AI.。

论文翻译成英文The Translation of the PaperWith the development of the world and rapid advancement of science and technology, the importance of communication among different countries has become increasingly prominent. This has led to a surge in demand for translation services, making translation an essential and indispensable profession.Translation is the act of conveying the meaning of a text from one language to another. It involves not only the transfer of the words, but also the ability to accurately capture the cultural nuances and context of the original text. A skilled translator must have a deep understanding of both the source and target languages, as well as a broad knowledge of various subject areas.The translation process can be divided into several stages. First, the translator needs to carefully read and comprehend the source text to fully grasp its meaning. Then, they must accurately transfer the message, while considering the characteristics and conventions of the target language. Next, the translator needs to revise and edit the translation to ensure accuracy and clarity. Finally, the completed translation should undergo rigorous proofreading to eliminate any errors or omissions.Translation plays a crucial role in numerous fields. In the business world, accurate translation of documents such as contracts, proposals, and marketing materials is essential for successful international transactions and collaborations. In the academic field, translation allows for the exchange and dissemination ofknowledge among researchers from different countries, enabling cross-cultural understanding and cooperation. In the legal field, translation of legal documents ensures that individuals from different linguistic backgrounds have equal access to justice. In the entertainment industry, translation allows for the enjoyment of foreign films, TV shows, and literature.However, translation is not as simple as replacing words from one language with their equivalents in another. Translators often face linguistic and cultural challenges that require them to make difficult decisions. They need to find equivalent expressions, idiomatic phrases, and appropriate terminology that reflect the intended meaning and cultural connotations of the original text. Additionally, the translator needs to be aware of the target audience and adapt the translation accordingly, ensuring that it is easily understood and culturally appropriate.Advancements in technology have greatly impacted the translation profession. Computer-assisted translation tools, such as translation memory software, have made the translation process more efficient and consistent. Machine translation, although still in its early stages, has shown potential for aiding human translators by providing initial drafts that can be refined. However, human translators are still irreplaceable due to their ability to understand the subtleties of language and capture the essence of a text.In conclusion, translation is a complex and multifaceted profession that plays a crucial role in facilitating communication and understanding among different cultures. It requires not only linguistic proficiency, but also cultural awareness and subjectknowledge. As the world continues to grow more interconnected, the demand for skilled translators will only continue to rise, making translation an essential skill in an increasingly globalized world.。

卷积神经网络机器学习相关外文翻译中英文2020英文Prediction of composite microstructure stress-strain curves usingconvolutional neural networksCharles Yang,Youngsoo Kim,Seunghwa Ryu,Grace GuAbstractStress-strain curves are an important representation of a material's mechanical properties, from which important properties such as elastic modulus, strength, and toughness, are defined. However, generating stress-strain curves from numerical methods such as finite element method (FEM) is computationally intensive, especially when considering the entire failure path for a material. As a result, it is difficult to perform high throughput computational design of materials with large design spaces, especially when considering mechanical responses beyond the elastic limit. In this work, a combination of principal component analysis (PCA) and convolutional neural networks (CNN) are used to predict the entire stress-strain behavior of binary composites evaluated over the entire failure path, motivated by the significantly faster inference speed of empirical models. We show that PCA transforms the stress-strain curves into an effective latent space by visualizing the eigenbasis of PCA. Despite having a dataset of only 10-27% of possible microstructure configurations, the mean absolute error of the prediction is <10% of therange of values in the dataset, when measuring model performance based on derived material descriptors, such as modulus, strength, and toughness. Our study demonstrates the potential to use machine learning to accelerate material design, characterization, and optimization.Keywords:Machine learning,Convolutional neural networks,Mechanical properties,Microstructure,Computational mechanics IntroductionUnderstanding the relationship between structure and property for materials is a seminal problem in material science, with significant applications for designing next-generation materials. A primary motivating example is designing composite microstructures for load-bearing applications, as composites offer advantageously high specific strength and specific toughness. Recent advancements in additive manufacturing have facilitated the fabrication of complex composite structures, and as a result, a variety of complex designs have been fabricated and tested via 3D-printing methods. While more advanced manufacturing techniques are opening up unprecedented opportunities for advanced materials and novel functionalities, identifying microstructures with desirable properties is a difficult optimization problem.One method of identifying optimal composite designs is by constructing analytical theories. For conventional particulate/fiber-reinforced composites, a variety of homogenizationtheories have been developed to predict the mechanical properties of composites as a function of volume fraction, aspect ratio, and orientation distribution of reinforcements. Because many natural composites, synthesized via self-assembly processes, have relatively periodic and regular structures, their mechanical properties can be predicted if the load transfer mechanism of a representative unit cell and the role of the self-similar hierarchical structure are understood. However, the applicability of analytical theories is limited in quantitatively predicting composite properties beyond the elastic limit in the presence of defects, because such theories rely on the concept of representative volume element (RVE), a statistical representation of material properties, whereas the strength and failure is determined by the weakest defect in the entire sample domain. Numerical modeling based on finite element methods (FEM) can complement analytical methods for predicting inelastic properties such as strength and toughness modulus (referred to as toughness, hereafter) which can only be obtained from full stress-strain curves.However, numerical schemes capable of modeling the initiation and propagation of the curvilinear cracks, such as the crack phase field model, are computationally expensive and time-consuming because a very fine mesh is required to accommodate highly concentrated stress field near crack tip and the rapid variation of damage parameter near diffusive cracksurface. Meanwhile, analytical models require significant human effort and domain expertise and fail to generalize to similar domain problems.In order to identify high-performing composites in the midst of large design spaces within realistic time-frames, we need models that can rapidly describe the mechanical properties of complex systems and be generalized easily to analogous systems. Machine learning offers the benefit of extremely fast inference times and requires only training data to learn relationships between inputs and outputs e.g., composite microstructures and their mechanical properties. Machine learning has already been applied to speed up the optimization of several different physical systems, including graphene kirigami cuts, fine-tuning spin qubit parameters, and probe microscopy tuning. Such models do not require significant human intervention or knowledge, learn relationships efficiently relative to the input design space, and can be generalized to different systems.In this paper, we utilize a combination of principal component analysis (PCA) and convolutional neural networks (CNN) to predict the entire stress-strain c urve of composite failures beyond the elastic limit. Stress-strain curves are chosen as the model's target because t hey are difficult to predict given their high dimensionality. In addition, stress-strain curves are used to derive important material descriptors such as modulus, strength, and toughness. In this sense, predicting stress-straincurves is a more general description of composites properties than any combination of scaler material descriptors. A dataset of 100,000 different composite microstructures and their corresponding stress-strain curves are used to train and evaluate model performance. Due to the high dimensionality of the stress-strain dataset, several dimensionality reduction methods are used, including PCA, featuring a blend of domain understanding and traditional machine learning, to simplify the problem without loss of generality for the model.We will first describe our modeling methodology and the parameters of our finite-element method (FEM) used to generate data. Visualizations of the learned PCA latent space are then presented, a long with model performance results.CNN implementation and trainingA convolutional neural network was trained to predict this lower dimensional representation of the stress vector. The input to the CNN was a binary matrix representing the composite design, with 0's corresponding to soft blocks and 1's corresponding to stiff blocks. PCA was implemented with the open-source Python package scikit-learn, using the default hyperparameters. CNN was implemented using Keras with a TensorFlow backend. The batch size for all experiments was set to 16 and the number of epochs to 30; the Adam optimizer was used to update the CNN weights during backpropagation.A train/test split ratio of 95:5 is used –we justify using a smaller ratio than the standard 80:20 because of a relatively large dataset. With a ratio of 95:5 and a dataset with 100,000 instances, the test set size still has enough data points, roughly several thousands, for its results to generalize. Each column of the target PCA-representation was normalized to have a mean of 0 and a standard deviation of 1 to prevent instable training.Finite element method data generationFEM was used to generate training data for the CNN model. Although initially obtained training data is compute-intensive, it takes much less time to train the CNN model and even less time to make high-throughput inferences over thousands of new, randomly generated composites. The crack phase field solver was based on the hybrid formulation for the quasi-static fracture of elastic solids and implementedin the commercial FEM software ABAQUS with a user-element subroutine (UEL).Visualizing PCAIn order to better understand the role PCA plays in effectively capturing the information contained in stress-strain curves, the principal component representation of stress-strain curves is plotted in 3 dimensions. Specifically, we take the first three principal components, which have a cumulative explained variance ~85%, and plot stress-strain curves in that basis and provide several different angles from which toview the 3D plot. Each point represents a stress-strain curve in the PCA latent space and is colored based on the associated modulus value. it seems that the PCA is able to spread out the curves in the latent space based on modulus values, which suggests that this is a useful latent space for CNN to make predictions in.CNN model design and performanceOur CNN was a fully convolutional neural network i.e. the only dense layer was the output layer. All convolution layers used 16 filters with a stride of 1, with a LeakyReLU activation followed by BatchNormalization. The first 3 Conv blocks did not have 2D MaxPooling, followed by 9 conv blocks which did have a 2D MaxPooling layer, placed after the BatchNormalization layer. A GlobalAveragePooling was used to reduce the dimensionality of the output tensor from the sequential convolution blocks and the final output layer was a Dense layer with 15 nodes, where each node corresponded to a principal component. In total, our model had 26,319 trainable weights.Our architecture was motivated by the recent development and convergence onto fully-convolutional architectures for traditional computer vision applications, where convolutions are empirically observed to be more efficient and stable for learning as opposed to dense layers. In addition, in our previous work, we had shown that CNN's werea capable architecture for learning to predict mechanical properties of 2Dcomposites [30]. The convolution operation is an intuitively good fit forpredicting crack propagation because it is a local operation, allowing it toimplicitly featurize and learn the local spatial effects of crack propagation.After applying PCA transformation to reduce the dimensionality ofthe target variable, CNN is used to predict the PCA representation of thestress-strain curve of a given binary composite design. After training theCNN on a training set, its ability to generalize to composite designs it hasnot seen is evaluated by comparing its predictions on an unseen test set.However, a natural question that emerges i s how to evaluate a model's performance at predicting stress-strain curves in a real-world engineeringcontext. While simple scaler metrics such as mean squared error (MSE)and mean absolute error (MAE) generalize easily to vector targets, it isnot clear how to interpret these aggregate summaries of performance. It isdifficult to use such metrics to ask questions such as “Is this modeand “On average, how poorly will aenough to use in the real world” given prediction be incorrect relative to some given specification”. Although being able to predict stress-strain curves is an importantapplication of FEM and a highly desirable property for any machinelearning model to learn, it does not easily lend itself to interpretation. Specifically, there is no simple quantitative way to define whether two-world units.stress-s train curves are “close” or “similar” with real Given that stress-strain curves are oftentimes intermediary representations of a composite property that are used to derive more meaningful descriptors such as modulus, strength, and toughness, we decided to evaluate the model in an analogous fashion. The CNN prediction in the PCA latent space representation is transformed back to a stress-strain curve using PCA, and used to derive the predicted modulus, strength, and toughness of the composite. The predicted material descriptors are then compared with the actual material descriptors. In this way, MSE and MAE now have clearly interpretable units and meanings. The average performance of the model with respect to the error between the actual and predicted material descriptor values derived from stress-strain curves are presented in Table. The MAE for material descriptors provides an easily interpretable metric of model performance and can easily be used in any design specification to provide confidence estimates of a model prediction. When comparing the mean absolute error (MAE) to the range of values taken on by the distribution of material descriptors, we can see that the MAE is relatively small compared to the range. The MAE compared to the range is <10% for all material descriptors. Relatively tight confidence intervals on the error indicate that this model architecture is stable, the model performance is not heavily dependent on initialization, and that our results are robust to differenttrain-test splits of the data.Future workFuture work includes combining empirical models with optimization algorithms, such as gradient-based methods, to identify composite designs that yield complementary mechanical properties. The ability of a trained empirical model to make high-throughput predictions over designs it has never seen before allows for large parameter space optimization that would be computationally infeasible for FEM. In addition, we plan to explore different visualizations of empirical models-box” of such models. Applying machine in an effort to “open up the blacklearning to finite-element methods is a rapidly growing field with the potential to discover novel next-generation materials tailored for a variety of applications. We also note that the proposed method can be readily applied to predict other physical properties represented in a similar vectorized format, such as electron/phonon density of states, and sound/light absorption spectrum.ConclusionIn conclusion, we applied PCA and CNN to rapidly and accurately predict the stress-strain curves of composites beyond the elastic limit. In doing so, several novel methodological approaches were developed, including using the derived material descriptors from the stress-strain curves as interpretable metrics for model performance and dimensionalityreduction techniques to stress-strain curves. This method has the potential to enable composite design with respect to mechanical response beyond the elastic limit, which was previously computationally infeasible, and can generalize easily to related problems outside of microstructural design for enhancing mechanical properties.中文基于卷积神经网络的复合材料微结构应力-应变曲线预测查尔斯,吉姆,瑞恩,格瑞斯摘要应力-应变曲线是材料机械性能的重要代表,从中可以定义重要的性能,例如弹性模量,强度和韧性。

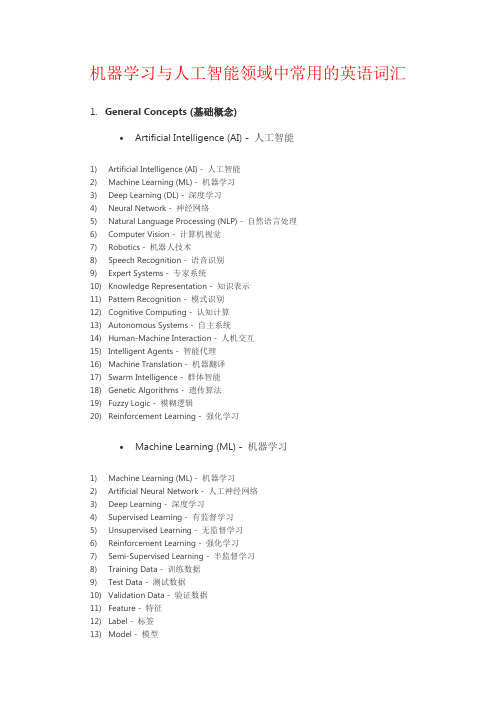

机器学习与人工智能领域中常用的英语词汇1.General Concepts (基础概念)•Artificial Intelligence (AI) - 人工智能1)Artificial Intelligence (AI) - 人工智能2)Machine Learning (ML) - 机器学习3)Deep Learning (DL) - 深度学习4)Neural Network - 神经网络5)Natural Language Processing (NLP) - 自然语言处理6)Computer Vision - 计算机视觉7)Robotics - 机器人技术8)Speech Recognition - 语音识别9)Expert Systems - 专家系统10)Knowledge Representation - 知识表示11)Pattern Recognition - 模式识别12)Cognitive Computing - 认知计算13)Autonomous Systems - 自主系统14)Human-Machine Interaction - 人机交互15)Intelligent Agents - 智能代理16)Machine Translation - 机器翻译17)Swarm Intelligence - 群体智能18)Genetic Algorithms - 遗传算法19)Fuzzy Logic - 模糊逻辑20)Reinforcement Learning - 强化学习•Machine Learning (ML) - 机器学习1)Machine Learning (ML) - 机器学习2)Artificial Neural Network - 人工神经网络3)Deep Learning - 深度学习4)Supervised Learning - 有监督学习5)Unsupervised Learning - 无监督学习6)Reinforcement Learning - 强化学习7)Semi-Supervised Learning - 半监督学习8)Training Data - 训练数据9)Test Data - 测试数据10)Validation Data - 验证数据11)Feature - 特征12)Label - 标签13)Model - 模型14)Algorithm - 算法15)Regression - 回归16)Classification - 分类17)Clustering - 聚类18)Dimensionality Reduction - 降维19)Overfitting - 过拟合20)Underfitting - 欠拟合•Deep Learning (DL) - 深度学习1)Deep Learning - 深度学习2)Neural Network - 神经网络3)Artificial Neural Network (ANN) - 人工神经网络4)Convolutional Neural Network (CNN) - 卷积神经网络5)Recurrent Neural Network (RNN) - 循环神经网络6)Long Short-Term Memory (LSTM) - 长短期记忆网络7)Gated Recurrent Unit (GRU) - 门控循环单元8)Autoencoder - 自编码器9)Generative Adversarial Network (GAN) - 生成对抗网络10)Transfer Learning - 迁移学习11)Pre-trained Model - 预训练模型12)Fine-tuning - 微调13)Feature Extraction - 特征提取14)Activation Function - 激活函数15)Loss Function - 损失函数16)Gradient Descent - 梯度下降17)Backpropagation - 反向传播18)Epoch - 训练周期19)Batch Size - 批量大小20)Dropout - 丢弃法•Neural Network - 神经网络1)Neural Network - 神经网络2)Artificial Neural Network (ANN) - 人工神经网络3)Deep Neural Network (DNN) - 深度神经网络4)Convolutional Neural Network (CNN) - 卷积神经网络5)Recurrent Neural Network (RNN) - 循环神经网络6)Long Short-Term Memory (LSTM) - 长短期记忆网络7)Gated Recurrent Unit (GRU) - 门控循环单元8)Feedforward Neural Network - 前馈神经网络9)Multi-layer Perceptron (MLP) - 多层感知器10)Radial Basis Function Network (RBFN) - 径向基函数网络11)Hopfield Network - 霍普菲尔德网络12)Boltzmann Machine - 玻尔兹曼机13)Autoencoder - 自编码器14)Spiking Neural Network (SNN) - 脉冲神经网络15)Self-organizing Map (SOM) - 自组织映射16)Restricted Boltzmann Machine (RBM) - 受限玻尔兹曼机17)Hebbian Learning - 海比安学习18)Competitive Learning - 竞争学习19)Neuroevolutionary - 神经进化20)Neuron - 神经元•Algorithm - 算法1)Algorithm - 算法2)Supervised Learning Algorithm - 有监督学习算法3)Unsupervised Learning Algorithm - 无监督学习算法4)Reinforcement Learning Algorithm - 强化学习算法5)Classification Algorithm - 分类算法6)Regression Algorithm - 回归算法7)Clustering Algorithm - 聚类算法8)Dimensionality Reduction Algorithm - 降维算法9)Decision Tree Algorithm - 决策树算法10)Random Forest Algorithm - 随机森林算法11)Support Vector Machine (SVM) Algorithm - 支持向量机算法12)K-Nearest Neighbors (KNN) Algorithm - K近邻算法13)Naive Bayes Algorithm - 朴素贝叶斯算法14)Gradient Descent Algorithm - 梯度下降算法15)Genetic Algorithm - 遗传算法16)Neural Network Algorithm - 神经网络算法17)Deep Learning Algorithm - 深度学习算法18)Ensemble Learning Algorithm - 集成学习算法19)Reinforcement Learning Algorithm - 强化学习算法20)Metaheuristic Algorithm - 元启发式算法•Model - 模型1)Model - 模型2)Machine Learning Model - 机器学习模型3)Artificial Intelligence Model - 人工智能模型4)Predictive Model - 预测模型5)Classification Model - 分类模型6)Regression Model - 回归模型7)Generative Model - 生成模型8)Discriminative Model - 判别模型9)Probabilistic Model - 概率模型10)Statistical Model - 统计模型11)Neural Network Model - 神经网络模型12)Deep Learning Model - 深度学习模型13)Ensemble Model - 集成模型14)Reinforcement Learning Model - 强化学习模型15)Support Vector Machine (SVM) Model - 支持向量机模型16)Decision Tree Model - 决策树模型17)Random Forest Model - 随机森林模型18)Naive Bayes Model - 朴素贝叶斯模型19)Autoencoder Model - 自编码器模型20)Convolutional Neural Network (CNN) Model - 卷积神经网络模型•Dataset - 数据集1)Dataset - 数据集2)Training Dataset - 训练数据集3)Test Dataset - 测试数据集4)Validation Dataset - 验证数据集5)Balanced Dataset - 平衡数据集6)Imbalanced Dataset - 不平衡数据集7)Synthetic Dataset - 合成数据集8)Benchmark Dataset - 基准数据集9)Open Dataset - 开放数据集10)Labeled Dataset - 标记数据集11)Unlabeled Dataset - 未标记数据集12)Semi-Supervised Dataset - 半监督数据集13)Multiclass Dataset - 多分类数据集14)Feature Set - 特征集15)Data Augmentation - 数据增强16)Data Preprocessing - 数据预处理17)Missing Data - 缺失数据18)Outlier Detection - 异常值检测19)Data Imputation - 数据插补20)Metadata - 元数据•Training - 训练1)Training - 训练2)Training Data - 训练数据3)Training Phase - 训练阶段4)Training Set - 训练集5)Training Examples - 训练样本6)Training Instance - 训练实例7)Training Algorithm - 训练算法8)Training Model - 训练模型9)Training Process - 训练过程10)Training Loss - 训练损失11)Training Epoch - 训练周期12)Training Batch - 训练批次13)Online Training - 在线训练14)Offline Training - 离线训练15)Continuous Training - 连续训练16)Transfer Learning - 迁移学习17)Fine-Tuning - 微调18)Curriculum Learning - 课程学习19)Self-Supervised Learning - 自监督学习20)Active Learning - 主动学习•Testing - 测试1)Testing - 测试2)Test Data - 测试数据3)Test Set - 测试集4)Test Examples - 测试样本5)Test Instance - 测试实例6)Test Phase - 测试阶段7)Test Accuracy - 测试准确率8)Test Loss - 测试损失9)Test Error - 测试错误10)Test Metrics - 测试指标11)Test Suite - 测试套件12)Test Case - 测试用例13)Test Coverage - 测试覆盖率14)Cross-Validation - 交叉验证15)Holdout Validation - 留出验证16)K-Fold Cross-Validation - K折交叉验证17)Stratified Cross-Validation - 分层交叉验证18)Test Driven Development (TDD) - 测试驱动开发19)A/B Testing - A/B 测试20)Model Evaluation - 模型评估•Validation - 验证1)Validation - 验证2)Validation Data - 验证数据3)Validation Set - 验证集4)Validation Examples - 验证样本5)Validation Instance - 验证实例6)Validation Phase - 验证阶段7)Validation Accuracy - 验证准确率8)Validation Loss - 验证损失9)Validation Error - 验证错误10)Validation Metrics - 验证指标11)Cross-Validation - 交叉验证12)Holdout Validation - 留出验证13)K-Fold Cross-Validation - K折交叉验证14)Stratified Cross-Validation - 分层交叉验证15)Leave-One-Out Cross-Validation - 留一法交叉验证16)Validation Curve - 验证曲线17)Hyperparameter Validation - 超参数验证18)Model Validation - 模型验证19)Early Stopping - 提前停止20)Validation Strategy - 验证策略•Supervised Learning - 有监督学习1)Supervised Learning - 有监督学习2)Label - 标签3)Feature - 特征4)Target - 目标5)Training Labels - 训练标签6)Training Features - 训练特征7)Training Targets - 训练目标8)Training Examples - 训练样本9)Training Instance - 训练实例10)Regression - 回归11)Classification - 分类12)Predictor - 预测器13)Regression Model - 回归模型14)Classifier - 分类器15)Decision Tree - 决策树16)Support Vector Machine (SVM) - 支持向量机17)Neural Network - 神经网络18)Feature Engineering - 特征工程19)Model Evaluation - 模型评估20)Overfitting - 过拟合21)Underfitting - 欠拟合22)Bias-Variance Tradeoff - 偏差-方差权衡•Unsupervised Learning - 无监督学习1)Unsupervised Learning - 无监督学习2)Clustering - 聚类3)Dimensionality Reduction - 降维4)Anomaly Detection - 异常检测5)Association Rule Learning - 关联规则学习6)Feature Extraction - 特征提取7)Feature Selection - 特征选择8)K-Means - K均值9)Hierarchical Clustering - 层次聚类10)Density-Based Clustering - 基于密度的聚类11)Principal Component Analysis (PCA) - 主成分分析12)Independent Component Analysis (ICA) - 独立成分分析13)T-distributed Stochastic Neighbor Embedding (t-SNE) - t分布随机邻居嵌入14)Gaussian Mixture Model (GMM) - 高斯混合模型15)Self-Organizing Maps (SOM) - 自组织映射16)Autoencoder - 自动编码器17)Latent Variable - 潜变量18)Data Preprocessing - 数据预处理19)Outlier Detection - 异常值检测20)Clustering Algorithm - 聚类算法•Reinforcement Learning - 强化学习1)Reinforcement Learning - 强化学习2)Agent - 代理3)Environment - 环境4)State - 状态5)Action - 动作6)Reward - 奖励7)Policy - 策略8)Value Function - 值函数9)Q-Learning - Q学习10)Deep Q-Network (DQN) - 深度Q网络11)Policy Gradient - 策略梯度12)Actor-Critic - 演员-评论家13)Exploration - 探索14)Exploitation - 开发15)Temporal Difference (TD) - 时间差分16)Markov Decision Process (MDP) - 马尔可夫决策过程17)State-Action-Reward-State-Action (SARSA) - 状态-动作-奖励-状态-动作18)Policy Iteration - 策略迭代19)Value Iteration - 值迭代20)Monte Carlo Methods - 蒙特卡洛方法•Semi-Supervised Learning - 半监督学习1)Semi-Supervised Learning - 半监督学习2)Labeled Data - 有标签数据3)Unlabeled Data - 无标签数据4)Label Propagation - 标签传播5)Self-Training - 自训练6)Co-Training - 协同训练7)Transudative Learning - 传导学习8)Inductive Learning - 归纳学习9)Manifold Regularization - 流形正则化10)Graph-based Methods - 基于图的方法11)Cluster Assumption - 聚类假设12)Low-Density Separation - 低密度分离13)Semi-Supervised Support Vector Machines (S3VM) - 半监督支持向量机14)Expectation-Maximization (EM) - 期望最大化15)Co-EM - 协同期望最大化16)Entropy-Regularized EM - 熵正则化EM17)Mean Teacher - 平均教师18)Virtual Adversarial Training - 虚拟对抗训练19)Tri-training - 三重训练20)Mix Match - 混合匹配•Feature - 特征1)Feature - 特征2)Feature Engineering - 特征工程3)Feature Extraction - 特征提取4)Feature Selection - 特征选择5)Input Features - 输入特征6)Output Features - 输出特征7)Feature Vector - 特征向量8)Feature Space - 特征空间9)Feature Representation - 特征表示10)Feature Transformation - 特征转换11)Feature Importance - 特征重要性12)Feature Scaling - 特征缩放13)Feature Normalization - 特征归一化14)Feature Encoding - 特征编码15)Feature Fusion - 特征融合16)Feature Dimensionality Reduction - 特征维度减少17)Continuous Feature - 连续特征18)Categorical Feature - 分类特征19)Nominal Feature - 名义特征20)Ordinal Feature - 有序特征•Label - 标签1)Label - 标签2)Labeling - 标注3)Ground Truth - 地面真值4)Class Label - 类别标签5)Target Variable - 目标变量6)Labeling Scheme - 标注方案7)Multi-class Labeling - 多类别标注8)Binary Labeling - 二分类标注9)Label Noise - 标签噪声10)Labeling Error - 标注错误11)Label Propagation - 标签传播12)Unlabeled Data - 无标签数据13)Labeled Data - 有标签数据14)Semi-supervised Learning - 半监督学习15)Active Learning - 主动学习16)Weakly Supervised Learning - 弱监督学习17)Noisy Label Learning - 噪声标签学习18)Self-training - 自训练19)Crowdsourcing Labeling - 众包标注20)Label Smoothing - 标签平滑化•Prediction - 预测1)Prediction - 预测2)Forecasting - 预测3)Regression - 回归4)Classification - 分类5)Time Series Prediction - 时间序列预测6)Forecast Accuracy - 预测准确性7)Predictive Modeling - 预测建模8)Predictive Analytics - 预测分析9)Forecasting Method - 预测方法10)Predictive Performance - 预测性能11)Predictive Power - 预测能力12)Prediction Error - 预测误差13)Prediction Interval - 预测区间14)Prediction Model - 预测模型15)Predictive Uncertainty - 预测不确定性16)Forecast Horizon - 预测时间跨度17)Predictive Maintenance - 预测性维护18)Predictive Policing - 预测式警务19)Predictive Healthcare - 预测性医疗20)Predictive Maintenance - 预测性维护•Classification - 分类1)Classification - 分类2)Classifier - 分类器3)Class - 类别4)Classify - 对数据进行分类5)Class Label - 类别标签6)Binary Classification - 二元分类7)Multiclass Classification - 多类分类8)Class Probability - 类别概率9)Decision Boundary - 决策边界10)Decision Tree - 决策树11)Support Vector Machine (SVM) - 支持向量机12)K-Nearest Neighbors (KNN) - K最近邻算法13)Naive Bayes - 朴素贝叶斯14)Logistic Regression - 逻辑回归15)Random Forest - 随机森林16)Neural Network - 神经网络17)SoftMax Function - SoftMax函数18)One-vs-All (One-vs-Rest) - 一对多(一对剩余)19)Ensemble Learning - 集成学习20)Confusion Matrix - 混淆矩阵•Regression - 回归1)Regression Analysis - 回归分析2)Linear Regression - 线性回归3)Multiple Regression - 多元回归4)Polynomial Regression - 多项式回归5)Logistic Regression - 逻辑回归6)Ridge Regression - 岭回归7)Lasso Regression - Lasso回归8)Elastic Net Regression - 弹性网络回归9)Regression Coefficients - 回归系数10)Residuals - 残差11)Ordinary Least Squares (OLS) - 普通最小二乘法12)Ridge Regression Coefficient - 岭回归系数13)Lasso Regression Coefficient - Lasso回归系数14)Elastic Net Regression Coefficient - 弹性网络回归系数15)Regression Line - 回归线16)Prediction Error - 预测误差17)Regression Model - 回归模型18)Nonlinear Regression - 非线性回归19)Generalized Linear Models (GLM) - 广义线性模型20)Coefficient of Determination (R-squared) - 决定系数21)F-test - F检验22)Homoscedasticity - 同方差性23)Heteroscedasticity - 异方差性24)Autocorrelation - 自相关25)Multicollinearity - 多重共线性26)Outliers - 异常值27)Cross-validation - 交叉验证28)Feature Selection - 特征选择29)Feature Engineering - 特征工程30)Regularization - 正则化2.Neural Networks and Deep Learning (神经网络与深度学习)•Convolutional Neural Network (CNN) - 卷积神经网络1)Convolutional Neural Network (CNN) - 卷积神经网络2)Convolution Layer - 卷积层3)Feature Map - 特征图4)Convolution Operation - 卷积操作5)Stride - 步幅6)Padding - 填充7)Pooling Layer - 池化层8)Max Pooling - 最大池化9)Average Pooling - 平均池化10)Fully Connected Layer - 全连接层11)Activation Function - 激活函数12)Rectified Linear Unit (ReLU) - 线性修正单元13)Dropout - 随机失活14)Batch Normalization - 批量归一化15)Transfer Learning - 迁移学习16)Fine-Tuning - 微调17)Image Classification - 图像分类18)Object Detection - 物体检测19)Semantic Segmentation - 语义分割20)Instance Segmentation - 实例分割21)Generative Adversarial Network (GAN) - 生成对抗网络22)Image Generation - 图像生成23)Style Transfer - 风格迁移24)Convolutional Autoencoder - 卷积自编码器25)Recurrent Neural Network (RNN) - 循环神经网络•Recurrent Neural Network (RNN) - 循环神经网络1)Recurrent Neural Network (RNN) - 循环神经网络2)Long Short-Term Memory (LSTM) - 长短期记忆网络3)Gated Recurrent Unit (GRU) - 门控循环单元4)Sequence Modeling - 序列建模5)Time Series Prediction - 时间序列预测6)Natural Language Processing (NLP) - 自然语言处理7)Text Generation - 文本生成8)Sentiment Analysis - 情感分析9)Named Entity Recognition (NER) - 命名实体识别10)Part-of-Speech Tagging (POS Tagging) - 词性标注11)Sequence-to-Sequence (Seq2Seq) - 序列到序列12)Attention Mechanism - 注意力机制13)Encoder-Decoder Architecture - 编码器-解码器架构14)Bidirectional RNN - 双向循环神经网络15)Teacher Forcing - 强制教师法16)Backpropagation Through Time (BPTT) - 通过时间的反向传播17)Vanishing Gradient Problem - 梯度消失问题18)Exploding Gradient Problem - 梯度爆炸问题19)Language Modeling - 语言建模20)Speech Recognition - 语音识别•Long Short-Term Memory (LSTM) - 长短期记忆网络1)Long Short-Term Memory (LSTM) - 长短期记忆网络2)Cell State - 细胞状态3)Hidden State - 隐藏状态4)Forget Gate - 遗忘门5)Input Gate - 输入门6)Output Gate - 输出门7)Peephole Connections - 窥视孔连接8)Gated Recurrent Unit (GRU) - 门控循环单元9)Vanishing Gradient Problem - 梯度消失问题10)Exploding Gradient Problem - 梯度爆炸问题11)Sequence Modeling - 序列建模12)Time Series Prediction - 时间序列预测13)Natural Language Processing (NLP) - 自然语言处理14)Text Generation - 文本生成15)Sentiment Analysis - 情感分析16)Named Entity Recognition (NER) - 命名实体识别17)Part-of-Speech Tagging (POS Tagging) - 词性标注18)Attention Mechanism - 注意力机制19)Encoder-Decoder Architecture - 编码器-解码器架构20)Bidirectional LSTM - 双向长短期记忆网络•Attention Mechanism - 注意力机制1)Attention Mechanism - 注意力机制2)Self-Attention - 自注意力3)Multi-Head Attention - 多头注意力4)Transformer - 变换器5)Query - 查询6)Key - 键7)Value - 值8)Query-Value Attention - 查询-值注意力9)Dot-Product Attention - 点积注意力10)Scaled Dot-Product Attention - 缩放点积注意力11)Additive Attention - 加性注意力12)Context Vector - 上下文向量13)Attention Score - 注意力分数14)SoftMax Function - SoftMax函数15)Attention Weight - 注意力权重16)Global Attention - 全局注意力17)Local Attention - 局部注意力18)Positional Encoding - 位置编码19)Encoder-Decoder Attention - 编码器-解码器注意力20)Cross-Modal Attention - 跨模态注意力•Generative Adversarial Network (GAN) - 生成对抗网络1)Generative Adversarial Network (GAN) - 生成对抗网络2)Generator - 生成器3)Discriminator - 判别器4)Adversarial Training - 对抗训练5)Minimax Game - 极小极大博弈6)Nash Equilibrium - 纳什均衡7)Mode Collapse - 模式崩溃8)Training Stability - 训练稳定性9)Loss Function - 损失函数10)Discriminative Loss - 判别损失11)Generative Loss - 生成损失12)Wasserstein GAN (WGAN) - Wasserstein GAN(WGAN)13)Deep Convolutional GAN (DCGAN) - 深度卷积生成对抗网络(DCGAN)14)Conditional GAN (c GAN) - 条件生成对抗网络(c GAN)15)Style GAN - 风格生成对抗网络16)Cycle GAN - 循环生成对抗网络17)Progressive Growing GAN (PGGAN) - 渐进式增长生成对抗网络(PGGAN)18)Self-Attention GAN (SAGAN) - 自注意力生成对抗网络(SAGAN)19)Big GAN - 大规模生成对抗网络20)Adversarial Examples - 对抗样本•Encoder-Decoder - 编码器-解码器1)Encoder-Decoder Architecture - 编码器-解码器架构2)Encoder - 编码器3)Decoder - 解码器4)Sequence-to-Sequence Model (Seq2Seq) - 序列到序列模型5)State Vector - 状态向量6)Context Vector - 上下文向量7)Hidden State - 隐藏状态8)Attention Mechanism - 注意力机制9)Teacher Forcing - 强制教师法10)Beam Search - 束搜索11)Recurrent Neural Network (RNN) - 循环神经网络12)Long Short-Term Memory (LSTM) - 长短期记忆网络13)Gated Recurrent Unit (GRU) - 门控循环单元14)Bidirectional Encoder - 双向编码器15)Greedy Decoding - 贪婪解码16)Masking - 遮盖17)Dropout - 随机失活18)Embedding Layer - 嵌入层19)Cross-Entropy Loss - 交叉熵损失20)Tokenization - 令牌化•Transfer Learning - 迁移学习1)Transfer Learning - 迁移学习2)Source Domain - 源领域3)Target Domain - 目标领域4)Fine-Tuning - 微调5)Domain Adaptation - 领域自适应6)Pre-Trained Model - 预训练模型7)Feature Extraction - 特征提取8)Knowledge Transfer - 知识迁移9)Unsupervised Domain Adaptation - 无监督领域自适应10)Semi-Supervised Domain Adaptation - 半监督领域自适应11)Multi-Task Learning - 多任务学习12)Data Augmentation - 数据增强13)Task Transfer - 任务迁移14)Model Agnostic Meta-Learning (MAML) - 与模型无关的元学习(MAML)15)One-Shot Learning - 单样本学习16)Zero-Shot Learning - 零样本学习17)Few-Shot Learning - 少样本学习18)Knowledge Distillation - 知识蒸馏19)Representation Learning - 表征学习20)Adversarial Transfer Learning - 对抗迁移学习•Pre-trained Models - 预训练模型1)Pre-trained Model - 预训练模型2)Transfer Learning - 迁移学习3)Fine-Tuning - 微调4)Knowledge Transfer - 知识迁移5)Domain Adaptation - 领域自适应6)Feature Extraction - 特征提取7)Representation Learning - 表征学习8)Language Model - 语言模型9)Bidirectional Encoder Representations from Transformers (BERT) - 双向编码器结构转换器10)Generative Pre-trained Transformer (GPT) - 生成式预训练转换器11)Transformer-based Models - 基于转换器的模型12)Masked Language Model (MLM) - 掩蔽语言模型13)Cloze Task - 填空任务14)Tokenization - 令牌化15)Word Embeddings - 词嵌入16)Sentence Embeddings - 句子嵌入17)Contextual Embeddings - 上下文嵌入18)Self-Supervised Learning - 自监督学习19)Large-Scale Pre-trained Models - 大规模预训练模型•Loss Function - 损失函数1)Loss Function - 损失函数2)Mean Squared Error (MSE) - 均方误差3)Mean Absolute Error (MAE) - 平均绝对误差4)Cross-Entropy Loss - 交叉熵损失5)Binary Cross-Entropy Loss - 二元交叉熵损失6)Categorical Cross-Entropy Loss - 分类交叉熵损失7)Hinge Loss - 合页损失8)Huber Loss - Huber损失9)Wasserstein Distance - Wasserstein距离10)Triplet Loss - 三元组损失11)Contrastive Loss - 对比损失12)Dice Loss - Dice损失13)Focal Loss - 焦点损失14)GAN Loss - GAN损失15)Adversarial Loss - 对抗损失16)L1 Loss - L1损失17)L2 Loss - L2损失18)Huber Loss - Huber损失19)Quantile Loss - 分位数损失•Activation Function - 激活函数1)Activation Function - 激活函数2)Sigmoid Function - Sigmoid函数3)Hyperbolic Tangent Function (Tanh) - 双曲正切函数4)Rectified Linear Unit (Re LU) - 矩形线性单元5)Parametric Re LU (P Re LU) - 参数化Re LU6)Exponential Linear Unit (ELU) - 指数线性单元7)Swish Function - Swish函数8)Softplus Function - Soft plus函数9)Softmax Function - SoftMax函数10)Hard Tanh Function - 硬双曲正切函数11)Softsign Function - Softsign函数12)GELU (Gaussian Error Linear Unit) - GELU(高斯误差线性单元)13)Mish Function - Mish函数14)CELU (Continuous Exponential Linear Unit) - CELU(连续指数线性单元)15)Bent Identity Function - 弯曲恒等函数16)Gaussian Error Linear Units (GELUs) - 高斯误差线性单元17)Adaptive Piecewise Linear (APL) - 自适应分段线性函数18)Radial Basis Function (RBF) - 径向基函数•Backpropagation - 反向传播1)Backpropagation - 反向传播2)Gradient Descent - 梯度下降3)Partial Derivative - 偏导数4)Chain Rule - 链式法则5)Forward Pass - 前向传播6)Backward Pass - 反向传播7)Computational Graph - 计算图8)Neural Network - 神经网络9)Loss Function - 损失函数10)Gradient Calculation - 梯度计算11)Weight Update - 权重更新12)Activation Function - 激活函数13)Optimizer - 优化器14)Learning Rate - 学习率15)Mini-Batch Gradient Descent - 小批量梯度下降16)Stochastic Gradient Descent (SGD) - 随机梯度下降17)Batch Gradient Descent - 批量梯度下降18)Momentum - 动量19)Adam Optimizer - Adam优化器20)Learning Rate Decay - 学习率衰减•Gradient Descent - 梯度下降1)Gradient Descent - 梯度下降2)Stochastic Gradient Descent (SGD) - 随机梯度下降3)Mini-Batch Gradient Descent - 小批量梯度下降4)Batch Gradient Descent - 批量梯度下降5)Learning Rate - 学习率6)Momentum - 动量7)Adaptive Moment Estimation (Adam) - 自适应矩估计8)RMSprop - 均方根传播9)Learning Rate Schedule - 学习率调度10)Convergence - 收敛11)Divergence - 发散12)Adagrad - 自适应学习速率方法13)Adadelta - 自适应增量学习率方法14)Adamax - 自适应矩估计的扩展版本15)Nadam - Nesterov Accelerated Adaptive Moment Estimation16)Learning Rate Decay - 学习率衰减17)Step Size - 步长18)Conjugate Gradient Descent - 共轭梯度下降19)Line Search - 线搜索20)Newton's Method - 牛顿法•Learning Rate - 学习率1)Learning Rate - 学习率2)Adaptive Learning Rate - 自适应学习率3)Learning Rate Decay - 学习率衰减4)Initial Learning Rate - 初始学习率5)Step Size - 步长6)Momentum - 动量7)Exponential Decay - 指数衰减8)Annealing - 退火9)Cyclical Learning Rate - 循环学习率10)Learning Rate Schedule - 学习率调度11)Warm-up - 预热12)Learning Rate Policy - 学习率策略13)Learning Rate Annealing - 学习率退火14)Cosine Annealing - 余弦退火15)Gradient Clipping - 梯度裁剪16)Adapting Learning Rate - 适应学习率17)Learning Rate Multiplier - 学习率倍增器18)Learning Rate Reduction - 学习率降低19)Learning Rate Update - 学习率更新20)Scheduled Learning Rate - 定期学习率•Batch Size - 批量大小1)Batch Size - 批量大小2)Mini-Batch - 小批量3)Batch Gradient Descent - 批量梯度下降4)Stochastic Gradient Descent (SGD) - 随机梯度下降5)Mini-Batch Gradient Descent - 小批量梯度下降6)Online Learning - 在线学习7)Full-Batch - 全批量8)Data Batch - 数据批次9)Training Batch - 训练批次10)Batch Normalization - 批量归一化11)Batch-wise Optimization - 批量优化12)Batch Processing - 批量处理13)Batch Sampling - 批量采样14)Adaptive Batch Size - 自适应批量大小15)Batch Splitting - 批量分割16)Dynamic Batch Size - 动态批量大小17)Fixed Batch Size - 固定批量大小18)Batch-wise Inference - 批量推理19)Batch-wise Training - 批量训练20)Batch Shuffling - 批量洗牌•Epoch - 训练周期1)Training Epoch - 训练周期2)Epoch Size - 周期大小3)Early Stopping - 提前停止4)Validation Set - 验证集5)Training Set - 训练集6)Test Set - 测试集7)Overfitting - 过拟合8)Underfitting - 欠拟合9)Model Evaluation - 模型评估10)Model Selection - 模型选择11)Hyperparameter Tuning - 超参数调优12)Cross-Validation - 交叉验证13)K-fold Cross-Validation - K折交叉验证14)Stratified Cross-Validation - 分层交叉验证15)Leave-One-Out Cross-Validation (LOOCV) - 留一法交叉验证16)Grid Search - 网格搜索17)Random Search - 随机搜索18)Model Complexity - 模型复杂度19)Learning Curve - 学习曲线20)Convergence - 收敛3.Machine Learning Techniques and Algorithms (机器学习技术与算法)•Decision Tree - 决策树1)Decision Tree - 决策树2)Node - 节点3)Root Node - 根节点4)Leaf Node - 叶节点5)Internal Node - 内部节点6)Splitting Criterion - 分裂准则7)Gini Impurity - 基尼不纯度8)Entropy - 熵9)Information Gain - 信息增益10)Gain Ratio - 增益率11)Pruning - 剪枝12)Recursive Partitioning - 递归分割13)CART (Classification and Regression Trees) - 分类回归树14)ID3 (Iterative Dichotomiser 3) - 迭代二叉树315)C4.5 (successor of ID3) - C4.5(ID3的后继者)16)C5.0 (successor of C4.5) - C5.0(C4.5的后继者)17)Split Point - 分裂点18)Decision Boundary - 决策边界19)Pruned Tree - 剪枝后的树20)Decision Tree Ensemble - 决策树集成•Random Forest - 随机森林1)Random Forest - 随机森林2)Ensemble Learning - 集成学习3)Bootstrap Sampling - 自助采样4)Bagging (Bootstrap Aggregating) - 装袋法5)Out-of-Bag (OOB) Error - 袋外误差6)Feature Subset - 特征子集7)Decision Tree - 决策树8)Base Estimator - 基础估计器9)Tree Depth - 树深度10)Randomization - 随机化11)Majority Voting - 多数投票12)Feature Importance - 特征重要性13)OOB Score - 袋外得分14)Forest Size - 森林大小15)Max Features - 最大特征数16)Min Samples Split - 最小分裂样本数17)Min Samples Leaf - 最小叶节点样本数18)Gini Impurity - 基尼不纯度19)Entropy - 熵20)Variable Importance - 变量重要性•Support Vector Machine (SVM) - 支持向量机1)Support Vector Machine (SVM) - 支持向量机2)Hyperplane - 超平面3)Kernel Trick - 核技巧4)Kernel Function - 核函数5)Margin - 间隔6)Support Vectors - 支持向量7)Decision Boundary - 决策边界8)Maximum Margin Classifier - 最大间隔分类器9)Soft Margin Classifier - 软间隔分类器10) C Parameter - C参数11)Radial Basis Function (RBF) Kernel - 径向基函数核12)Polynomial Kernel - 多项式核13)Linear Kernel - 线性核14)Quadratic Kernel - 二次核15)Gaussian Kernel - 高斯核16)Regularization - 正则化17)Dual Problem - 对偶问题18)Primal Problem - 原始问题19)Kernelized SVM - 核化支持向量机20)Multiclass SVM - 多类支持向量机•K-Nearest Neighbors (KNN) - K-最近邻1)K-Nearest Neighbors (KNN) - K-最近邻2)Nearest Neighbor - 最近邻3)Distance Metric - 距离度量4)Euclidean Distance - 欧氏距离5)Manhattan Distance - 曼哈顿距离6)Minkowski Distance - 闵可夫斯基距离7)Cosine Similarity - 余弦相似度8)K Value - K值9)Majority Voting - 多数投票10)Weighted KNN - 加权KNN11)Radius Neighbors - 半径邻居12)Ball Tree - 球树13)KD Tree - KD树14)Locality-Sensitive Hashing (LSH) - 局部敏感哈希15)Curse of Dimensionality - 维度灾难16)Class Label - 类标签17)Training Set - 训练集18)Test Set - 测试集19)Validation Set - 验证集20)Cross-Validation - 交叉验证•Naive Bayes - 朴素贝叶斯1)Naive Bayes - 朴素贝叶斯2)Bayes' Theorem - 贝叶斯定理3)Prior Probability - 先验概率4)Posterior Probability - 后验概率5)Likelihood - 似然6)Class Conditional Probability - 类条件概率7)Feature Independence Assumption - 特征独立假设8)Multinomial Naive Bayes - 多项式朴素贝叶斯9)Gaussian Naive Bayes - 高斯朴素贝叶斯10)Bernoulli Naive Bayes - 伯努利朴素贝叶斯11)Laplace Smoothing - 拉普拉斯平滑12)Add-One Smoothing - 加一平滑13)Maximum A Posteriori (MAP) - 最大后验概率14)Maximum Likelihood Estimation (MLE) - 最大似然估计15)Classification - 分类16)Feature Vectors - 特征向量17)Training Set - 训练集18)Test Set - 测试集19)Class Label - 类标签20)Confusion Matrix - 混淆矩阵•Clustering - 聚类1)Clustering - 聚类2)Centroid - 质心3)Cluster Analysis - 聚类分析4)Partitioning Clustering - 划分式聚类5)Hierarchical Clustering - 层次聚类6)Density-Based Clustering - 基于密度的聚类7)K-Means Clustering - K均值聚类8)K-Medoids Clustering - K中心点聚类9)DBSCAN (Density-Based Spatial Clustering of Applications with Noise) - 基于密度的空间聚类算法10)Agglomerative Clustering - 聚合式聚类11)Dendrogram - 系统树图12)Silhouette Score - 轮廓系数13)Elbow Method - 肘部法则14)Clustering Validation - 聚类验证15)Intra-cluster Distance - 类内距离16)Inter-cluster Distance - 类间距离17)Cluster Cohesion - 类内连贯性18)Cluster Separation - 类间分离度19)Cluster Assignment - 聚类分配20)Cluster Label - 聚类标签•K-Means - K-均值1)K-Means - K-均值2)Centroid - 质心3)Cluster - 聚类4)Cluster Center - 聚类中心5)Cluster Assignment - 聚类分配6)Cluster Analysis - 聚类分析7)K Value - K值8)Elbow Method - 肘部法则9)Inertia - 惯性10)Silhouette Score - 轮廓系数11)Convergence - 收敛12)Initialization - 初始化13)Euclidean Distance - 欧氏距离14)Manhattan Distance - 曼哈顿距离15)Distance Metric - 距离度量16)Cluster Radius - 聚类半径17)Within-Cluster Variation - 类内变异18)Cluster Quality - 聚类质量19)Clustering Algorithm - 聚类算法20)Clustering Validation - 聚类验证•Dimensionality Reduction - 降维1)Dimensionality Reduction - 降维2)Feature Extraction - 特征提取3)Feature Selection - 特征选择4)Principal Component Analysis (PCA) - 主成分分析5)Singular Value Decomposition (SVD) - 奇异值分解6)Linear Discriminant Analysis (LDA) - 线性判别分析7)t-Distributed Stochastic Neighbor Embedding (t-SNE) - t-分布随机邻域嵌入8)Autoencoder - 自编码器9)Manifold Learning - 流形学习10)Locally Linear Embedding (LLE) - 局部线性嵌入11)Isomap - 等度量映射12)Uniform Manifold Approximation and Projection (UMAP) - 均匀流形逼近与投影13)Kernel PCA - 核主成分分析14)Non-negative Matrix Factorization (NMF) - 非负矩阵分解15)Independent Component Analysis (ICA) - 独立成分分析16)Variational Autoencoder (VAE) - 变分自编码器17)Sparse Coding - 稀疏编码18)Random Projection - 随机投影19)Neighborhood Preserving Embedding (NPE) - 保持邻域结构的嵌入20)Curvilinear Component Analysis (CCA) - 曲线成分分析•Principal Component Analysis (PCA) - 主成分分析1)Principal Component Analysis (PCA) - 主成分分析2)Eigenvector - 特征向量3)Eigenvalue - 特征值4)Covariance Matrix - 协方差矩阵。

对深度学习的认识英文作文1. Deep learning is an incredibly powerful tool in the field of artificial intelligence. It allows machines to learn and make decisions in a way that is similar to how humans do. By analyzing and processing large amounts of data, deep learning algorithms can identify patterns and make predictions, leading to breakthroughs in various industries.2. One of the key features of deep learning is its ability to automatically extract features from raw data. This means that instead of relying on handcrafted features, deep learning models can learn directly from the data itself. This not only saves time and effort but also allows for more accurate and robust models.3. Deep learning models are often built using neural networks, which are inspired by the structure and function of the human brain. These networks consist of interconnected layers of artificial neurons that processand transmit information. By adjusting the weights and biases of these neurons, the network can learn and improve its performance over time.4. Another advantage of deep learning is its ability to handle unstructured data. Traditional machine learning algorithms often struggle with data such as images, audio, and text, as they require manual feature engineering. Deep learning, on the other hand, can directly process raw data and extract meaningful information, making it well-suitedfor tasks like image recognition, speech recognition, and natural language processing.5. However, deep learning also has its limitations. One major challenge is the need for large amounts of labeled data to train the models effectively. This can be a time-consuming and expensive process, especially in domainswhere obtaining labeled data is difficult or costly. Additionally, deep learning models can be computationally intensive and require powerful hardware to train and deploy.6. Despite these challenges, deep learning has alreadymade significant contributions in various fields. It has revolutionized computer vision, enabling machines to recognize objects, faces, and even emotions in images and videos. It has also improved speech recognition systems, making voice assistants like Siri and Alexa more accurate and responsive.7. Looking ahead, the potential applications of deep learning are vast. It has the potential to transform healthcare by aiding in the diagnosis of diseases and the development of personalized treatment plans. It can also enhance autonomous vehicles, making them safer and more efficient. The possibilities are endless, and as researchers continue to push the boundaries of deep learning, we can expect even more exciting advancements in the future.8. In conclusion, deep learning is a game-changing technology that has the potential to revolutionize many industries. Its ability to automatically extract features, handle unstructured data, and learn from large amounts of data make it a powerful tool in the field of artificialintelligence. While there are challenges to overcome, the future of deep learning looks promising, and we can expect to see even more groundbreaking applications in the years to come.。

DeepLearning论⽂翻译(NatureDeepReview)原论⽂出处:by Yann LeCun, Yoshua Bengio & Geoffrey HintonNature volume521, pages436–444 (28 May 2015)译者:零楚L()这篇论⽂性质为深度学习的综述,原本只是想做做笔记,但找到的翻译都不怎么通顺。

既然要啃原⽂献,索性就做个翻译,尽⼒准确通畅。

转载使⽤请注明本⽂出处,当然实在不注明我也并没有什么办法。

论⽂中⼤量使⽤貌似作者默认术语但⼜难以赋予合适中⽂意义或会造成歧义的词语及其在本⽂中将采⽤的固定翻译有:representation-“特征描述”objective function/objective-“误差函数/误差” 本意该是⽬标函数/评价函数但实际应⽤中都是使⽤的cost function+正则化项作为⽬标函数(参考链接:)所以本⽂直接将其意为误差函数便于理解的同时并不会影响理解的正确性这种量的翻译写下⾯这句话会不会太⾃⼤了点,不过应该也没关系吧。

看过这么多书⾥接受批评指正的谦辞,张宇⽼师版本的最为印象深刻:我⽆意以“⽔平有限”为遁词,诚⼼接受批评指正。

那么,让我们开始这⼀切吧。

Nature Deep Review摘要:深度学习能让那些具有多个处理层的计算模型学习如何表⽰⾼度抽象的数据。

这些⽅法显著地促进了⼀些领域最前沿技术的发展,包括语⾳识别,视觉对象识别,对象检测和如药物鉴定、基因组学等其它很多领域。

深度学习使⽤⼀种后向传播算法(BackPropagation algorithm,通常称为BP算法)来指导机器如何让每个处理层根据它们⾃⾝前⾯的处理层的“特征描述”改变⽤于计算本层“特征描述”的参数,由此深度学习可以发现所给数据集的复杂结构。

深度卷积⽹络为图像、视频、语⾳和⾳频的处理领域都带来了突破,⽽递归⽹络(或更多译为循环神经⽹络)则在处理⽂本、语⾳等序列数据⽅⾯展现出潜⼒。

英语六级翻译必背动词今天为大家及时带来英语四六级考试相关资讯及备考技巧。

也为大家整理了大学英语六级翻译高频词汇,一起来看看吧!一、动词(18组)1. 强调stress/emphasize/underline例句:他们强调,人们应当读好书,尤其是经典著作。

They emphasize that people should read good books, especially the classics.2. 象征symbolize/signify/stand for例句:在中国文化中,红色通常象征着好运、长寿和幸福。

Red usually symbolizes fortune, longevity and happiness in Chinese culture.3. 变成、改变、演变become/change into/develop to/evolve into例句:珠江三角洲已成为中国和世界主要经济区域之一。

The Pearl River Delta has become one of the main economic zones in China and the world.4. 考虑consider/think about/take…into account/think over例句:但是好的烹饪都有一个共同点,总是要考虑到颜色、味道、口感和营养。

But good cooking shares one thing in common—always taking color,flavor, taste and nutrition into account.5. 举办、举行hold/conduct例句:今年在长沙举行了一年一度的外国人汉语演讲比赛。

The annual Chinese speech contest for foreigners was held in Changsha this year.6. 使用、利用use/utilize/make use of例句:核能是可以安全开发和利用的。

深度学习论⽂翻译解析(六):MobileNets:EfficientConvolution。

论⽂标题:MobileNets:Efficient Convolutional Neural Networks for Mobile Vision Appliications论⽂作者:Andrew G.Howard Menglong Zhu Bo Chen .....代码地址:声明:⼩编翻译论⽂仅为学习,如有侵权请联系⼩编删除博⽂,谢谢!⼩编是⼀个机器学习初学者,打算认真学习论⽂,但是英⽂⽔平有限,所以论⽂翻译中⽤到了Google,并⾃⼰逐句检查过,但还是会有显得晦涩的地⽅,如有语法/专业名词翻译错误,还请见谅,并欢迎及时指出。

如果需要⼩编其他论⽂翻译,请移步⼩编的GitHub地址 传送门:摘要 我们提出⼀个在移动端和嵌⼊式应⽤⾼效的分类模型叫做MobileNets,MobileNets基于流线型架构(streamlined),使⽤深度可分类卷积(depthwise separable convolutions,即Xception 变体结构)来构建轻量级深度神经⽹络。

我们介绍两个简单的全局超参数,可有效的在延迟和准确率之间做折中。

这些超参数允许我们依据约束条件选择合适⼤⼩的模型。

论⽂测试在多个参数量下做了⼴泛的实验,并在ImageNet分类任务上与其他先进模型做了对⽐,显⽰了强⼤的性能。

论⽂验证了模型在其他领域(对象检测,⼈脸识别,⼤规模地理定位等)使⽤的有效性。

1,引⾔ ⾃从AlexNet [19]通过赢得ImageNet挑战:ILSVRC 2012 [24]来推⼴深度卷积神经⽹络以来,卷积神经⽹络就已经在计算机视觉中变得⽆处不在。

总的趋势是建⽴更深,更复杂的⽹络以实现更⾼的准确性[27、31、29、8]。

但是,这些提⾼准确性的进步并不⼀定会使⽹络在⼤⼩和速度⽅⾯更加⾼效。

在许多现实世界的应⽤程序中,例如机器⼈技术,⾃动驾驶汽车和增强现实,识别任务需要在计算受限的平台上及时执⾏。

deep learning英语作文English:Deep learning is a subset of machine learning, which is a type of artificial intelligence (AI) that involves the use of neural networks to interpret data. These neural networks are designed to simulate the way a human brain operates, capable of processing and learning from large sets of input data to make complex decisions. Deep learning algorithms are able to automatically detect patterns and features within the data, making it particularly useful in fields such as image and speech recognition, natural language processing, and autonomous vehicles. One of the key advantages of deep learning is its ability to continuously improve its performance through exposure to more data, making it a powerful tool for solving complex problems in various industries.中文翻译:深度学习是机器学习的子集,是一种涉及使用神经网络来解释数据的人工智能(AI)类型。

原文摘要:深度学习可以让那些拥有多个处理层的计算模型来学习具有多层次抽象的数据的表示。

这些方法在许多方面都带来了显著的改善,包括最先进的语音识别、视觉对象识别、对象检测和许多其它领域,例如药物发现和基因组学等。

深度学习能够发现大数据中的复杂结构。

它是利用BP算法来完成这个发现过程的。

BP算法能够指导机器如何从前一层获取误差而改变本层的内部参数,这些内部参数可以用于计算表示。

深度卷积网络在处理图像、视频、语音和音频方面带来了突破,而递归网络在处理序列数据,比如文本和语音方面表现出了闪亮的一面。

机器学习技术在现代社会的各个方面表现出了强大的功能:从Web搜索到社会网络内容过滤,再到电子商务网站上的商品推荐都有涉足。

并且它越来越多地出现在消费品中,比如相机和智能手机。

机器学习系统被用来识别图片中的目标,将语音转换成文本,匹配新闻元素,根据用户兴趣提供职位或产品,选择相关的搜索结果。

逐渐地,这些应用使用一种叫深度学习的技术。

传统的机器学习技术在处理未加工过的数据时,体现出来的能力是有限的。

几十年来,想要构建一个模式识别系统或者机器学习系统,需要一个精致的引擎和相当专业的知识来设计一个特征提取器,把原始数据(如图像的像素值)转换成一个适当的内部特征表示或特征向量,子学习系统,通常是一个分类器,对输入的样本进行检测或分类。

特征表示学习是一套给机器灌入原始数据,然后能自动发现需要进行检测和分类的表达的方法。

深度学习就是一种特征学习方法,把原始数据通过一些简单的但是非线性的模型转变成为更高层次的,更加抽象的表达。

通过足够多的转换的组合,非常复杂的函数也可以被学习。

对于分类任务,高层次的表达能够强化输入数据的区分能力方面,同时削弱不相关因素。

比如,一副图像的原始格式是一个像素数组,那么在第一层上的学习特征表达通常指的是在图像的特定位置和方向上有没有边的存在。

第二层通常会根据那些边的某些排放而来检测图案,这时候会忽略掉一些边上的一些小的干扰。

第三层或许会把那些图案进行组合,从而使其对应于熟悉目标的某部分。

随后的一些层会将这些部分再组合,从而构成待检测目标。

深度学习的核心方面是,上述各层的特征都不是利用人工工程来设计的,而是使用一种通用的学习过程从数据中学到的。

深度学习正在取得重大进展,解决了人工智能界的尽最大努力很多年仍没有进展的问题。

它已经被证明,它能够擅长发现高维数据中的复杂结构,因此它能够被应用于科学、商业和政府等领域。

除了在图像识别、语音识别等领域打破了纪录,它还在另外的领域击败了其他机器学习技术,包括预测潜在的药物分子的活性、分析粒子加速器数据、重建大脑回路、预测在非编码DNA突变对基因表达和疾病的影响。

也许更令人惊讶的是,深度学习在自然语言理解的各项任务中产生了非常可喜的成果,特别是主题分类、情感分析、自动问答和语言翻译。

我们认为,在不久的将来,深度学习将会取得更多的成功,因为它需要很少的手工工程,它可以很容易受益于可用计算能力和数据量的增加。

目前正在为深度神经网络开发的新的学习算法和架构只会加速这一进程。

监督学习机器学习中,不论是否是深层,最常见的形式是监督学习。

试想一下,我们要建立一个系统,它能够对一个包含了一座房子、一辆汽车、一个人或一个宠物的图像进行分类。

我们先收集大量的房子,汽车,人与宠物的图像的数据集,并对每个对象标上它的类别。

在训练期间,机器会获取一副图片,然后产生一个输出,这个输出以向量形式的分数来表示,每个类别都有一个这样的向量。

我们希望所需的类别在所有的类别中具有最高的得分,但是这在训练之前是不太可能发生的。

通过计算一个目标函数可以获得输出分数和期望模式分数之间的误差(或距离)。

然后机器会修改其内部可调参数,以减少这种误差。

这些可调节的参数,通常被称为权值,它们是一些实数,可以被看作是一些“旋钮”,定义了机器的输入输出功能。

在典型的深学习系统中,有可能有数以百万计的样本和权值,和带有标签的样本,用来训练机器。

为了正确地调整权值向量,该学习算法计算每个权值的梯度向量,表示了如果权值增加了一个很小的量,那么误差会增加或减少的量。

权值向量然后在梯度矢量的相反方向上进行调整。

我们的目标函数,所有训练样本的平均,可以被看作是一种在权值的高维空间上的多变地形。

负的梯度矢量表示在该地形中下降方向最快,使其更接近于最小值,也就是平均输出误差低最低的地方。

在实际应用中,大部分从业者都使用一种称作随机梯度下降的算法(SGD)。

它包含了提供一些输入向量样本,计算输出和误差,计算这些样本的平均梯度,然后相应的调整权值。

通过提供小的样本集合来重复这个过程用以训练网络,直到目标函数停止增长。

它被称为随机的是因为小的样本集对于全体样本的平均梯度来说会有噪声估计。

这个简单过程通常会找到一组不错的权值,同其他精心设计的优化技术相比,它的速度让人惊奇。

训练结束之后,系统会通过不同的数据样本——测试集来显示系统的性能。

这用于测试机器的泛化能力——对于未训练过的新样本的识别能力。

当前应用中的许多机器学习技术使用的是线性分类器来对人工提取的特征进行分类。

一个2类线性分类器会计算特征向量的加权和。

当加权和超过一个阈值之后,输入样本就会被分配到一个特定的类别中。

从20世纪60年代开始,我们就知道了线性分类器只能够把样本分成非常简单的区域,也就是说通过一个超平面把空间分成两部分。

但像图像和语音识别等问题,它们需要的输入-输出函数要对输入样本中不相关因素的变化不要过于的敏感,如位置的变化,目标的方向或光照,或者语音中音调或语调的变化等,但是需要对于一些特定的微小变化非常敏感(例如,一只白色的狼和跟狼类似的白色狗——萨莫耶德犬之间的差异)。

在像素这一级别上,两条萨莫耶德犬在不同的姿势和在不同的环境下的图像可以说差异是非常大的,然而,一只萨摩耶德犬和一只狼在相同的位置并在相似背景下的两个图像可能就非常类似。

图1 多层神经网络和BP算法1.多层神经网络(用连接点表示)可以对输入空间进行整合,使得数据(红色和蓝色线表示的样本)线性可分。

注意输入空间中的规则网格(左侧)是如何被隐藏层转换的(转换后的在右侧)。

这个例子中只用了两个输入节点,两个隐藏节点和一个输出节点,但是用于目标识别或自然语言处理的网络通常包含数十个或者数百个这样的节点。

获得C.Olah*单个隐藏层的意义隐藏层的意义就是把输入数据的特征,抽象到另一个维度空间,来展现其更抽象化的特征,这些特征能更好的进行线性划分*2.(http://colah.github.io/)的许可后重新构建的这个图。

3.链式法则告诉我们两个小的变化(x和y的微小变化,以及y和z的微小变化)是怎样组织到一起的。

x的微小变化量Δx首先会通过乘以∂y/∂x(偏导数)转变成y的变化量Δy。

类似的,Δy会给z带来改变Δz。

通过链式法则可以将一个方程转化到另外的一个——也就是Δx通过乘以∂y/∂x和∂z/∂y(英文原文为∂z/∂x,系笔误——编辑注)得到Δz的过程。

当x,y,z是向量的时候,可以同样处理(使用雅克比矩阵)。

4.具有两个隐层一个输出层的神经网络中计算前向传播的公式。

每个都有一个模块构成,用于反向传播梯度。

在每一层上,我们首先计算每个节点的总输入z,z是前一层输出的加权和。

然后利用一个非线性函数f(.)来计算节点的输出。

简单期间,我们忽略掉了阈值项。

神经网络中常用的非线性函数包括了最近几年常用的校正线性单元(ReLU)f(z) = max(0,z),和更多传统sigmoid函数,比如双曲线正切函数f(z) = (exp(z) − exp(−z))/(exp(z) +exp(−z)) 和logistic函数f(z) = 1/(1 + exp(−z))。

计算反向传播的公式。

在隐层,我们计算每个输出单元产生的误差,这是由上一层产生的误差的加权和。

然后我们将输出层的误差通过乘以梯度f(z)转换到输入层。

在输出层上,每个节点的误差会用成本函数的微分来计算。

如果节点l的成本函数是0.5*(yl-tl)^2, 那么节点的误差就是yl-tl,其中tl是期望值。

一旦知道了∂E/∂zk的值,节点j的内星权向量wjk就可以通过yj ∂E/∂zk来进行调整。

一个线性分类器或者其他操作在原始像素上的浅层分类器不能够区分后两者,虽然能够将前者归为同一类。

这就是为什么浅分类要求有良好的特征提取器用于解决选择性不变性困境——提取器会挑选出图像中能够区分目标的那些重要因素,但是这些因素对于分辨动物的位置就无能为力了。

为了加强分类能力,可以使用泛化的非线性特性,如核方法,但这些泛化特征,比如通过高斯核得到的,并不能够使得学习器从学习样本中产生较好的泛化效果。

传统的方法是手工设计良好的特征提取器,这需要大量的工程技术和专业领域知识。

但是如果通过使用通用学习过程而得到良好的特征,那么这些都是可以避免的了。

这就是深度学习的关键优势。

深度学习的体系结构是简单模块的多层栈,所有(或大部分)模块的目标是学习,还有许多计算非线性输入输出的映射。

栈中的每个模块将其输入进行转换,以增加表达的可选择性和不变性。

比如说,具有一个5到20层的非线性多层系统能够实现非常复杂的功能,比如输入数据对细节非常敏感——能够区分白狼和萨莫耶德犬,同时又具有强大的抗干扰能力,比如可以忽略掉不同的背景、姿势、光照和周围的物体等。

反向传播来训练多层神经网络在最早期的模式识别任务中,研究者的目标一直是使用可以训练的多层网络来替代经过人工选择的特征,虽然使用多层神经网络很简单,但是得出来的解很糟糕。

直到20世纪80年代,使用简单的随机梯度下降来训练多层神经网络,这种糟糕的情况才有所改变。

只要网络的输入和内部权值之间的函数相对平滑,使用梯度下降就凑效,梯度下降方法是在70年代到80年代期间由不同的研究团队独立发明的。

用来求解目标函数关于多层神经网络权值梯度的反向传播算法(BP)只是一个用来求导的链式法则的具体应用而已。

反向传播算法的核心思想是:目标函数对于某层输入的导数(或者梯度)可以通过向后传播对该层输出(或者下一层输入)的导数求得(如图1)。

反向传播算法可以被重复的用于传播梯度通过多层神经网络的每一层:从该多层神经网络的最顶层的输出(也就是改网络产生预测的那一层)一直到该多层神经网络的最底层(也就是被接受外部输入的那一层),一旦这些关于(目标函数对)每层输入的导数求解完,我们就可以求解每一层上面的(目标函数对)权值的梯度了。

很多深度学习的应用都是使用前馈式神经网络(如图1),该神经网络学习一个从固定大小输入(比如输入是一张图)到固定大小输出(例如,到不同类别的概率)的映射。

从第一层到下一层,计算前一层神经元输入数据的权值的和,然后把这个和传给一个非线性激活函数。