simple algebras

- Format: PDF

- Size: 135.22 KB

- Pages: 13

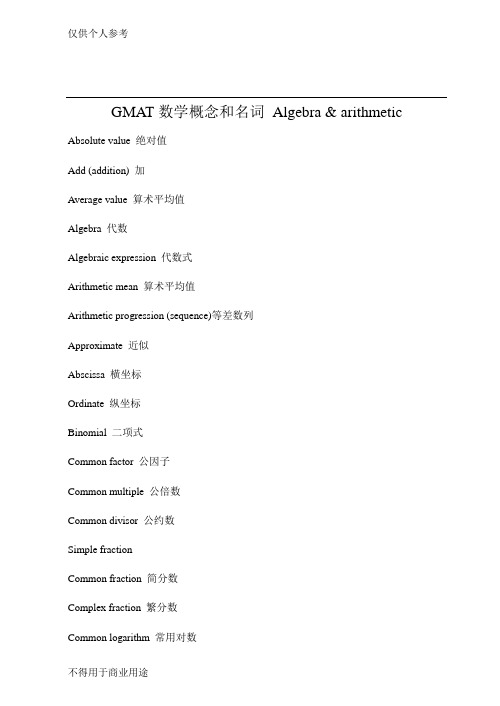

GMAT数学概念和名词Algebra & arithmetic Absolute value 绝对值Add (addition) 加Average value 算术平均值Algebra 代数Algebraic expression 代数式Arithmetic mean 算术平均值Arithmetic progression (sequence)等差数列Approximate 近似Abscissa 横坐标Ordinate 纵坐标Binomial 二项式Common factor 公因子Common multiple 公倍数Common divisor 公约数Simple fractionCommon fraction 简分数Complex fraction 繁分数Common logarithm 常用对数Common ratio 公比Complex number 复数Complex conjugate 复共轭Composite number 合数Prime number 质数Consecutive number 连续整数Consecutive even(odd) integer 连续偶(奇)数Cross multiply 交叉相乘Coefficient 系数Complete quadratic equation 完全二次方程Complementary function 余函数Constant 常数Coordinate system 坐标系Decimal 小数Decimal point 小数点Decimal fraction 纯小数Decimal arithmetic 十进制运算Decimal system/decimal scale 十进制Denominator 分母Difference 差Direct proportion 正比Divide 除Divided evenly 被整除Differential 微分Distinct 不同的Dividend 被除数,红利Division 除法Division sign 除号Divisor 因子,除数Divisible 可被整除的Equivalent fractions 等值分数Equivalent equation 等价方程式Equivalence relation 等价关系Even integer/number 偶数Exponent 指数,幂Equation 方程Equation of the first degree 一次方程Endpoint 端点Estimation 近似Factor 因子Factorable quadratic equation 可因式分解的二次方程Incomplete quadratic equation 不完全二次方程Factorial 阶乘Factorization 因式分解Geometric mean 几何平均数Graph theory 图论Inequality 不等式Improper fraction 假分数Infinite decimal 无穷小数Inverse proportion 反比Irrational number 无理数Infinitesimal calculus 微积分Infinity 无穷大Infinitesimal 无穷小Integerable 可积分的Integral 积分Integral domain 整域Integrand 被积函数Integrating factor 积分因子Inverse function 反函数Inverse/reciprocal 倒数Least common denominator 最小公分母Least common multiple 最小公倍数Literal coefficient 字母系数Like terms 同类项Linear 线*的Minuend 被减数Subtrahend 被减数Mixed decimal 混合小数Mixed number 带分数Minor 子行列式Multiplicand 被乘数Multiplication 乘法Multiplier 乘数Monomial 单项式Mean 平均数Mode 众数Median 中数Negative (positive) number 负(正)数Numerator 分子Null set (empty set) 空集Number theory 数论Number line 数轴Numerical analysis 数值分析Natural logarithm 自然对数Natural number 自然数Nonnegative 非负数Original equation 原方程Ordinary scale 十进制Ordinal 序数Percentage 
百分比Parentheses 括号Polynomial 多项式Power 乘方Product 积Proper fraction 真分数Proportion 比例Permutation 排列Proper subset 真子集Prime factor 质因子Progression 数列Quadrant 象限Quadratic equation 二次方程Quarter 四分之一Ratio 比率Real number 实数Round off 四舍五入Round to 四舍五入Root 根Radical sign 根号Root sign 根号Recurring decimal 循环小数Sequence 数列Similar terms 同类项Tens 十位Tenths 十分位Trinomial 三相式Units 个位Unit 单位Weighted average 加权平均值Union 并集Yard 码Whole number 整数Mutually exclusive 互相排斥Independent events 相互独立事件Probability 概率Combination 组合Standard deviation 标准方差Range 值域Frequency distribution 频率分布Domain 定义域Bar graph 柱图Geometry terms:Angle bisector 角平分线Adjacent angle 邻角Alternate angel 内错角Acute angle 锐角Obtuse angle 钝角Bisect 角平分线Adjacent vertices 相邻顶点Arc 弧Altitude 高Arm 直角三角形的股Complex plane 复平面Convex (concave) polygon 凸(凹)多边形Complementary angle 余角Cube 立方体Central angle 圆心角Circle 圆Clockwise 顺时钟方向Counterclockwise 逆时钟方向Chord 弦Circular cylinder 圆柱体Congruent 全等的Corresponding angle 同位角Circumference (perimeter) 周长Concentric circles 同心圆Circle graph 扇面图Cone (V =pai * r^2 * h/3) 圆锥Circumscribe 外切Inscribe 内切Diagonal 对角线Decagon 十边形Hexagon 六边形Nonagon 九边形Octagon 八边形Pentagon 五边形Quadrilateral 四边形Polygon 多边形Diameter 直径Edge 棱Equilateral triangle 等边三角形Exterior (interior) angle 外角/内角Extent 维数Exterior angles on the same side of the transversal同旁外角Hypotenuse 三角形的斜边Intercept 截距Included angle 夹角Intersect 相交Inscribed triangle 内接三角形Isosceles triangle 等腰三角形Midpoint 中点Minor axis 短轴Origin 原点Oblique 斜三角形Plane geometry 平面几何Oblateness (ellipse) 椭圆Parallelogram 平行四边形Parallel lines 平行线Perpendicular 垂直的Pythagorean theorem 勾股定理Pie chart 扇图Quadrihedron 三角锥Radius 半径Rectangle 长方形Regular polygon 正多边形Rhombus 菱形Right circular cylinder 直圆柱体Right triangle 直角三角形Right angle 直角Rectangular solid 正多面体Regular prism 正棱柱Regular pyramid 正棱锥Regular solid/polyhedron 正多面体Slope 斜率Sphere ( surface area=4 pai r^2, V=4 pai r^3 / 3) Side 边长Segment of a circle 弧形Semicircle 半圆Solid 立体Square 正方形,平方Straight angle 平角(180度)Supplementary angle 补角Scalene cylinder 斜柱体Scalene triangle 
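不等边三角形; Trapezoid 梯形; Volume 体积; Width 宽; Vertical angle 对顶角

Word problem terms: Apiece 每人; Per capita 每人; Decrease to 减少到; Decrease by 减少了; Brace 双; Cardinal 基数; Cent 美分; Nickel 五美分; Dime 一角; Penny 一美分; Down payment 定金,预付金; Simple interest 单利; Compounded interest 复利; Foot 英尺; Dozen 打; Gross = 12 dozen 罗; Gallon = 4 quarts 加仑; Fahrenheit 华氏温度; Depth 深度; Discount 折扣; Cumulative graph 累计图; Interest 利息; Margin 利润; Profit 利润; Retail price 零售价; Pint 品脱; Score 二十; Common year 平年; Intercalary year (leap year) 闰年; Quart 夸脱

The first quartile is the median of all the values below the median. Equivalently, sort all the samples and split them from start to end into four groups of equal size; the first quartile is the last element of the first group or the first element of the second group. In a problem with 50 numbers, the median is the 25th or 26th value; likewise the first quartile is the 12th or 13th value and the third quartile is the 37th or 38th. Whether it is the 37th or the 38th will not matter on the GRE: the two values will be equal.

Notes on quartiles:

Quartile (四分位数):
The 0th quartile is what is usually called the minimum.
The 1st quartile.
The 2nd quartile is what is usually called the median.
The 3rd quartile.
The 4th quartile is what is usually called the maximum.

Most readers know how to find all of these except the 1st and 3rd quartiles, which take more work. Taking the 1st quartile as the example, let n be the number of samples. The 1st quartile can be found as follows:
(1) Sort the n numbers in ascending order and compute (n-1)/4; let the quotient be i and the remainder be j.
(2) The 1st quartile is then (the (i+1)-th number)*(4-j)/4 + (the (i+2)-th number)*j/4.

Examples (already sorted):
1. Sequence {5}, one sample: (1-1)/4 has quotient 0 and remainder 0, so 1st = (1st number)*4/4 + (2nd number)*0/4 = 5.
2. Sequence {1,4}, two samples: (2-1)/4 has quotient 0 and remainder 1, so 1st = (1st number)*3/4 + (2nd number)*1/4 = 1.75.
3. Sequence {1,5,7}, three samples: (3-1)/4 has quotient 0 and remainder 2, so 1st = (1st number)*2/4 + (2nd number)*2/4 = 3.
4. Sequence {1,3,6,10}, four samples: (4-1)/4 has quotient 0 and remainder 3, so 1st = (1st number)*1/4 + (2nd number)*3/4 = 2.5.
5. Other cases follow the same pattern.

Because the 3rd quartile is positioned symmetrically to the 1st, sort the sequence in descending order (that is, reverse it) and apply the 1st-quartile formula. Examples (the same sequences as above, reversed):
1. {5}: 3rd = 5.
2. {4,1}: 3rd = 4*3/4 + 1*1/4 = 3.25.
3. {7,5,1}: 3rd = 7*2/4 + 5*2/4 = 6.
4. {10,6,3,1}: 3rd = 10*1/4 + 6*3/4 = 7.

ETS explicitly lists the percentile as a required statistic. I do not know whether any fellow test-takers have met a percentile question, but since computing a percentile is fairly involved, I describe the method in detail here for reference. Percentile: "percent below". The bare definition is of little practical use and is easy to get confused by, so I have distilled it into a formula for reference.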
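The two-step quartile procedure described above (quotient i and remainder j of (n-1)/4, then a weighted average of the (i+1)-th and (i+2)-th sorted values) can be sketched in Python. This is only an illustration of that interpolation rule; the function names are hypothetical, and other textbooks and software use different quartile conventions that can give different values.

```python
from fractions import Fraction


def _quartile_from_ordered(xs):
    """Apply the (n-1)/4 rule to a list that is already ordered."""
    if not xs:
        raise ValueError("need at least one sample")
    # i is the quotient and j the remainder of (n - 1) / 4.
    i, j = divmod(len(xs) - 1, 4)
    lower = xs[i]                      # the (i+1)-th number, 1-based
    # The (i+2)-th number only matters when its weight j/4 is non-zero.
    upper = xs[i + 1] if j else lower
    return Fraction(lower) * Fraction(4 - j, 4) + Fraction(upper) * Fraction(j, 4)


def first_quartile(values):
    """1st quartile: apply the rule to the ascending order."""
    return _quartile_from_ordered(sorted(values))


def third_quartile(values):
    """3rd quartile: apply the same rule to the descending order."""
    return _quartile_from_ordered(sorted(values, reverse=True))


for sample in ([5], [1, 4], [1, 5, 7], [1, 3, 6, 10]):
    print(sample, float(first_quartile(sample)), float(third_quartile(sample)))
```

Exact fractions are used so that the weights (4-j)/4 and j/4 introduce no rounding; convert with `float(...)` for display.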

Trace (linear algebra)

In linear algebra, the trace of an n-by-n square matrix A is defined to be the sum of the elements on the main diagonal (the diagonal from the upper left to the lower right) of A, i.e.,

$$\operatorname{tr}(A) = a_{11} + a_{22} + \dots + a_{nn} = \sum_{i=1}^{n} a_{ii},$$

where $a_{ii}$ represents the entry on the i-th row and i-th column of A. Equivalently, the trace of a matrix is the sum of its eigenvalues, making it an invariant with respect to a change of basis. This characterization can be used to define the trace for a linear operator in general. Note that the trace is only defined for a square matrix (i.e. n×n). Geometrically, the trace can be interpreted as the infinitesimal change in volume (as the derivative of the determinant), which is made precise in Jacobi's formula.

The use of the term trace arises from the German term Spur (cognate with the English spoor), which, as a function in mathematics, is often abbreviated to "Sp".

Examples

Let T be a linear operator represented by a 3×3 matrix whose diagonal entries are −2, 1 and −1. Then tr(T) = −2 + 1 − 1 = −2.

The trace of the identity matrix is the dimension of the space; this leads to generalizations of dimension using trace. The trace of a projection (i.e., $P^2 = P$) is the rank of the projection. The trace of a nilpotent matrix is zero. The product of a symmetric matrix and a skew-symmetric matrix has zero trace.

More generally, if $f(x) = (x - \lambda_1)^{d_1} \cdots (x - \lambda_k)^{d_k}$ is the characteristic polynomial of a matrix A, then

$$\operatorname{tr}(A) = d_1 \lambda_1 + \dots + d_k \lambda_k.$$

If A and B are positive semi-definite matrices of the same order then[1]

$$0 \le \operatorname{tr}(AB) \le \operatorname{tr}(A)\operatorname{tr}(B).$$

Properties

The trace is a linear map. That is,

$$\operatorname{tr}(A + B) = \operatorname{tr}(A) + \operatorname{tr}(B), \qquad \operatorname{tr}(cA) = c\,\operatorname{tr}(A)$$

for all square matrices A and B, and all scalars c.

If A is an m×n matrix and B is an n×m matrix, then[2]

$$\operatorname{tr}(AB) = \operatorname{tr}(BA).$$

Conversely, the above properties characterize the trace completely in the following sense. Let $f$ be a linear functional on the space of square matrices satisfying $f(xy) = f(yx)$. Then $f$ and tr are proportional.[3]

The trace is similarity-invariant, which means that A and $P^{-1}AP$ have the same trace.
This is because

$$\operatorname{tr}(P^{-1}AP) = \operatorname{tr}\big(P^{-1}(AP)\big) = \operatorname{tr}\big((AP)P^{-1}\big) = \operatorname{tr}(A).$$

A matrix and its transpose have the same trace: $\operatorname{tr}(A) = \operatorname{tr}(A^{\mathrm T})$.

Let A be a symmetric matrix, and B an anti-symmetric matrix. Then $\operatorname{tr}(AB) = 0$.

When both A and B are n by n, the trace of the (ring-theoretic) commutator of A and B vanishes: tr([A, B]) = 0; one can state this as "the trace is a map of Lie algebras from operators to scalars", as the commutator of scalars is trivial (it is an abelian Lie algebra). In particular, using similarity invariance, it follows that the identity matrix is never similar to the commutator of any pair of matrices.

Conversely, any square matrix with zero trace is the commutator of some pair of matrices.[4] Moreover, any square matrix with zero trace is unitarily equivalent to a square matrix with diagonal consisting of all zeros.

The trace of any power of a nilpotent matrix is zero. When the characteristic of the base field is zero, the converse also holds: if $\operatorname{tr}(x^k) = 0$ for all $k$, then $x$ is nilpotent.

Note that order does matter in taking traces: in general,

$$\operatorname{tr}(ABC) \neq \operatorname{tr}(ACB).$$

In other words, we can only interchange the two halves of the expression, albeit repeatedly. This means that the trace is invariant under cyclic permutations, i.e.,

$$\operatorname{tr}(ABCD) = \operatorname{tr}(BCDA) = \operatorname{tr}(CDAB) = \operatorname{tr}(DABC).$$

This is known as the cyclic property. However, if products of three symmetric matrices are considered, any permutation is allowed. (Proof: $\operatorname{tr}(ABC) = \operatorname{tr}(A^{\mathrm T} B^{\mathrm T} C^{\mathrm T}) = \operatorname{tr}((CBA)^{\mathrm T}) = \operatorname{tr}(CBA)$.) For more than three factors this is not true.

Unlike the determinant, the trace of the product is not the product of traces.
What is true is that the trace of the tensor product of two matrices is the product of their traces:

$$\operatorname{tr}(X \otimes Y) = \operatorname{tr}(X)\,\operatorname{tr}(Y).$$

The trace of a product can be rewritten as the sum of all elements from a Hadamard product (entry-wise product):

$$\operatorname{tr}(X^{\mathrm T} Y) = \sum_{i,j} (X \circ Y)_{ij}.$$

This should be more computationally efficient, since the matrix product of an n×m matrix with an m×n one (first and last dimensions must match to give a square matrix for the trace) has $n^2 m$ multiplications and $n^2 (m-1)$ additions, whereas the computation of the Hadamard version (entry-wise product) requires only $nm$ multiplications followed by $nm - 1$ additions.

The exponential trace

Expressions like $\exp(\operatorname{tr}(A))$, where A is a square matrix, occur so often in some fields (e.g. multivariate statistical theory), that a shorthand notation has become common:

$$\operatorname{etr}(A) := \exp(\operatorname{tr}(A)).$$

This is sometimes referred to as the exponential trace function.

Trace of a linear operator

Given some linear map f : V → V (V is a finite-dimensional vector space), we can define the trace of this map by considering the trace of a matrix representation of f, that is, choosing a basis for V and describing f as a matrix relative to this basis, and taking the trace of this square matrix. The result will not depend on the basis chosen, since different bases will give rise to similar matrices, allowing for the possibility of a basis-independent definition for the trace of a linear map.

Such a definition can be given using the canonical isomorphism between the space End(V) of linear maps on V and V ⊗ V*, where V* is the dual space of V. Let v be in V and let f be in V*. Then the trace of the decomposable element v ⊗ f is defined to be f(v); the trace of a general element is defined by linearity.
Using an explicit basis for V and the corresponding dual basis for V*, one can show that this gives the same definition of the trace as given above.

Eigenvalue relationships

If A is a square n-by-n matrix with real or complex entries and if $\lambda_1, \dots, \lambda_n$ are the (complex) eigenvalues of A (listed according to their algebraic multiplicities), then

$$\operatorname{tr}(A) = \sum_{i} \lambda_i.$$

This follows from the fact that A is always similar to its Jordan form, an upper triangular matrix having $\lambda_1, \dots, \lambda_n$ on the main diagonal. In contrast, the determinant of A is the product of its eigenvalues; i.e.,

$$\det(A) = \prod_{i} \lambda_i.$$

More generally,

$$\operatorname{tr}(A^k) = \sum_{i} \lambda_i^k.$$

Derivatives

The trace is the derivative of the determinant: it is the Lie algebra analog of the (Lie group) map of the determinant. This is made precise in Jacobi's formula for the derivative of the determinant (see under determinant). As a particular case, $\operatorname{tr} = \det'_I$: the trace is the derivative of the determinant at the identity. From this (or from the connection between the trace and the eigenvalues), one can derive a connection between the trace function, the exponential map between a Lie algebra and its Lie group (or concretely, the matrix exponential function), and the determinant: det(exp(A)) = exp(tr(A)).

For example, consider the one-parameter family of linear transformations given by rotation through angle θ,

$$R_\theta = \begin{pmatrix} \cos\theta & -\sin\theta \\ \sin\theta & \cos\theta \end{pmatrix}.$$

These transformations all have determinant 1, so they preserve area. The derivative of this family at θ = 0 is the antisymmetric matrix

$$A = \begin{pmatrix} 0 & -1 \\ 1 & 0 \end{pmatrix},$$

which clearly has trace zero, indicating that this matrix represents an infinitesimal transformation which preserves area.

A related characterization of the trace applies to linear vector fields. Given a matrix A, define a vector field F on $\mathbb{R}^n$ by F(x) = Ax. The components of this vector field are linear functions (given by the rows of A). The divergence div F is a constant function, whose value is equal to tr(A).
By the divergence theorem, one can interpret this in terms of flows: if F(x) represents the velocity of a fluid at the location x, and U is a region in $\mathbb{R}^n$, the net flow of the fluid out of U is given by tr(A) · vol(U), where vol(U) is the volume of U.

The trace is a linear operator, hence its derivative is constant:

$$d\,\operatorname{tr}(X) = \operatorname{tr}(dX).$$

Applications

The trace is used to define characters of group representations. Two representations $A, B : G \to GL(V)$ of a group $G$ are equivalent (up to change of basis on $V$) if $\operatorname{tr} A(g) = \operatorname{tr} B(g)$ for all $g \in G$.

The trace also plays a central role in the distribution of quadratic forms.

Lie algebra

The trace is a map of Lie algebras $\operatorname{tr} : \mathfrak{gl}_n \to k$ from the Lie algebra $\mathfrak{gl}_n$ of operators on an n-dimensional space (n×n matrices) to the Lie algebra k of scalars; as k is abelian (the Lie bracket vanishes), the fact that this is a map of Lie algebras is exactly the statement that the trace of a bracket vanishes:

$$\operatorname{tr}([A, B]) = 0.$$

A matrix in the kernel of this map, that is, a matrix whose trace is zero, is said to be traceless or tracefree, and these matrices form the simple Lie algebra $\mathfrak{sl}_n$, which is the Lie algebra of the special linear group of matrices with determinant 1. The special linear group consists of the matrices which do not change volume, while the special linear algebra consists of the matrices which infinitesimally do not change volume.

In fact, there is an internal direct sum decomposition $\mathfrak{gl}_n = \mathfrak{sl}_n \oplus k$ of operators/matrices into traceless operators/matrices and scalar operators/matrices. The projection map onto scalar operators can be expressed in terms of the trace, concretely as:

$$A \mapsto \frac{1}{n}\operatorname{tr}(A)\, I.$$

Formally, one can compose the trace (the counit map) with the unit map $k \to \mathfrak{gl}_n$ of "inclusion of scalars" to obtain a map $\mathfrak{gl}_n \to \mathfrak{gl}_n$ mapping onto scalars, and multiplying by n. Dividing by n makes this a projection, yielding the formula above.

In terms of short exact sequences, one has

$$0 \to \mathfrak{sl}_n \to \mathfrak{gl}_n \xrightarrow{\ \operatorname{tr}\ } k \to 0,$$

which is analogous to

$$1 \to SL_n \to GL_n \xrightarrow{\ \det\ } K^* \to 1$$

for Lie groups.
However, the trace splits naturally (via $1/n$ times scalars) so $\mathfrak{gl}_n = \mathfrak{sl}_n \oplus k$, but the splitting of the determinant would be as the n-th root times scalars, and this does not in general define a function, so the determinant does not split and the general linear group does not decompose:

$$GL_n \neq SL_n \times K^*.$$

Bilinear forms

The bilinear form

$$B(x, y) = \operatorname{tr}(\operatorname{ad}(x)\operatorname{ad}(y)), \quad \text{where } \operatorname{ad}(x)y = [x, y],$$

is called the Killing form, which is used for the classification of Lie algebras.

The trace defines a bilinear form:

$$(x, y) \mapsto \operatorname{tr}(xy)$$

(x, y square matrices).

The form is symmetric, non-degenerate[5] and associative in the sense that:

$$\operatorname{tr}(x[y, z]) = \operatorname{tr}([x, y]z).$$

In a simple Lie algebra (e.g., $\mathfrak{sl}_n$), every such bilinear form is proportional to each other; in particular, to the Killing form.

Two matrices x and y are said to be trace orthogonal if

$$\operatorname{tr}(xy) = 0.$$

Inner product

For an m-by-n matrix A with complex (or real) entries and * being the conjugate transpose, we have

$$\operatorname{tr}(A^* A) \ge 0,$$

with equality if and only if A = 0. The assignment

$$\langle A, B \rangle = \operatorname{tr}(A^* B)$$

yields an inner product on the space of all complex (or real) m-by-n matrices.

The norm induced by the above inner product is called the Frobenius norm. Indeed it is simply the Euclidean norm if the matrix is considered as a vector of length mn.

Generalization

The concept of trace of a matrix is generalised to the trace class of compact operators on Hilbert spaces, and the analog of the Frobenius norm is called the Hilbert-Schmidt norm.

The partial trace is another generalization of the trace that is operator-valued.

If A is a general associative algebra over a field k, then a trace on A is often defined to be any map tr: A → k which vanishes on commutators: tr([a, b]) = 0 for all a, b in A. Such a trace is not uniquely defined; it can always at least be modified by multiplication by a nonzero scalar.

A supertrace is the generalization of a trace to the setting of superalgebras.

The operation of tensor contraction generalizes the trace to arbitrary tensors.

Coordinate-free definition

We can identify the space of linear operators on a vector space V with the space $V \otimes V^*$, where $V^* = \operatorname{Hom}(V, F)$.
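We also have a canonical bilinear function $t : V \times V^* \to F$ that consists of applying an element $w^*$ of $V^*$ to an element $v$ of $V$ to get an element of $F$, in symbols

$$t(v, w^*) := w^*(v) \in F.$$

This induces a linear function on the tensor product (by its universal property) which, as it turns out, when that tensor product is viewed as the space of operators, is equal to the trace.

This also clarifies why $\operatorname{tr}(AB) = \operatorname{tr}(BA)$ and why $\operatorname{tr}(AB) \neq \operatorname{tr}(A)\operatorname{tr}(B)$, as composition of operators (multiplication of matrices) and trace can be interpreted as the same pairing. Viewing $\operatorname{End}(V) \cong V \otimes V^*$, one may interpret the composition map $\operatorname{End}(V) \times \operatorname{End}(V) \to \operatorname{End}(V)$ as

$$(V \otimes V^*) \times (V \otimes V^*) \to (V \otimes V^*),$$

coming from the pairing $V^* \times V \to F$ on the middle terms. Taking the trace of the product then comes from pairing on the outer terms, while taking the product in the opposite order and then taking the trace just switches which pairing is applied first. On the other hand, taking the trace of A and the trace of B corresponds to applying the pairing on the left terms and on the right terms (rather than on inner and outer), and is thus different.

In coordinates, this corresponds to indexes: multiplication is given by $(AB)_{ik} = \sum_j a_{ij} b_{jk}$, so

$$\operatorname{tr}(AB) = \sum_{i,j} a_{ij} b_{ji} \quad \text{and} \quad \operatorname{tr}(BA) = \sum_{i,j} b_{ij} a_{ji},$$

which is the same, while

$$\operatorname{tr}(A) \cdot \operatorname{tr}(B) = \sum_i a_{ii} \cdot \sum_j b_{jj},$$

which is different.

For V finite-dimensional, with basis $\{e_i\}$ and dual basis $\{e^i\}$, $e_i \otimes e^j$ is the $ij$-entry of the matrix of the operator with respect to that basis. Any operator $A$ is therefore a sum of the form $A = a_{ij}\, e_i \otimes e^j$ (summing over repeated indices). With $t$ defined as above, $t(A) = a_{ij}\, e^j(e_i)$. The latter factor, however, is just the Kronecker delta, being 1 if i = j and 0 otherwise. This shows that $t(A)$ is simply the sum of the coefficients along the diagonal. This method, however, makes coordinate invariance an immediate consequence of the definition.

Dual

Further, one may dualize this map, obtaining a map $F^* = F \to V \otimes V^* \cong \operatorname{End}(V)$. This map is precisely the inclusion of scalars, sending $1 \in F$ to the identity matrix: "trace is dual to scalars".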
In the language of bialgebras, scalars are the unit, while trace is the counit.

One can then compose these, $F \to \operatorname{End}(V) \xrightarrow{\ \operatorname{tr}\ } F$, which yields multiplication by n, as the trace of the identity is the dimension of the vector space.

Notes

[1] Can be proven with the Cauchy-Schwarz inequality.

[2] This is immediate from the definition of matrix multiplication.

[3] Proof: $f(e_{ij}) = 0$ if and only if $i \neq j$, and $f(e_{jj}) = f(e_{11})$ (with the standard basis $e_{ij}$), and thus

$$f(A) = \sum_{i,j} [A]_{ij}\, f(e_{ij}) = \sum_i [A]_{ii}\, f(e_{11}) = f(e_{11})\operatorname{tr}(A).$$

More abstractly, this corresponds to the decomposition $\mathfrak{gl}_n = \mathfrak{sl}_n \oplus k$, as tr(AB) = tr(BA) (equivalently, tr([A, B]) = 0) defines the trace on $\mathfrak{sl}_n$, which has complement the scalar matrices, and leaves one degree of freedom: any such map is determined by its value on scalars, which is one scalar parameter, and hence all are multiples of the trace, a non-zero such map.

[4] Proof: $\mathfrak{sl}_n$ is a semisimple Lie algebra and thus every element in it is the commutator of some pair of elements, otherwise the derived algebra would be a proper ideal.

[5] This follows from the fact that $\operatorname{tr}(A^* A) = 0$ if and only if $A = 0$.

Article Sources and Contributors

Trace (linear algebra) Source: /w/index.php?oldid=402560193 Contributors: Achab, Adiel, Aetheling, Algebraist, Archelon, AxelBoldt, BenFrantzDale, Berland, Btyner, CYD, Calc rulz, CattleGirl, Charles Matthews, Chochopk, Dima373, Dysprosia, Edinborgarstefan, Egriffin, Email4mobile, Eranb, Eric Olson, Fropuff, Ged.R, Giftlite, Haseldon, JabberWok, Japanese Searobin, Jewbacca, Jshadias, Kaarebrandt, Kan8eDie, Katzmik, Keyi, Kruusamägi, Ksyrie, Laurentius, Lethe, Lukpank, MER-C, MarSch, Mct mht, Melchoir, Michael Hardy, Mon4, Nathanielvirgo, Nbarth, Nineteen O'Clock, Octahedron80, Oleg Alexandrov, Oyz, Phe, Phys, Pokipsy76, Pt, Robinh, Salgueiro, Saretakis, Sciyoshi, Spiel496, Spireguy, StradivariusTV, Sullivan.t.j, TakuyaMurata, Tarquin, Tercer, Tffff, Thehotelambush, Tsirel, V1adis1av, Whaa?, WhiteHatLurker, Wpoely86, Wshun, 84 anonymous edits

License

Creative Commons Attribution-Share Alike 3.0 Unported /licenses/by-sa/3.0/
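Several identities stated in the trace article above (tr(AB) = tr(BA) for rectangular factors, the cyclic property, similarity invariance, and the Hadamard-product form of the trace of a product) can be spot-checked on small integer matrices. The sketch below uses plain Python lists so the arithmetic is exact; the helper names and the sample matrices are illustrative choices, not part of the article.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def transpose(a):
    """Return the transpose as a list of rows."""
    return [list(col) for col in zip(*a)]


def trace(a):
    """Sum of the main-diagonal entries of a square matrix."""
    if any(len(row) != len(a) for row in a):
        raise ValueError("trace is only defined for square matrices")
    return sum(a[i][i] for i in range(len(a)))


def hadamard_sum(x, y):
    """Sum of all entries of the entry-wise product of two same-shaped matrices."""
    return sum(p * q for rx, ry in zip(x, y) for p, q in zip(rx, ry))


A = [[1, 2], [3, 4]]
B = [[0, 1], [1, 0]]
C = [[1, 0], [0, 2]]
X = [[1, 2, 3], [4, 5, 6]]   # 2 x 3
Y = [[1, 0, 2], [0, 1, 1]]   # 2 x 3
P = [[1, 1], [0, 1]]
P_INV = [[1, -1], [0, 1]]    # inverse of P

# tr(AB) = tr(BA) even when the two products have different sizes.
print(trace(matmul(X, transpose(Y))), trace(matmul(transpose(Y), X)))

# Cyclic permutations keep the trace; an arbitrary permutation need not.
print(trace(matmul(matmul(A, B), C)),
      trace(matmul(matmul(B, C), A)),
      trace(matmul(matmul(A, C), B)))

# Similarity invariance: tr(P^-1 A P) = tr(A).
print(trace(matmul(matmul(P_INV, A), P)), trace(A))

# tr(X^T Y) equals the sum of the entries of the Hadamard product.
print(trace(matmul(transpose(X), Y)), hadamard_sum(X, Y))
```

The last check avoids forming the 3×3 product at all, which is the efficiency point made in the article.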

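I. Introduction

Modelica is an open modeling language for physical modeling and engineering simulation. The "simple algorithm" is described here as one of the algorithms commonly used with it.

This article introduces the basic principle of the simple algorithm, its application scenarios, and its advantages and disadvantages.

II. Basic principle of the simple algorithm

The simple algorithm is a basic implicit numerical integration method: it obtains a numerical solution of a differential equation by iteration.

Its basic principle is as follows:
1. Discretize the differential equation, turning it into a difference equation.
2. Starting from the initial conditions, solve the difference equation numerically by an iterative method.
3. Check whether the accuracy of the numerical solution meets the requirement; if not, keep iterating until it does.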
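The three steps above (discretize, iterate, check accuracy) can be illustrated with a generic implicit scheme. The sketch below uses the backward Euler discretization and solves each implicit step by fixed-point iteration with a tolerance check. Both choices are assumptions made for illustration, since the text does not name a specific discretization or iteration; this is not the implementation used by Modelica or by any particular simulation tool, and all function and parameter names are hypothetical.

```python
import math


def implicit_euler_step(f, t_next, y_prev, h, tol=1e-12, max_iter=100):
    """Solve y = y_prev + h * f(t_next, y) for y by fixed-point iteration."""
    y = y_prev  # initial guess: the previous value
    for _ in range(max_iter):
        y_new = y_prev + h * f(t_next, y)   # step 2: iterate the difference equation
        if abs(y_new - y) < tol:            # step 3: accuracy check
            return y_new
        y = y_new
    raise RuntimeError("fixed-point iteration did not converge; try a smaller step h")


def integrate(f, y0, t0, t_end, steps):
    """Step 1: discretize [t0, t_end] into equal steps and march forward."""
    h = (t_end - t0) / steps
    y = y0
    for n in range(steps):
        y = implicit_euler_step(f, t0 + (n + 1) * h, y, h)
    return y


# Test problem y' = -2 y, y(0) = 1, with exact solution exp(-2 t).
approx = integrate(lambda t, y: -2.0 * y, 1.0, 0.0, 1.0, 100)
print(approx, math.exp(-2.0))
```

Fixed-point iteration only converges when the step h is small relative to the sensitivity of f to y, which is one concrete way the slow convergence and stiffness limitations discussed in this article can show up; production solvers typically use Newton-type iterations instead.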

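The solution process of the simple algorithm is fairly intuitive and easy to understand and implement, so it is widely used in some engineering simulation software.

III. Application scenarios of the simple algorithm

The simple algorithm is suited to simulating relatively simple dynamic systems, in particular non-stiff systems and nonlinear systems.

Because its iteration is fairly stable, the simple algorithm can provide reasonably reliable numerical solutions for differential equations that are otherwise difficult to solve.

The simple algorithm is widely applied in fields such as power systems, control systems and thermal systems.

These systems usually involve complicated differential equations, and the simple algorithm can provide reasonably accurate numerical solutions, giving important support to engineering design and analysis.

IV. Advantages and disadvantages of the simple algorithm

As a commonly used numerical integration method, the simple algorithm has the following advantages and disadvantages:

1. Advantages:
(1) Easy to implement: the iteration is relatively simple and easy to understand and implement.
(2) Good stability: the iteration is relatively stable, which makes the method usable for some complicated differential equations.

2. Disadvantages:
(1) Slow convergence: the method needs many iterations, so it converges slowly.
(2) Poor suitability for stiff and high-order systems: when handling some stiff or high-order systems, the method may become numerically unstable.

In summary, the simple algorithm is a commonly used numerical integration method that suits the simulation of relatively simple dynamic systems. It is easy to implement and fairly stable, but it is limited in convergence speed and in how well it handles complex systems.

V. Conclusion

As a commonly used algorithm in the context of the Modelica modeling language, the simple algorithm is valuable in engineering simulation and system analysis.

A solid understanding of its basic principle and application scenarios makes it easier to use the algorithm for system modeling and simulation, and to obtain reliable numerical support for engineering design and analysis.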

(0,2) 插值||(0,2) interpolation

0#||zero-sharp; read as "零井" or "零开".

0+||zero-dagger; read as "零正".

1-因子||1-factor

3-流形||3-manifold; also called "三维流形" (three-dimensional manifold).

AIC准则||AIC criterion, Akaike information criterion

Ap 权||Ap-weight

A稳定性||A-stability, absolute stability

A最优设计||A-optimal design

BCH 码||BCH code, Bose-Chaudhuri-Hocquenghem code

BIC准则||BIC criterion, Bayesian modification of the AIC

BMOA函数||analytic function of bounded mean oscillation; full name "有界平均振动解析函数".

BMO鞅||BMO martingale

BSD猜想||Birch and Swinnerton-Dyer conjecture; full name "伯奇与斯温纳顿-戴尔猜想".

B样条||B-spline

C*代数||C*-algebra; read as "C星代数" (C-star algebra).

C0 类函数||function of class C0; also called "连续函数类" (class of continuous functions).

CAT准则||CAT criterion, criterion for autoregressive

CM域||CM field

CN 群||CN-group

CW 复形的同调||homology of CW complex

CW复形||CW complex

CW复形的同伦群||homotopy group of CW complexes

CW剖分||CW decomposition

Cn 类函数||function of class Cn; also called "n次连续可微函数类" (class of n times continuously differentiable functions).

Cp统计量||Cp-statistic

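Galois cohomology generalizes the cohomology theory of finite groups and is closely connected with the theory of quadratic forms, the theory of central simple algebras, algebraic groups and other branches of mathematics. What follows is a brief introduction to its basic theory and an overview of its applications.

We start with profinite groups. A profinite group is a projective limit of finite groups; equivalently, it is a compact, totally disconnected topological group.

For abelian profinite groups, Pontryagin duality Hom(-, Q/Z) gives a correspondence with torsion abelian groups.

Why consider profinite groups? Because every Galois group is profinite.

Concretely, if Ω/k is a Galois extension, then its Galois group is isomorphic to the projective limit of the Galois groups of all finite Galois subextensions L/k of Ω/k, where the isomorphism is one of topological groups.

We already have the cohomology of abstract groups, so how does the profinite case differ? The main difference is the added topology: the action of G on the G-module A is required to be continuous, which is why the theory is sometimes called continuous cohomology.

It is then natural to ask whether there is a profinite group whose continuous cohomology differs from its cohomology as an abstract group. For finite groups the two coincide, because the topology of a finite group is discrete. In degree zero the two also coincide, since both are equal to the set of fixed points of the group action.

But take Z^, the profinite completion of Z, acting trivially on Q. The continuous cohomology is H^1(Z^,Q) = lim H^1(Z/n,Q) = 0 (a direct limit), whereas the cohomology of Z^ as an abstract group is Hom(Z^,Q) ≠ 0: because Q is divisible, a nonzero element of Hom(Z,Q) can be extended to Z^.

The algebraic construction of the cohomology of profinite groups is entirely parallel to that for abstract groups, so it is not repeated here.

Next consider the associated cohomology sequence. Given a short exact sequence 1→A→B→C→1 on which the profinite group G acts, we can hope for a long exact sequence

1→H^0(G,A)→H^0(G,B)→H^0(G,C)→H^1(G,A)→H^1(G,B)→H^1(G,C)→H^2(G,A)→…

The precise statement strengthens step by step:
1) If A is merely a subgroup of B, the sequence extends as far as H^1(G,B).
2) If A is a normal subgroup of B, the sequence extends as far as H^1(G,C).
3) If A is a central subgroup of B, the sequence extends as far as H^2(G,A).

Two connecting homomorphisms deserve attention. Write f: A→B for the map in the exact sequence above.
1) δ_0: H^0(G,C)→H^1(G,A). For any c∈C^G, choose a lift b∈B and define δ_0(c)=[α], where f(α_σ)=b^(-1)(σ·b).
2) δ_1: H^1(G,C)→H^2(G,A). For any [γ]∈H^1(G,C), each γ_σ has a lift β_σ∈B; define δ_1([γ])=[α], where f(α_σ,τ)=β_σ(σ·β_τ)(β_στ)^(-1).

The notation looks messy, but it simply records the "obstruction to commuting" introduced by the action of the group element σ. In addition, the first continuous cohomology H^1(G,A) can be interpreted as classifying G-torsors over A (principal homogeneous spaces), that is, G-sets with a simply transitive right A-action compatible with the G-action.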
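As a sanity check on the definition of δ_0 above, the following short computation shows that the assignment σ ↦ b^(-1)(σ·b) satisfies the 1-cocycle condition. It is a sketch under two assumptions that the text does not spell out: A is identified with its image f(A) in B, and the 1-cocycle condition is taken in the usual non-abelian form α_{στ} = α_σ · σ(α_τ).

```latex
% Setup: c \in C^G, b \in B a lift of c, and \alpha_\sigma := b^{-1}\,\sigma(b).
% Since \sigma(c) = c, the image of \alpha_\sigma in C is c^{-1}\sigma(c) = 1,
% so \alpha_\sigma lies in A (identified with f(A) \subseteq B).
\begin{align*}
\alpha_\sigma \cdot \sigma(\alpha_\tau)
  &= b^{-1}\sigma(b) \cdot \sigma\bigl(b^{-1}\tau(b)\bigr) \\
  &= b^{-1}\sigma(b)\,\sigma(b)^{-1}\,(\sigma\tau)(b) \\
  &= b^{-1}\,(\sigma\tau)(b) \\
  &= \alpha_{\sigma\tau}.
\end{align*}
% Changing the lift b to b\,a with a \in A replaces \alpha_\sigma by
% a^{-1}\alpha_\sigma\,\sigma(a), a cohomologous cocycle, so the class
% [\alpha] \in H^1(G, A) does not depend on the choice of lift.
```

The same bookkeeping, applied to the lifts β_σ, is what produces the 2-cocycle in the definition of δ_1 when A is central in B.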

Xiangtan University, Master's Thesis

Automorphisms of Formal Triangular Matrix Rings

Name: ***

Degree applied for: Master

Major: Pure Mathematics

Advisor: ***

Date: 20030501

Abstract

Throughout this paper, we assume that A and B are rings with identities and that the only idempotents in A and B are 1 and 0. Let M be a nonzero left A, right B bimodule. The formal triangular matrix ring Tri(A, M, B) has as its elements the formal matrices $\begin{pmatrix} a & m \\ 0 & b \end{pmatrix}$, where a ∈ A, b ∈ B and m ∈ M. We undertake a further investigation of automorphisms of upper triangular matrices, building on the work of Wai-shun Cheung ([16]), A. Haghany and K. Varadarajan ([1]), You'an Cao ([5]) and Leping Xie et al. ([18]). The main result of this paper is as follows: with A, B and M as defined above, a map φ on Tri(A, M, B) is an automorphism if and only if there are an automorphism g of A, an automorphism h of B, a (g, h)-semilinear automorphism k of M and an element d of M such that φ is given by an explicit formula in terms of g, h, k and d (the formula is illegible in the source).

Keywords: ring, bimodule, automorphism, semilinear automorphism

Automorphisms of Formal Triangular Matrix Rings

Introduction

Maps on algebras and rings have long been an important area of research in pure mathematics. The study of automorphisms and Lie automorphisms of matrix algebras and matrix rings is one part of it, and a great deal of work has been done in this direction.