Near-optimal conversion of hardness into pseudo-randomness


Module 7, Video 12: What are particle-reinforced composites?

Hello! Welcome to Introduction to Materials. Today, we are going to talk about particle-reinforced composites, also called particle or particulate composites.

Particle composites contain reinforcing particles of one or more materials suspended in a matrix of a different material. As with nearly all materials, structure determines properties, and so it is with particle composites. The figure illustrates the geometrical and spatial characteristics of the particles, such as their concentration, size, shape, distribution and orientation. They all contribute to the properties of these materials.

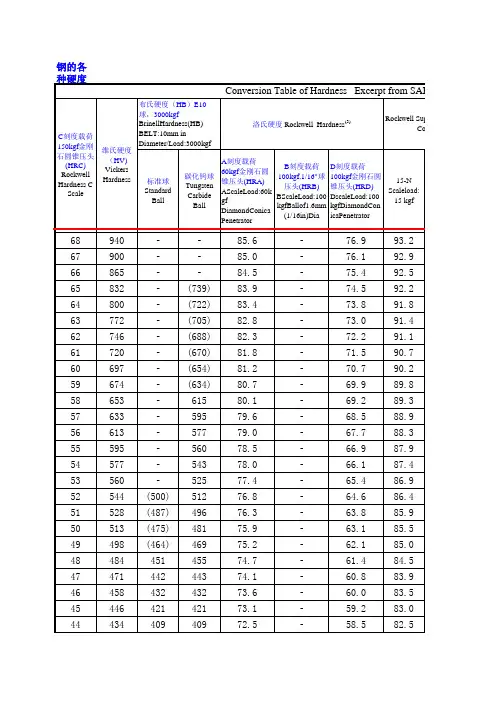

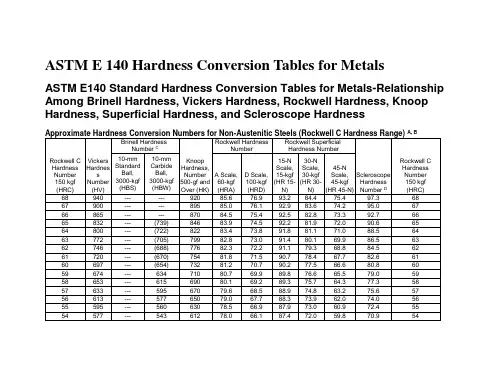

Stainless steel hardness testing methods and related standards (GB, USA, Japan)

Stainless steel products can be classified by delivery form into plate, strip, tube, bar and wire. By metallographic structure they fall into five types: austenitic, ferritic, austenitic-ferritic, martensitic and precipitation-hardening stainless steel. Stainless steel is supplied in various heat-treatment conditions, such as annealed, quenched and tempered, solution-treated, quenched, or tempered.

In a hardness test, a hard indenter is slowly pressed into the surface of the sample under specified conditions, and the depth or size of the indentation is then measured to determine the hardness of the material. Hardness testing is the simplest, fastest and easiest way to test the mechanical properties of a material. Hardness tests are nondestructive, and there is an approximate conversion relationship between hardness and tensile strength. Because tensile testing is less convenient and strength can be estimated from hardness, people increasingly test only the hardness of a material and test its strength less often. In particular, thanks to continuing progress and innovation in hardness tester manufacturing, materials whose hardness could not previously be tested directly, such as thin stainless steel plate, strip, pipe and wire, can now be tested directly. There is therefore a tendency for hardness tests to gradually take the place of tensile tests.

Stainless steel standards generally specify three hardness test methods, Brinell, Rockwell and Vickers, which determine the HB, HRB (or HRC) and HV hardness values. Three hardness values are specified, but only one of them needs to be tested.

Stainless steel hardness testing method

A prominent feature of hardness testing in United States metal material standards is that the Rockwell test takes priority, complemented by the Brinell test. The Vickers test is rarely used, because the United States view is that it should serve mainly for testing thin and small metal parts. Chinese and Japanese standards list all three hardness tests side by side, and users can choose one of them according to the thickness and condition of the material and their own circumstances. The tensile and hardness test requirements in the Japanese stainless steel standards take the same form as those of the corresponding Chinese standards, and the values are similar.

The Rockwell hardness tester is the preferred instrument for stainless steel hardness testing. The equipment is simple and easy to operate, the hardness can be read directly without a specialist inspector, and testing efficiency is high, which makes it well suited to factory use. Stainless steel standards generally specify only two Rockwell scales, HRC and HRB. For annealed stainless steel, the standard generally requires that the hardness of each grade not exceed a certain HRB value, generally in the range 88 to 96 HRB. For quenched and tempered martensitic stainless steel, the standard generally requires that the hardness of each grade be not less than a certain HRC value, generally in the range 32 to 46 HRC.

Although the standards specify only the regular Rockwell HRB and HRC scales, the superficial Rockwell hardness tester can also be used for stainless steel. Its principle is exactly the same as that of the regular Rockwell tester, but it applies a smaller test force, and its hardness values can easily be converted to HRB, HRC, Brinell hardness HB or Vickers hardness HV. The corresponding conversion tables can be found on our company's website; they are derived from the American ASTM standards or the international ISO standards. The superficial Rockwell tester is very convenient for thin-walled stainless steel tube, thin sheet, thin strip, fine wire and similar products. In particular, the company's newly developed portable superficial Rockwell hardness testers and tube Rockwell hardness testers can quickly and accurately test stainless steel plate, strip as thin as 0.05 mm and tube as fine as 4.8 mm, solving problems that were previously difficult to solve in China.

Hardness test of stainless steel plate and stainless steel strip

Stainless steel plate includes hot-rolled plate and cold-rolled sheet. Plate or strip thicker than 1.2 mm is tested with a Rockwell hardness tester on the HRB or HRC scale. Plate or strip with a thickness of 0.2 to 1.2 mm is tested with a superficial Rockwell hardness tester on the HRT or HRN scale. Plate or strip thinner than 0.2 mm is tested with a superficial Rockwell hardness tester fitted with a diamond anvil, giving the HR30Tm hardness. Annealed plate and strip 0.3 to 13 mm thick can also be tested with a Webster hardness tester, which is very fast and simple and well suited to rapid conformity inspection of annealed stainless steel.

Hardness test of stainless steel tube

Stainless steel pipe includes welded pipe and cold-drawn pipe. Pipe with an inner diameter greater than 30 mm and a wall thickness greater than 1.2 mm is tested with a Rockwell hardness tester on the HRB or HRC scale. Pipe with an inner diameter greater than 30 mm and a wall thickness less than 1.2 mm is tested with a superficial Rockwell hardness tester on the HRT or HRN scale. Pipe with a diameter less than 30 mm and greater than 4.8 mm is tested with a Rockwell hardness tester designed for tubes, giving the HR15T hardness. When the inner diameter is greater than 26 mm, the hardness of the inner wall can also be measured with a Rockwell or superficial Rockwell tester. Annealed pipe with a diameter greater than 6.0 mm and a wall thickness under 13 mm can be tested with a W-B75 Webster hardness tester, which is very fast and simple and suited to rapid, nondestructive conformity inspection.

Hardness test of stainless steel bars

Stainless steel bars with a diameter of less than 50 mm can be tested with a Rockwell hardness tester on the HRB or HRC scale.

Hardness test of stainless steel wire

Stainless steel wire with a diameter greater than 2.0 mm can be tested with a superficial Rockwell hardness tester on the HRT or HRN scale.
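The thickness rules for plate and strip given above can be written as a small lookup. This is only a sketch: the function name is hypothetical, and the text does not say which method applies at exactly 0.2 mm or 1.2 mm, so assigning both boundary values to the superficial Rockwell range is an assumption.

```python
def plate_hardness_method(thickness_mm: float) -> str:
    """Suggest a hardness test for stainless steel plate or strip.

    Thresholds follow the text: > 1.2 mm Rockwell, 0.2 to 1.2 mm superficial
    Rockwell, < 0.2 mm superficial Rockwell with a diamond anvil.
    Assumption: the boundary values 0.2 and 1.2 mm fall in the middle range.
    """
    if thickness_mm <= 0:
        raise ValueError("thickness must be positive")
    if thickness_mm > 1.2:
        return "Rockwell (HRB or HRC)"
    if thickness_mm >= 0.2:
        return "superficial Rockwell (HRT or HRN)"
    return "superficial Rockwell with diamond anvil (HR30Tm)"


if __name__ == "__main__":
    for t in (3.0, 0.8, 0.1):
        print(f"{t} mm -> {plate_hardness_method(t)}")
```

The same pattern would extend to tube, bar and wire, with diameter and wall thickness as inputs.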

75%-Efficiency blue generation from an intracavity PPKTP frequency doubler

R. Le Targat, J.-J. Zondy, P. Lemonde*

BNM-SYRTE, Observatoire de Paris, 61 Avenue de l'Observatoire, 75014 Paris, France

Received 23 July 2004; received in revised form 8 November 2004; accepted 22 November 2004

Optics Communications 247 (2005) 471–481. doi:10.1016/j.optcom.2004.11.081. 0030-4018/$ - see front matter © 2004 Elsevier B.V. All rights reserved.

* Corresponding author. Tel.: +33 1 40 51 22 24; fax: +33 1 43 25 55 42. E-mail address: pierre.lemonde@obspm.fr (P. Lemonde).

Abstract

We report on a high-efficiency 461 nm blue light conversion from an external cavity-enhanced second-harmonic generation of a 922 nm diode laser with a quasi-phase-matched KTP crystal (PPKTP). By choosing a long crystal ($L_C = 20$ mm) and twice looser focusing ($w_0 = 43$ µm) than the "optimal" one, thermal lensing effects due to the blue power absorption are minimized while still maintaining near-optimal conversion efficiency. A stable blue power of 234 mW with a net conversion efficiency of $\eta = 75\%$ at an input mode-matched power of 310 mW is obtained. The intra-cavity measurements of the conversion efficiency and temperature tuning bandwidth yield an accurate value $d_{33}(461\ \mathrm{nm}) = 15\ (\pm 5\%)$ pm/V for KTP and provide a stringent validation of some recently published linear and thermo-optic dispersion data of KTP.

PACS: 42.65.Ky; 42.79.Nv; 42.70.Mp

Keywords: Second harmonic generation; PPKTP; Strontium; Thermal effects

1. Introduction

Continuous-wave (CW) high-power blue light generation is a key issue for many applications such as laser printing, RGB color display, or spectroscopy and cooling of atomic species. Due to the limited power and tunability of gas lasers (Ar+, HeCd) or newly developed blue diode laser sources in the blue-UV spectrum [1], the usual procedure is to upconvert near-IR solid-state or semiconductor diode lasers either internal to the laser resonator [2] or in external enhancement resonators [3,4]. In the latter scheme, the efficiency of the upconversion is usually measured in terms of the ratio $\eta = P_{2\omega}/P_\omega^{in}$ of the generated second-harmonic (SH) power to the fundamental field (FF) power which is mode-matched to the resonator. Several nonlinear materials can upconvert such lasers, using either temperature-tuned or angular birefringence phase-matching. The most widely used one is the large-nonlinearity ($d_{\mathrm{eff}} \approx 18$ pm/V) potassium niobate (KNbO3) crystal [5–9]. For temperature-tuned noncritical phase-matching, the major drawback of KNbO3 is the occurrence of a phase transition leading to repoling near $T = 185$ °C [5,6], which restricts upconversion to laser wavelengths longer than $\lambda \approx 920$ nm. For laser cooling on the $^1S_0$–$^1P_1$ strontium line at 461 nm, which is the target of the present work, the use of a non-critically phase-matched KNbO3 at $T \approx 150$ °C is hence not recommended. For shorter FF wavelengths, critical phase-matching at room temperature is possible but results in a deleterious beam walk-off ($\rho \approx 1°$) of the blue wave, leading to an elliptical beam shape or even higher-order transverse patterns [9], combined with a narrow temperature bandwidth ($\Delta T \approx 0.5$ °C) [8]. As an additional drawback, KNbO3 is subject to blue-induced photo-chromic damage known as BLIIRA (blue-induced infrared absorption [10]). This nonlinear loss mechanism, together with the associated thermal lensing, has limited the highest reported conversion efficiency to $\eta \approx 80\%$, yielding 500 mW of blue power at 473 nm from a Nd:YAG laser power $P_\omega^{in} \approx 800$ mW [8].

Alternative widely used materials are LiB3O5 (LBO) or β-BaB2O4 (BBO) [11–13], but the low nonlinear coefficients of oxoborate crystals ($d_{\mathrm{eff}} \le 1$ pm/V) are not suited to the frequency conversion of low-power sources because it requires a tight control of the round-trip intracavity loss down to $\le 1\%$. Recently, we employed a critically phase-matched KTP in a doubly resonant sum-frequency generation (SFG) of a Nd:YAG laser and a low-power AlGaAs diode laser at 813 nm to produce 120 mW of 461 nm light, but the conversion
efficiency ($\eta < 30\%$) was limited by the strong power imbalance of the two pump sources [14]. To allow a more efficient cooling of atomic strontium, a new powerful and more convenient blue source from direct second-harmonic generation (SHG) of a master-oscillator-power-amplifier (AlGaAs MOPA) delivering an output power of 450 mW was then constructed. But unlike the experiment in [9], which uses a critically phase-matched KNbO3 semi-monolithic resonator to generate the same wavelength, we chose periodically-poled potassium titanyl phosphate (PPKTP). Electric-field poled quasi-phase-matched (QPM) oxide ferroelectrics such as PPLN (periodically-poled lithium niobate) and PPKTP have recently superseded the previous birefringence phase-matched materials for visible light generation [15–17] owing to their much higher effective nonlinearities ($d_{\mathrm{eff}}(\mathrm{PPLN}) \approx 17$–$18$ pm/V and $d_{\mathrm{eff}}(\mathrm{PPKTP}) \approx 7$–$9$ pm/V). Furthermore, QPM materials are intrinsically free of walk-off. For blue generation, PPKTP is preferred to PPLN, which exhibits strong photorefractive damage when used at room temperature. In [15], an intra-cavity PPKTP frequency-doubled Nd:YAG laser yielded an output power of 500 mW at 473 nm with an internal efficiency of 5.5%. Green light power of 268 mW was generated by Juwiler et al. [16] from a CW Nd:YAG laser with an efficiency of 70% in a standing-wave resonator. In [17] a MOPA diode laser (0.5 W) similar to ours generated 200 mW of blue light at 461 nm in a ring enhancement cavity, a value comparable to that obtained using a similar semiconductor laser, at identical wavelength, in a semi-monolithic standing-wave KNbO3 resonator [9].

However, due to the lower UV bandgap energy of KTP, linear absorption becomes an issue at wavelengths shorter than 500 nm. A detailed absorption measurement of flux-grown or hydrothermally-grown KTP in the spectral range from the bandgap wavelength (365 nm) to 600 nm revealed a large fluctuation from sample to sample [18]. At 473 nm for instance, values of $\alpha$ ranging from 0.034 to 0.085 cm$^{-1}$ were reported. In a recent closely related PPKTP–SHG experiment pumped at 846 nm, a value $\alpha(423\ \mathrm{nm}) = 0.10$ cm$^{-1}$ has been measured [19]. Strong thermal lensing effects [20] arising from the blue absorption were the main limitation of their power efficiency scaling in genuine CW operation ($\eta = 60\%$, corresponding to 225 mW of 423 nm power for 375 mW of mode-matched Ti:sapphire laser). Higher blue power (400 mW) could be obtained for the same circulating power of $P_c = 5.5$ W only in pulsed fringe-scanning mode, which allows more efficient heat dissipation within the PPKTP crystal. Such a severe limitation in CW operation actually arose from the tight focusing used ($w_0 = 17$ µm, corresponding to the theoretical optimum of the single-pass efficiency for the PPKTP crystal length $L_C = 10$ mm [23,24]) and the associated thermal lens power, which scales as $w_0^{-2}$ [20]. In true CW operation, the thermal focal length (which can be as short as a few centimeters, see Section 6) experienced by the circulating fundamental power impedes efficient mode-and-impedance matching of the input beam. For a symmetric linear resonator for instance, thermal lensing has been shown to be responsible for the clamping of the circulating power to a low critical value $P_c^{crit}$ corresponding to the collapse of the secondary thermally-induced waists [20].

In the present experiment, we deliberately avoid optimal single-pass focusing to circumvent these thermal lensing effects. In Section 2 we show that, owing to the large nonlinearity of PPKTP, one does not require extremely low intra-cavity linear losses to maintain the conversion efficiency constant over a wide range of focusing parameters.
The latter is defined by $L = L_C/z_R$, where $L_C$ is the PPKTP length and $z_R = k_\omega w_0^2/2$ is the Rayleigh range of the cavity mode. We find that loose focusing to $w_0 = 40$ µm in a 20-mm long PPKTP crystal still results in a large single-pass efficiency $\Gamma = P_{2\omega}/P_c^2 \approx 2.3 \times 10^{-2}$ W$^{-1}$ and in a stable CW operation of the resonant cavity at the maximum available mode-matched power of $P_\omega^{in} = 310$ mW with no evidence of serious lensing effects. Using such a strategy, blue power scaling to half a Watt should be possible with $\approx 0.7$ W of mode-matched input power.

In Section 3 we briefly describe the experimental setup, highlighting some measurement procedures aimed at an accurate determination of important parameters such as the mode-matching factor $\kappa$ or the circulating power $P_c$. Section 4 is devoted to the intracavity measurement of the conversion efficiency that determines the final enhancement efficiency, taking advantage of the TEM00 resonator mode filtering of the non-Gaussian beam of the fundamental MOPA laser and making use of the accurate evaluation of the Gaussian beam SHG focusing function $h$ [24]. From these measurements, we derive a consistent value of the $d_{33}$ nonlinear tensor element of KTP. The comparison of the recorded temperature tuning curve with the functional dependence given by two of the most recently published linear and thermo-optic dispersion relations of KTP [25,26] shows a perfect agreement between theory and experiment, thus providing a stringent validation test of those dispersion relations for PPKTP in the blue/near-IR spectrum. Having determined all the relevant parameters, we then present (Section 5) the resonant enhancement results, which are in good agreement with the theoretical expectation, with a record efficiency of $\eta = 75\%$. The paper ends with a brief thermal effects analysis (Section 6) which supports that the experiment is indeed not limited by thermal effects.

2. Analysis of singly resonant SHG efficiency versus focusing

We start by investigating the dependence of the power conversion efficiency on the
focusing parameter $L$. At zero cavity detuning, the internal circulating FF power $P_c$ in a singly-resonant ring resonator is given by [3,27]

$$\frac{P_c}{P_\omega^{in}} = \frac{T_1}{\left[1 - \sqrt{(1 - T_1)(1 - \epsilon)(1 - \Gamma P_c)}\right]^2}, \qquad (1)$$

where $T_1 = 1 - R_1$ is the transmission factor of the input coupler and $\epsilon$ is the distributed round-trip passive fractional loss (excluding $T_1$). $\Gamma$, expressed in W$^{-1}$, is the depletion due to nonlinear effects. It can be written as the sum of two terms

$$\Gamma = \Gamma_{\mathrm{eff}} + \Gamma_{\mathrm{abs}}, \qquad (2)$$

where $\Gamma_{\mathrm{eff}}$ is the conversion efficiency, $P_{2\omega} = \Gamma_{\mathrm{eff}} P_c^2$, and $\Gamma_{\mathrm{abs}}$ is the efficiency of the second-harmonic absorption process, which cannot be neglected here, $P_{\mathrm{abs}} = \Gamma_{\mathrm{abs}} P_c^2$. The net power conversion efficiency $\eta$ calculated from (1) obeys the implicit equation [7]

$$\sqrt{\eta}\left[2 - \sqrt{1 - T_1}\left(2 - \epsilon - \Gamma\sqrt{\frac{\eta P_\omega^{in}}{\Gamma_{\mathrm{eff}}}}\right)\right]^2 - 4 T_1 \sqrt{\Gamma_{\mathrm{eff}} P_\omega^{in}} = 0. \qquad (3)$$

Given $\Gamma$, which depends on the focusing and the crystal length, and given $\epsilon$ and the maximum available mode-matched $P_\omega^{in}$, $\eta$ in Eq. (1) can be optimized against $T_1$ to yield

$$T_1^{opt} = \frac{\epsilon}{2} + \sqrt{\frac{\epsilon^2}{4} + \Gamma P_\omega^{in}}. \qquad (4)$$

The conversion efficiency $\Gamma_{\mathrm{eff}}$ can be evaluated using the undepleted pump SHG theory taking linear absorption into account [23,24]. For a waist location at the centre of the crystal it can be written as [24,28]

$$\Gamma_{\mathrm{eff}} = \frac{P_{2\omega}}{P_c^2} = \frac{2\omega^2 d_{\mathrm{eff}}^2}{\pi \epsilon_0 c^3 n_\omega^2 n_{2\omega}}\, L_C k_\omega \exp(-\alpha_{2\omega} L_C)\, h(a, L, \sigma), \qquad (5)$$

$$h(a, L, \sigma) = \frac{1}{2L}\int\!\!\int_{-L/2}^{+L/2} ds\, ds'\, \frac{\exp[-a(s + s' + L) - i\sigma(s - s')]}{(1 + is)(1 - is')}. \qquad (6)$$

In Eqs. (5) and (6), $k_\omega = 2\pi n_\omega/\lambda_\omega$ is the FF wavevector internal to the medium, $\alpha_{n\omega}$ ($n = 1, 2$) are the linear absorption coefficients, $a = (\alpha_\omega - \alpha_{2\omega}/2) z_R$, $L = L_C/z_R$ is the focusing parameter (which differs by a factor 2 from the definition given by Boyd and Kleinman [23]) and $\sigma = \Delta k \cdot z_R$ is the normalized wavevector mismatch given by

$$\Delta k(T) = k_{2\omega}(T) - 2k_\omega(T) - 2\pi/\Lambda(T). \qquad (7)$$

$\Lambda(T)$ is the PPKTP grating period, whose temperature dependence can be calculated with published thermal expansion coefficients of KTP [22,29]. We can note that, when diffraction is considered, the value of $\Delta k$ that optimizes the focusing function $h$ versus $\sigma$ is not zero, unlike for a plane wave [24].

The second-harmonic absorption efficiency $\Gamma_{\mathrm{abs}}$ is more difficult to express, except in two limits. For a plane wave, one can easily deduce the profile $P_{2\omega}(z, \alpha_{2\omega})$ for $0 \le z \le L_C$ from Eqs. (5) and (6), and thus evaluate the absorbed power:

$$P_{\mathrm{abs}} = \alpha_{2\omega} \int_0^{L_C} P_{2\omega}(z, \alpha_{2\omega})\, dz. \qquad (8)$$

When the beam is tightly focused, the conversion occurs only at the center of the crystal, and then

$$P_{\mathrm{abs}} = \left(e^{\alpha_{2\omega} L_C/2} - 1\right) \Gamma_{\mathrm{eff}} P_c^2. \qquad (9)$$

With our crystal we measured $\alpha_{2\omega} = 0.14$ cm$^{-1}$, which leads to $\Gamma_{\mathrm{abs}}/\Gamma_{\mathrm{eff}} = 0.1$ (resp. 0.13) in the plane wave (resp. tight focusing) limit. Given the small difference between both values and since we are mainly interested in focusing below optimal, we take for the whole analysis the plane wave value (footnote 1).

The other parameters of the model are also taken from experimental measurements (see below).
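As a rough numerical cross-check of Eqs. (5) and (6) as read here, the sketch below evaluates the focusing function and the single-pass efficiency. It relies on the observation that the double integral in Eq. (6) factorises into a single integral times its complex conjugate. The parameter values (wavelength, indices, $d_{\mathrm{eff}}$, absorption coefficients, $w_0 = 43$ µm) are the ones quoted elsewhere in the paper; the function names, the Simpson integration and the scan range for $\sigma$ are choices made for this illustration, not the authors' procedure.

```python
import cmath
import math

C_LIGHT = 2.99792458e8    # speed of light, m/s
EPS0 = 8.8541878128e-12   # vacuum permittivity, F/m


def focusing_h(a, L, sigma, n_steps=400):
    """Eq. (6) written as h = exp(-a L) |I|^2 / (2 L), where
    I = integral over s in [-L/2, L/2] of exp(-(a + i sigma) s) / (1 + i s).
    Composite Simpson rule; n_steps must be even."""
    step = L / n_steps
    total = 0j
    for j in range(n_steps + 1):
        s = -L / 2 + j * step
        weight = 1 if j in (0, n_steps) else (4 if j % 2 else 2)
        total += weight * cmath.exp(-(a + 1j * sigma) * s) / (1 + 1j * s)
    integral = total * step / 3
    return math.exp(-a * L) * abs(integral) ** 2 / (2 * L)


def gamma_eff(w0, L_c, lam, n1, n2, d_eff, alpha1, alpha2, sigma):
    """Single-pass efficiency of Eq. (5) in 1/W (SI units throughout)."""
    k1 = 2 * math.pi * n1 / lam          # FF wavevector inside the crystal
    z_r = k1 * w0 ** 2 / 2               # Rayleigh range
    L = L_c / z_r                        # focusing parameter
    a = (alpha1 - alpha2 / 2) * z_r
    omega = 2 * math.pi * C_LIGHT / lam
    prefactor = 2 * omega ** 2 * d_eff ** 2 / (
        math.pi * EPS0 * C_LIGHT ** 3 * n1 ** 2 * n2)
    return (prefactor * L_c * k1 * math.exp(-alpha2 * L_c)
            * focusing_h(a, L, sigma))


# Values quoted in the paper (alpha in 1/m: 0.3 %/cm and 0.14 /cm).
PARAMS = dict(w0=43e-6, L_c=20e-3, lam=922e-9, n1=1.8364, n2=1.9188,
              d_eff=9.5e-12, alpha1=0.3, alpha2=14.0)

# Scan the normalized mismatch sigma (range chosen for this sketch).
best = max(gamma_eff(sigma=x / 100, **PARAMS) for x in range(-150, 151))

if __name__ == "__main__":
    print(f"Gamma_eff at sigma = 0   : {gamma_eff(sigma=0.0, **PARAMS):.4f} 1/W")
    print(f"Gamma_eff, best sigma    : {best:.4f} 1/W")
```

Under these assumptions the optimized value comes out at a few times $10^{-2}$ W$^{-1}$, the same order as the measured $\Gamma_{\mathrm{eff}} = 0.023$ W$^{-1}$ at this waist, and it exceeds the $\sigma = 0$ value, which illustrates the remark that the optimum mismatch is not zero for a focused beam.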
We have $\epsilon = 0.02$, lumping the FF crystal linear loss (absorption + AR coating) and mirror reflection loss, and a crystal length of $L_C = 20$ mm. Our measured value for the polar tensor element of KTP, $d_{33} = 15$ pm/V, is slightly smaller than that of [30] and yields $d_{\mathrm{eff}} = 9.5$ pm/V with the refractive indices $n_\omega \equiv n_Z(922\ \mathrm{nm}) = 1.8364$ and $n_{2\omega} \equiv n_Z(461\ \mathrm{nm}) = 1.9188$ at $T = 30$ °C [26]. The mode-matched power is $P_\omega^{in} = 310$ mW.

Fig. 1 displays the efficiency curve $\eta(T_1^{opt}, L)$ for a beam waist range 18 µm $\le w_0 \le$ 100 µm. It corresponds to perfect impedance matching ($T_1 = T_1^{opt}$, see Eq. (4)) for each $\Gamma(L)$. It is clearly seen that $\eta$ is practically constant over the range 20 µm $\le w_0 \le$ 50 µm, meaning that it is not necessary to set the cavity waist at the optimal single-pass conversion as commonly believed, in spite of the twofold reduction of $\Gamma$ at the loose focusing end of the range. At $w_{opt} = 23.6$ µm, the circulating optimal FF power is $P_c = 2.56$ W (yielding $P_{2\omega} = 236$ mW) whereas at $w_0 = 50$ µm, $P_c = 3.4$ W ($P_{2\omega} = 220$ mW). For these nearly identical blue powers, the thermal lens power is 4 times larger at optimal focusing than at the looser focusing.
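The impedance-matching argument can be checked with a short numerical sketch of Eq. (1), $P_c/P_\omega^{in} = T_1/[1 - \sqrt{(1-T_1)(1-\epsilon)(1-\Gamma P_c)}]^2$, and Eq. (4), $T_1^{opt} = \epsilon/2 + \sqrt{\epsilon^2/4 + \Gamma P_\omega^{in}}$. The inputs are values quoted in the paper for $w_0 = 43$ µm ($\Gamma_{\mathrm{eff}} = 0.023$ W$^{-1}$, $\Gamma_{\mathrm{abs}}/\Gamma_{\mathrm{eff}} = 0.1$, $\epsilon = 0.02$, $P_\omega^{in} = 310$ mW); the input coupler transmission is taken as $T_1 = 0.12$, which is an assumption about how the quoted coupler value should be read. The function names and the bisection solver are illustrative choices.

```python
import math


def optimal_coupler(eps, gamma, p_in):
    """Optimal input coupler transmission, Eq. (4)."""
    return eps / 2 + math.sqrt(eps ** 2 / 4 + gamma * p_in)


def circulating_power(t1, eps, gamma, p_in, n_iter=100):
    """Solve Eq. (1) for the circulating power P_c by bisection.

    The residual P_c * (1 - sqrt(...))^2 - T1 * P_in increases
    monotonically with P_c on [0, 1/gamma], so one root is bracketed."""
    def residual(p_c):
        root = math.sqrt((1 - t1) * (1 - eps) * (1 - gamma * p_c))
        return p_c * (1 - root) ** 2 - t1 * p_in

    lo, hi = 0.0, 1.0 / gamma
    for _ in range(n_iter):
        mid = 0.5 * (lo + hi)
        if residual(mid) > 0:
            hi = mid
        else:
            lo = mid
    return 0.5 * (lo + hi)


GAMMA_EFF = 0.023             # 1/W, measured value at w0 = 43 um
GAMMA = 1.1 * GAMMA_EFF       # adds the plane-wave absorption term (ratio 0.1)
EPS = 0.02                    # passive round-trip loss
P_IN = 0.310                  # W, mode-matched input power
T1 = 0.12                     # assumed reading of the input coupler value

t_opt = optimal_coupler(EPS, GAMMA, P_IN)
p_c = circulating_power(T1, EPS, GAMMA, P_IN)
eta = GAMMA_EFF * p_c ** 2 / P_IN

if __name__ == "__main__":
    print(f"T1_opt = {t_opt:.3f}")
    print(f"P_c    = {p_c:.2f} W")
    print(f"eta    = {eta:.2f}")
```

With these inputs the sketch gives an optimal coupler transmission near 0.10, a circulating power slightly above 3 W and an efficiency in the low 70% range, close to but not identical with the measured values reported later in the paper.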
Hence to avoid thermal effects, a cavity waist between 40 and 50 µm would be recommended ($L \le 2$) with an expected efficiency $\eta > 70\%$.

Footnote 1: The predictions of the model will then be slightly too optimistic for large $L$.

This insensitivity of $\eta$ as a function of $w_0$ is due to the large nonlinear efficiency, which dominates round-trip passive losses, even for the not so small $\epsilon = 0.02$ chosen here. Experimentally, we indeed found that nearly optimal $\eta$ was measured over a broad range of cavity waist values, the best trade-off giving tolerable thermal effects being at $w_0 = 43$ µm. In the same way, the value of $T_1$ is not critical. Our input coupler exhibits $T_1 = 12\%$, which causes a loss smaller than 1% on the generated blue power in comparison to $T_1^{opt} = 10\%$, which would optimize $\eta$ for $w_0 = 43$ µm (Eq. (4)).

3. Experimental setup and measurement procedures

The frequency-doubling setup is sketched in Fig. 2. A conventional unidirectional ring cavity is chosen. The commercial MOPA pump laser is made of a grating-tuned extended-cavity master diode laser in the Littrow configuration, injecting a tapered semiconductor amplifier (Toptica Photonics AG). After a $-70$ dB Faraday isolation stage, the MOPA provides a useful single-longitudinal-mode power $P_\omega = 450$ mW at $\lambda_\omega = 922$ nm, with a short-term linewidth of less than 1 MHz.
The transverse beam shape is far from a fundamental Gaussian mode and strongly depends on the tapered amplifier injection current. A system of lenses mode-matches the input FF beam to the larger waist of the bow-tie ring resonator, located between the 2 plane mirrors M1 and M2. The folding angle of the ring resonator is set to 11°, leading to a negligible astigmatism introduced by the two off-axis curved mirrors M3 and M4 (of radius of curvature 100 mm). The meniscus shape of M4 (M3) does not introduce any additional divergence of the transmitted FF or SH beam. This allows an accurate measurement (to ±5%) of the smaller waist $w_0$ located at the center of the PPKTP crystal from a z-scan measurement of the TEM00 diverging FF beam leaking out from M4. The diverging beam diameter is measured at different z-locations with a commercial rotating knife-edge device, and the cavity waist is retrieved from standard Gaussian optics laws.

The pump beam is coupled in through the partial reflector M1. Mirrors M2–M3 are high reflectors at 922 nm and M4 is dichroically coated at $\omega$, $2\omega$ with $R_\omega > 99.9\%$ and $T_{2\omega} = 98\%$. Side 2 of all optics is dual-band AR-coated with $R \le 0.5\%$. The dual-band AR-coated PPKTP crystal (Raicol Crystals Ltd.) has dimensions $2 \times 1 \times 20$ mm$^3$, with the z-propagation direction corresponding to the X-principal axis and the 1 mm thickness sides oriented along the Z-polar axis. A first-order (50%-duty-cycle) QPM periodic grating is patterned along the X-axis, with a period $\Lambda_0 \approx 5.5$ µm for temperature quasi-phase-matching around 30 °C.
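The order of magnitude of the grating period follows from setting $\Delta k = 0$ in Eq. (7), which for a plane wave and first-order QPM gives $\Lambda = \lambda_\omega / [2(n_{2\omega} - n_\omega)]$. The sketch below evaluates this with the refractive indices quoted in Section 2 for 30 °C; the function name is hypothetical, and the plane-wave condition ignores the small focusing-induced shift of the optimum mismatch mentioned in the text.

```python
def qpm_period(lam_fundamental, n_fund, n_sh):
    """First-order QPM period from k_2w - 2 k_w = 2 pi / Lambda (plane wave)."""
    return lam_fundamental / (2 * (n_sh - n_fund))


# Values quoted in the paper: 922 nm fundamental, n_Z at 922 nm and 461 nm.
period_um = qpm_period(922e-9, 1.8364, 1.9188) * 1e6

if __name__ == "__main__":
    print(f"Plane-wave QPM period: {period_um:.2f} um")
```

The result is close to 5.6 µm, consistent with the quoted period of about 5.5 µm; the exact design value of the grating is not stated in the text.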
The PPKTP chip is mounted in a small copper holder attached to a thermo-electric Peltier element with which we servo the crystal temperature to better than ±10 mK. The crystal Z-axis is matched with the direction of the electric-field polarization of the MOPA laser. The blue absorption coefficient $\alpha_{2\omega}$ of this crystal at 461 nm was measured with a second blue source, yielding $\alpha_{2\omega} = 0.14\ (\pm 5\%)$ cm$^{-1}$. This value is larger than the one ($\alpha_{2\omega} = 0.10$ cm$^{-1}$) measured at 423 nm by Goudarzi et al. [19] on a 1 cm PPKTP sample from a different manufacturer, thus confirming the large dispersion of the values from one sample to another.

Finally, because no suitable $\lambda/2$ plate was available in front of the cavity to vary the input power at constant MOPA output power, $P_\omega$ is varied with the PA injection current. As mentioned previously, such a power variation results in a substantial transverse mode change and in turn in a variation of the mode-matching factor $\kappa$. The coefficient $\kappa(P_\omega)$ was hence calibrated in the absence of blue conversion (by tuning the temperature to a zero-conversion regime) and used to rescale the mode-matched power. For the range $300 < P_\omega < 450$ mW, the mode-matching factor is found to be practically constant and equal to $\kappa \approx 0.7$.

In the following section, the conversion efficiency $\Gamma_{\mathrm{eff}}(w_0)$ (Eq. (5)) will be measured internal to the cavity. For the measurement of the circulating FF power $P_c$, the transmissivity of mirror M4 at 922 nm was accurately calibrated to $1.2 \times 10^{-5} \pm 10\%$. The FF and the SH powers were calibrated with a thermal powermeter with an uncertainty below 5%.

4. Measurement of PPKTP effective nonlinearity and tuning curve

To model the experimental singly-resonant conversion efficiency, an accurate knowledge of the experimental $\Gamma_{\mathrm{eff}}(w_0)$ is needed. We choose not to use the standard single-pass method for the experimental measurement, since the poor beam quality of the MOPA output would contradict the Gaussian pump assumption of Eq. (6). By placing the PPKTP inside the resonator, the mode filtering effect
of the cavity provides instead a pure TEM00 pump beam with accurately known waists from the measurement method outlined in the previous section. Provided that pump depletion can be neglected, the intracavity power is considered as constant all along the crystal, and is defined as the solution of Eq. (1).

Several values of $\Gamma_{\mathrm{eff}}$ were measured for a range of $P_c$ and 3 waist values $w_0 = 56, 43, 36$ µm, to yield, respectively, $\Gamma_{\mathrm{eff}} = 0.017, 0.023, 0.028\ (\pm 10\%)$ W$^{-1}$. The data points of $P_{2\omega}$ versus $P_c^2$ were excellently fitted by a linear function, meaning that the low depletion assumption holds for all achievable values of $P_c$, which is confirmed by an a posteriori consistency check of the upper bound value $\Gamma P_c < 0.08 \ll 1$. In Fig. 3, the resulting $\Gamma_{\mathrm{eff}}(w_0)$ are plotted against the focusing parameter $L$ along with the theoretical curve (Eq. (5)). The only parameter adjusted to match the experimental points to the curve is the effective nonlinear coefficient $d_{\mathrm{eff}} = (2/\pi) d_{33}$ for a first-order QPM, which is found to be $d_{\mathrm{eff}} = 9.5\ (\pm 5\%)$ pm/V. Such a value is slightly higher than those measured elsewhere (5–8 pm/V) from OPO threshold measurement or difference frequency generation [25,30], even when wavelength dispersion is accounted for. This value yields for KTP $d_{33}(461\ \mathrm{nm}) = 15\ (\pm 5\%)$ pm/V, which is the commonly reported value of this polar $\chi^{(2)}$ tensor element [30–32] and matches exactly the value (14.8 pm/V) reported by the manufacturer [33]. Accounting for Miller's dispersion law, this value is also in excellent agreement with the one measured at 541 nm on a crystal from the same manufacturer [34]. This result shows that the grating quality of our crystal is extremely high. We believe that the perfect match of $d_{\mathrm{eff}}$ with its maximum theoretical value stems from the pure Gaussian beam measurement and from an analysis taking diffraction and absorption effects into account, which is not always the case with some of the reported lower values, even accounting for grating periodicity defects.

We
have tried tight focusing close to the optimal waist shown in Fig. 3 without any improvement in the singly-resonant conversion efficiency, despite the larger $\Gamma_{\mathrm{eff}}$, confirming the prediction of Section 2. At $w_0 = 36$ µm, the conversion efficiency is identical to the one at 43 µm. As expected, increasing thermal effects occurred with smaller waists, which could be assessed from a broadened triangular bistable shape of the FF and blue fringes as the cavity length is swept on the contracting length side of the voltage ramp (see e.g. Fig. 10 of [19] and the thermal effects analysis in Section 6). On this side of the fringe, passive self-stabilization of the optical path length of the resonator tends to maintain the cavity in resonance with the incoming FF frequency. Such opto-thermal dynamics of thermally-loaded resonators has been analyzed in detail in [20]. Furthermore, the active locking of the cavity to the top of the distorted fringe, a bistable operating point [20], becomes problematic, as reported in [19].

The temperature tuning curve measured internal to the cavity is also shown in Fig. 4 for the final waist choice of $w_0 = 43$ µm, corresponding to $L = 1.73$. The oscillatory fine structure pattern at the wings is clearly seen. The solid line is the conversion efficiency computed from Eqs. (5)–(7), where the wavevector mismatches $\Delta k(T)$ have been computed with the Sellmeier relations at room temperature given in [25], the thermo-optic dispersion relation of [26] and the thermal expansion coefficient $\alpha_X = 6.8 \times 10^{-6}$ /°C reported in [29]. Given the short grating period, a strikingly excellent agreement is seen with the data points (sidelobe amplitudes and positions) when the $h(\sigma)$ focusing function is used instead of the plane-wave formula. Apart from a lateral temperature shift of the calculated tuning curve in Fig. 4, we stress again that no fit was made to get such an agreement, which not only provides a stringent validation of the dispersion data used, at least for PPKTP produced by our manufacturer, but also highlights the excellent quality of the first-order periodic grating over the whole PPKTP length. The FWHM temperature tuning bandwidth is $\Delta T = 1.1$ °C.

5. Resonant enhancement efficiency

The final cavity dimensions yielding the least thermal effects while providing the best power conversion efficiency when the cavity length is servo-controlled correspond to cavity waists $(w_0, w_1) = (43, 163)$ µm, given by a M3–M4 spacing of $\approx 130$ mm and a total ring cavity round-trip length $L_{cav} = 569$ mm. For genuine CW stable operation, an active electronic servo based on an FM-to-AM fringe modulation technique was preferred to the optically phase-sensitive Hänsch–Couillaud method, which was reported to fail when thermal effects arise [8,19].

The round-trip intracavity fractional loss $\epsilon$ is measured by fitting, at the QPM temperature, the total losses $p = \epsilon + \Gamma P_c$ of the cavity as a function of $P_c$. Otherwise, $p$ is related to the contrast $C$ of the cavity reflection fringes:

$$p = C\,\frac{P_\omega}{P_c}. \qquad (10)$$

This relationship is very reliable since it does not depend on the mode-matching coefficient $\kappa$. The fit yields $\epsilon = 0.021\ (\pm 5\%)$, which is further checked from the value of the cavity finesse $F \simeq 40$ in the absence of nonlinear conversion. This also gives a measurement of $\Gamma$ which is consistent with the value quoted above.

When the cavity is close to impedance matching, the reflection contrast becomes nearly constant and is then equal to the mode-matching coefficient; we measured $\kappa(P_\omega^{max}) = 73\%$. For intermediate values of the pump power, the evaluation is more difficult: $\kappa$ can be deduced from the contrast of reflected fringes at zero conversion, once $\epsilon$ is known.

The generated blue power and net power efficiency $\eta$ are plotted
against the mode-matched power in Figs. 5 and 6. The solid lines are computed from Eq. (3) using the experimentally measured parameters. The experimental points match this curve well, meaning that thermal effects are not a problem up to the maximum available laser power. The small offset between the experimental points and the theoretical curve from 100 to 250 mW is probably due to the less accurate evaluation of the mode-matched power in this range. At maximum power, the finesse drops to F ≃ 30 due to the nonlinear loss (see inset of Fig. 5, which shows the reflected FF fringe with a contrast of ≈73%). The conversion efficiency does not depend on whether the measurement is made under pulsed scanning mode or under cavity-locked operation. In the latter case however, a slight adjustment of the PPKTP temperature has to be performed to cancel the temperature-induced phase mismatch under CW operation (Section 6). At the maximum mode-matched input power $P_\omega^{\mathrm{in}}$ = 310 mW ($P_c$ = 3.2 W is the corresponding circulating power), one obtains $P_{2\omega}$ = 234 mW, corresponding to $\eta$ = 75%. If one takes into account the infrared laser power which is not in the TEM00 mode, the global doubling efficiency of the system is 52%.

6. Blue-induced thermal effects analysis

The inset of Fig. 6 shows a slight fringe asymmetry observed on both the FF and SH fringes, reminiscent of the onset of thermal bistability. 
On the contracting cavity length scan (solid line), the fringe is broadened because the opto-thermal dynamics on this side is characterized by a self-stabilizing effect of the optical path to the laser frequency, whereas on the expanding length side (dotted curve) the feedback is positive [20,21]. In the PPKTP experiment of [19], the thermal effects were so prominent that the fringe shape broadens over several cold cavity linewidths to acquire a triangular shape. In our case, the broadening is comparatively very modest. It is expected that heating is due to both the residual FF absorption and the SH absorption. To quantify further the role of residual thermal effects, we use the radial heat diffusion model detailed in [20], assuming an equivalent crystal rod radius equal to the half-thickness of the PPKTP ($r_0$ = 0.5 mm). The temperature rise due to the FF absorption can then be written as $\Delta T \equiv T - T_0 = \Delta T_0 - \tfrac{1}{2}\rho r^2$, where $T_0$ is the nominal phase-matching temperature in the absence of heating and $r$ is the radial coordinate. The uniform temperature shift $\Delta T_0$ is expressed as

$$\Delta T_0 = \frac{\alpha_\omega P_c}{4\pi K_C}\left[0.57 + \ln\!\left(\frac{2 r_0^2}{w_0^2}\right)\right] \equiv k P_c. \tag{11}$$

The quantity $\alpha_\omega P_c$ denotes the absorbed power per unit length. The quadratic term coefficient in $\Delta T$, responsible for lensing, is $\rho = \alpha_\omega P_c/(\pi K_C w_0^2)$ with a corresponding thermal lens power

$$\frac{1}{f_{\mathrm{th}}} = \frac{P_\omega^{\mathrm{abs}}}{\pi w_0^2}\left(\frac{dn_\omega/dT}{K_C}\right), \tag{12}$$

where $P_\omega^{\mathrm{abs}} = \alpha_\omega L_C P_c$ is the total FF absorbed power ($P_\omega^{\mathrm{abs}}$ = 19.5 mW at $P_c$ = 3.25 W for $\alpha_\omega$ = 0.3% cm⁻¹). The quantity in parentheses, $\zeta = (dn_\omega/dT)/K_C$, is the thermal figure-of-merit of KTP with $K_C$ = 3.3 W/(m °C) [22] and $dn_\omega/dT$ = 1.53×10⁻⁵ K⁻¹ [26]. The normalized FF fringe lineshape under adiabatic length scan $y(\delta) = P_c(\delta)/P_{cm}$, where $P_c(\delta = 0)$ is the maximum intracavity power given by Eq. (1), is described by

$$y(\delta) = \frac{1}{1 + \left[\delta - \Delta\, y(\delta)\right]^2}, \tag{13}$$

where $\delta = (\nu_L - \nu_{\mathrm{cav}})/\gamma$ is the normalized cavity detuning, $\gamma$ being the cold fringe half-linewidth: $\gamma = c/[L_{\mathrm{cav}} + L_C(n_\omega - 1)]/2F$ = 8.5 MHz. In Eq. (13), the Airy function has been approximated by a Lorentzian in the vicinity of the resonance. For $\Delta \neq 0$, the cavity resonance is seen to move 
adiabatically as the crystal is thermally loaded. The FWHM of the thermally broadened resonance is given in units of $\gamma$ by $\Delta = \alpha_\omega F L_C P_c \zeta/(2\pi\lambda_\omega)$ [20]. For a sufficiently strong thermal load ($\Delta \gg 1$) the fringe shape presents a hysteresis behavior, the saddle-node point corresponding to the top of the fringe. For $P_c$ = 3.25 W, one finds $\Delta T_0$ = 0.14 °C and $f_{\mathrm{th}}$ = 65 mm. Such a short focal length means that significant FF lensing still occurs despite the looser focusing used and the small value of the FF absorption coefficient. Only a rigorous hot ring cavity waist analysis, similar to the one developed for a standing-wave symmetric resonator in [20], can give an indication of the influence of this thermal lens on the cold cavity waist. For a symmetric resonator, the length must be reduced to the minimum allowed space in order to contain the thermal lens effect. Due to $\Delta T_0$, the FF hot fringe is shifted from the cold fringe position by $\Delta \simeq 0.47$ half-linewidth only, given F ≈ 30.

To evaluate the heating due to the SH absorbed power, Eqs. (11) and (12) cannot be used as they are, because the blue power is not uniform along the crystal ($P_{2\omega}$ = 0 at z = 0 and is maximum at z = $L_C$). It is however possible to estimate the total blue absorbed power $P_{2\omega}^{\mathrm{abs}} = \Gamma_{\mathrm{abs}} P_c^2$ from Eq. (8), which yields $P_{2\omega}^{\mathrm{abs}}$ = 25 mW and $\Gamma_{\mathrm{abs}}$ = 2.4×10⁻³ W⁻¹. This heating power can be distributed uniformly if we define an effective absorption
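
Because Eq. (13) defines y only implicitly, a small numerical sketch may help in reading it. The function below is illustrative only: the name `thermal_fringe` and the use of bisection are assumptions of this sketch, not the authors' method. It returns one root of Eq. (13) in (0, 1], which is adequate for weak thermal loads such as the Δ ≈ 0.47 quoted above; for strong loads several roots can coexist (hysteresis) and a single bisection will not expose them.

```python
def thermal_fringe(delta, big_delta, tol=1e-12):
    """Return one solution y in (0, 1] of y = 1 / (1 + (delta - big_delta*y)**2).

    delta     : normalized cavity detuning (units of the cold half-linewidth)
    big_delta : thermal load parameter (the Delta of Eq. 13)
    Bisection is an illustrative choice; it finds a single root only.
    """
    def f(y):
        # Eq. (13) rewritten as f(y) = 0
        return y * (1.0 + (delta - big_delta * y) ** 2) - 1.0

    lo, hi = 0.0, 1.0  # f(0) = -1 < 0 and f(1) = (delta - big_delta)**2 >= 0
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if f(mid) < 0.0:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


if __name__ == "__main__":
    # Scan a few detunings for the weak thermal load quoted in the text.
    for d in (-2.0, -1.0, 0.0, 0.47, 1.0, 2.0):
        print(f"delta = {d:+.2f}  y = {thermal_fringe(d, 0.47):.4f}")
```

In this sketch the peak y = 1 is reached at δ = Δ, which matches the statement that the hot fringe is displaced from the cold one by Δ half-linewidths.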

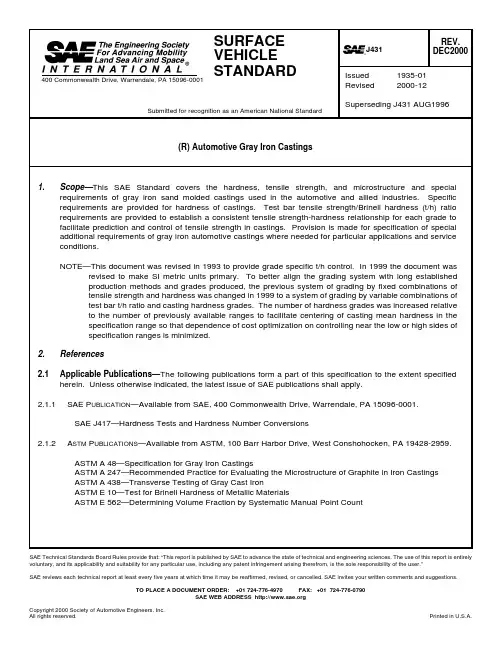

SAE Technical Standards Board Rules provide that: “This report is published by SAE to advance the state of technical and engineering sciences. The use of this report is entirely voluntary, and its applicability and suitability for any particular use, including any patent infringement arising therefrom, is the sole responsibility of the user.”

SAE reviews each technical report at least every five years at which time it may be reaffirmed, revised, or cancelled. SAE invites your written comments and suggestions.

Copyright 2000 Society of Automotive Engineers, Inc.

2.2 Related Publications—The following publications are provided for information purposes only and are not a required part of this document. Additional information concerning gray iron castings, their properties, and use can be obtained from:

1. Metals Handbook, Vol. 1, 10th Edition, ASM International, Materials Park, OH
2. Cast Metals Handbook, American Foundrymen's Society, Des Plaines, IL
3. 1981 Iron Castings Handbook, Iron Castings Society, Inc., Cleveland, OH
4. H.D. Angus, “Physical and Engineering Properties of Cast Iron,” British Cast Iron Research Association, Birmingham, England, 2nd Edition, 1976
5. “Gray, Ductile, and Malleable Iron Castings Current Capabilities,” STP-455, American Society for Testing and Materials, 100 Barr Harbor Drive, West Conshohocken, PA 19428-2959
6. G.N.J. Gilbert, “Engineering Data on Grey Cast Iron,” BCIRA (1977), Alvechurch, Birmingham, England
7. “Tables for Normal Tolerance Limits, Sampling Plans and Screening,” R.E. Odeh and D.B. Owen, Marcel Dekker, Inc., New York and Basel, 1980
8. “Fatigue Properties of Gray Cast Iron,” L.E. Tucker and D.R. Olberts, SAE Paper 690471

3. Grade Definition and Designation

3.1 Iron Grade—Gray iron grades, defined by their minimum test bar t/h ratio, are designated by the letter G followed by a number equaling the defining minimum test bar t/h ratio multiplied by 100. 
The units used for this purpose are MPa for both tensile strength and hardness. The t/h ratio is dimensionless.

EXAMPLE—G10 designates a gray iron having minimum test bar t/h = 0.100.

3.2 Hardness Grade—Hardness grades, defined by minimum hardness exhibited in castings, are designated by the letter H followed by a number equaling the minimum casting hardness divided by 100. The casting hardness unit used for this purpose is the MPa.

EXAMPLE—H18 designates minimum casting hardness of 1800 MPa.

3.3 Casting Grade—SAE gray iron casting grades are defined and designated by combining the iron grade and the hardness grade designations.

EXAMPLE—G10H18 designates iron in castings with minimum test bar t/h of 0.100 MPa/MPa and minimum casting hardness of 1800 MPa.

3.4 Special Requirements—Special requirements, defined for special applications, are designated by a lowercase suffix letter placed at the end of the casting grade designation.

EXAMPLE—G11H20b designates iron meeting special requirements of special service brake drums.

3.5 Equivalency and Conversion—Equivalency information for engineering purposes, between this and other standards, is provided in A.4.1, A.4.6, and A.4.7. Grades of this document can have multiple equivalents with grades of previous SAE and most other standards, as exemplified by grades G3000 and G4000. Determination of the current grade equivalent for castings established in production under previous SAE or other documents shall be by the producer, in accordance with 5.5.3, based on historical or current test data from the established process, and reported to and approved by the purchaser. 
When the producer does not have access to the applicable historical data, grade determination shall be based on samples provided by producer and approved by purchaser.

4. Grades

4.1 Iron Grades—Iron grades and their t/h lower limit requirements are shown in Table 1.

4.2 Hardness Grades—Hardness grades and their required lower hardness limits are shown in Table 2.

4.3 Special Requirements—Special additional requirements for particular applications and service conditions and their lower case letter designators are shown in Table 3. Special additional requirements shall not change test bar t/h ratio or casting hardness requirements.

TABLE 1—IRON GRADES

| Grade | Test Bar t/h Ratio Lower Limit(1), MPa/MPa(2) | Test Bar t/h Ratio Lower Limit(1), psi/HB(3)(4) |
|---|---|---|
| G7 | 0.070 | 100 |
| G9 | 0.090 | 128 |
| G10 | 0.100 | 142 |
| G11 | 0.110 | 156 |
| G12 | 0.120 | 171 |
| G13 | 0.130 | 185 |

1. Statistically defined.
2. Both tensile and hardness in MPa units.
3. For reference only. The MPa/MPa SI metric values are primary. See Section 1.
4. Units of HB are kgf per mm².

TABLE 2—HARDNESS GRADES

| Grade | Casting Hardness Lower Limit(1), MPa(2) | Casting Hardness Lower Limit(1), HB(3) |
|---|---|---|
| H10 | 1000 | 102 |
| H11 | 1100 | 112 |
| H12 | 1200 | 122 |
| H13 | 1300 | 133 |
| H14 | 1400 | 143 |
| H15 | 1500 | 153 |
| H16 | 1600 | 163 |
| H17 | 1700 | 173 |
| H18 | 1800 | 184 |
| H19 | 1900 | 194 |
| H20 | 2000 | 204 |
| H21 | 2100 | 214 |
| H22 | 2200 | 224 |
| H23 | 2300 | 235 |
| H24 | 2400 | 245 |

1. Statistically defined.
2. Hardness in MPa = HB multiplied by 9.80665.
3. Units of HB are kgf per mm².

4.4 Casting Grades—Combination of iron grade, hardness grade, and special requirement designation, if any, defines casting grade. A partial list of casting grades in common production and use, identified as reference grades and considered standard, is given in Table 4 with current and previous SAE designations. 
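
The designation rules of Section 3 and the unit footnotes of Tables 1 and 2 can be illustrated with a short sketch. It is not part of the standard: the function names are hypothetical, and the MPa-to-psi factor is the usual engineering value rather than one stated in this document.

```python
import re

MPA_PER_HB = 9.80665    # Table 2, footnote 2: MPa = HB x 9.80665
PSI_PER_MPA = 145.0377  # assumption: standard conversion, not given in the text


def parse_casting_grade(designation):
    """Split a designation such as 'G10H18' or 'G11H20b' into its limits.

    Hypothetical helper. Returns the minimum test bar t/h ratio (MPa/MPa),
    the minimum casting hardness (MPa), and the special-requirement suffix.
    """
    m = re.fullmatch(r"G(\d+)H(\d+)\s*([a-z]?)", designation.strip())
    if m is None:
        raise ValueError(f"not a casting grade designation: {designation!r}")
    iron, hardness, suffix = int(m.group(1)), int(m.group(2)), m.group(3)
    return {
        "th_min": iron / 100,                # iron grade number = t/h x 100
        "hardness_min_mpa": hardness * 100,  # hardness grade number = MPa / 100
        "special": suffix or None,
    }


def mpa_to_hb(hardness_mpa):
    """Hardness in HB (kgf/mm^2), rounded to the nearest integer as in Table 2."""
    return round(hardness_mpa / MPA_PER_HB)


def th_to_psi_per_hb(th_ratio):
    """t/h ratio expressed in psi/HB, the reference unit of Table 1."""
    return round(th_ratio * PSI_PER_MPA * MPA_PER_HB)


if __name__ == "__main__":
    grade = parse_casting_grade("G10H18")
    print(grade)
    print(mpa_to_hb(grade["hardness_min_mpa"]), "HB")
    print(th_to_psi_per_hb(grade["th_min"]), "psi/HB")
```

Under these assumptions the sketch gives 184 HB and 142 psi/HB for G10H18, in line with the H18 row of Table 2 and the G10 row of Table 1.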
Other combinations of iron grade and hardness grade which are established in production and use or become so in the course of application development, or in accordance with 3.5 and 5.5.3, are also considered standard.

NOTE—For castings successfully established in production and use under previous designations, the current SAE casting grade shall be determined by the producer and approved by the purchaser (see 3.5).

TABLE 3—SPECIAL REQUIREMENTS

| Designator | Application | Requirements |
|---|---|---|
| a | Brake Drums and Discs and Clutch Plates for Special Service | 1. Total Carbon 3.4% minimum. 2. Microstructure: Lamellar Pearlite. Ferrite < 15%(1) |
| b | Brake Drums and Discs and Clutch Plates for Special Service | 1. Total Carbon 3.4% minimum. 2. Microstructure: Lamellar Pearlite. Ferrite or carbide < 5%(1) |
| c | Brake Drums and Discs and Clutch Plates for Special Service | 1. Total Carbon 3.5% minimum. 2. Microstructure: Lamellar Pearlite. Ferrite or carbide < 5%(1) |
| d | Alloy Hardenable Gray Iron Automotive Camshafts(2) | 1. Chromium shall be 0.85 to 1.50%(3) 2. Molybdenum shall be 0.40 to 0.60%(3) 3. Microstructure of cam nose: Extending to 45 degrees on both sides of cam nose centerline and to minimum depth of 3.2 mm from the surface shall consist of primary carbide (cellular and/or acicular) and graphite in a matrix of fine pearlite. 4. The amount of carbide in the cams and method of checking shall be specified by the purchaser. 5. Casting Hardness check location shall be on a bearing surface. |

1. See ASTM E 562.
2. As-cast requirements. Camshafts may be flame or induction hardened to specified hardness and depth on cam surfaces.
3. Ranges for specific castings shall be within the ranges shown.

TABLE 4—REFERENCE GRADES(1)

| SAE Casting Grade | Previous SAE Designation(2) |
|---|---|
| G9H12 | G1800 |
| G9H17 | G2500 |
| G10H18 | G3000 |
| G11H18 | G3000 |
| G11H20 | G3500 |
| G12H21 | G4000 |
| G13H19 | G4000 |
| G7H16 c | G1800 h(3) |
| G9H17 a | G2500 a |
| G10H21 c | G3500 c |
| G11H20 b | G3500 b |
| G11H24 d | G4000 d |

1. Established in production and use and having near equivalents with previous SAE designations.
2. Equivalency based on tensile strength in 30 mm diameter test bars. See Table A4.
3. The h suffix was previously used to designate both t/h and carbon requirements for this grade.

5. Tensile Strength to Hardness Ratio, Hardness, and Casting Tensile Strength

5.1 Tensile strength values for the t/h ratio determination shall be obtained as shown in Figure 1 from separately cast 30 mm test bars (type “B”) in accordance with ASTM A 48 except sampling frequency shall be as needed for statistical analysis to determine conformance of t/h ratio with requirements of this document. Test specimens shall be at room temperature, defined as between 10 and 35 °C, during tensile testing.

FIGURE 1—TEST BAR HARDNESS LONGITUDINAL TEST ZONE IN RELATION TO TENSILE SPECIMEN

5.2 Test bar hardness for the t/h ratio determination shall be taken on the tensile test bar between bar center and midpoint of the as-cast radius, and between 50 and 75 mm from the as-cast bar end as shown in Figures 1 and 2.

FIGURE 2—TEST BAR HARDNESS RADIAL TEST ZONE

5.3 Brinell Hardness is considered standard for test bars and production castings and shall be determined according to ASTM E 10 after sufficient material has been removed from the casting surface to ensure representative hardness readings. The 10 mm ball and 3000 kgf load shall be used unless physically precluded by specimen dimensions as given in ASTM E 10. 
Test specimens shall be at room temperature, defined as between 10 and 35 °C, during hardness testing.

5.3.1 When a hardness test other than the Brinell test with 10 mm ball and 3000 kgf load must be used, conversion to the 3000 kgf 10 mm ball equivalent shall be by applicable conversion table in SAE J417 or by on-site calibration using Standard Brinell Bars.

5.4 A non-destructive casting hardness test location on the casting for monitoring conformance to grade limits shall be established by agreement between purchaser and producer or determined by producer. It should be readily accessible for convenience in performing the test to ensure adequate quantity, consistency, and accuracy of accumulated data for statistical validity in service of general variance control. Targeting of hardness measurement at service function related locations shall not be considered a requirement unless specified in accordance with 5.4.1.

5.4.1 In special cases, casting hardness at particular casting locations considered critical by the designer but difficult to access or requiring casting destruction may be specified by the purchaser with producer agreement. In such cases, hardness grade conformance may be established directly by hardness readings so obtained or indirectly by hardness readings at an accessible location using an agreed method of correlation.

5.5 The foundry shall exercise the necessary controls and inspection techniques to ensure compliance with the specified hardness and t/h ratio minimums. When samples exhibit normal variance patterns, conformance with grade requirements for t/h and casting hardness shall be determined by long term analysis of production samples using Normal Curve statistical methods. For sample sizes less than 30, the lower limit shall be taken as 3 standard deviations below the mean. 
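
As an illustration of the small-sample rule just stated, the following sketch computes the lower limit as the sample mean minus three standard deviations and compares it with a grade minimum. The function names and the hardness readings are hypothetical, and the use of the sample (n − 1) standard deviation is an assumption of this sketch; the text does not say which estimator is intended.

```python
from statistics import mean, stdev


def three_sigma_lower_limit(samples):
    """Mean minus 3 standard deviations (sample estimator, an assumption)."""
    if len(samples) < 2:
        raise ValueError("at least two readings are needed to estimate variance")
    return mean(samples) - 3.0 * stdev(samples)


def conforms(samples, grade_minimum):
    """True when the 3-sigma lower limit is at or above the grade minimum."""
    return three_sigma_lower_limit(samples) >= grade_minimum


if __name__ == "__main__":
    # Hypothetical casting hardness readings in MPa, checked against H18.
    readings = [1900, 1920, 1880, 1910, 1890]
    print(round(three_sigma_lower_limit(readings), 1))
    print(conforms(readings, 1800))
```

The same check applies to test bar t/h ratios by passing ratios and the iron grade minimum instead of hardness values.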
For sample sizes larger than 30, the lower limits for t/h and casting hardness control may be optionally taken as the lower 3 standard deviation limit or the lower 99% population limit of the one-sided normal distribution at 95% confidence calculated by the confidence interval method (see A.1.5).

5.5.1 Test bar samples to confirm test bar t/h ratio conformance shall be random samples. Frequency of sampling may be specified by purchaser or determined by producer. Minimum frequency per grade shall be 1 per 8 h shift. Sample period may be any time interval or accumulation of time intervals in which the targeted mean t/h of producer’s process control specifications is unchanged.

5.5.2 Casting samples to confirm casting hardness conformance shall be random samples. Frequency of sampling may be specified by purchaser or determined by producer. Minimum frequency shall be the least of 5 per 8 h shift or 100% of production. Sample period may be any time interval or accumulation of time intervals during which the targeted mean casting hardness of producer’s process control specifications is unchanged.

5.5.3 Parts successfully established in production and use under previous SAE or other Standards shall be reclassified under this document, without change in mean test bar t/h or mean casting hardness, by appropriate selection of iron grade from Table 1, casting hardness grade from Table 2, and casting hardness range under 5.6.

5.5.4 Casting t/h data obtained by casting hardness tests as described in 5.4 or 5.4.1 and casting tensile tests as described in 5.7, shall be considered informational only and shall not be used for grade conformance assessment.

5.5.5 When casting hardness and/or test bar t/h variance patterns have too much skewness or otherwise do not support Normal Curve methods of analysis, an alternate method shall be established by agreement of purchaser and producer which achieves population limit control equivalent to that described in 5.5.

5.6 Casting hardness range may be specified by the purchaser to provide a non-statistical upper limit for machinability control. The standard range shall be 600 MPa or 60 HB, taken above the required grade minimum, and this shall be the assumed range when not specified. Purchasers shall not specify narrower ranges than this without prior agreement of the producer. Producers shall not exceed this range without prior agreement of the purchaser.

5.7 A minimum value for tensile strength determined by destructive testing at specified locations in castings may be specified as an additional, part number specific, conformance requirement by agreement between purchaser and producer on the applicable lower limit and statistical definition, sampling rate, and any special testing methods required. The agreed minimum shall be obtained with a standard grade as defined in this document. Information for estimating and experimentally determining the tensile minimum which can be expected for a given grade at specific locations in castings for purposes of design and development is given in Section A.4.

5.8 A statistical lower limit for tensile/hardness ratio determined by destructive testing at specified locations in castings may be specified as an additional, part number specific, conformance requirement by agreement between purchaser and producer on the applicable lower limit and statistical definition, sampling rate, and any special testing methods required. The agreed minimum shall be obtained with a standard grade as defined in this document. Information for estimating and experimentally determining the tensile/hardness ratio minimum which can be expected for a given grade at specific locations in castings for purposes of design and development is given in Section A.4.

6. Heat Treatment

6.1 Castings of hardness grades H10 through H17 may be annealed to meet hardness requirements. 
Castings of grades H21 through H24 may be quenched and tempered to meet hardness requirements.

6.2 Appropriate heat treatment for removal of residual stresses, or to improve machinability or wear resistance, may be specified. Heat treated castings must meet hardness requirements of the grade.

7. Microstructure

7.1 Unless otherwise specified, gray iron covered by this document shall be substantially free of primary cementite and/or massive steadite and shall consist of flake graphite in a matrix of ferrite or pearlite or mixtures thereof.

7.2 Unless otherwise specified, the graphite structure shall be primarily type A in accordance with ASTM A 247.

8. Castings for Special Applications with Controlled Composition and Microstructure

8.1 Heavy-Duty Brake Drums and Clutch Plates

8.1.1 These castings are considered as special cases and are covered in Tables 3 and 4.

8.2 Alloy Iron Automotive Camshafts

8.2.1 These castings are considered as special cases and are covered in Tables 3 and 4.

9. General Requirements

9.1 Castings furnished to this document shall be representative of good foundry practice and shall conform to dimensions and tolerances specified on the casting drawing.

9.2 Approval by purchaser of location on the casting and method to be used is required for any casting repair.

9.3 Additional casting requirements such as vendor identification, other casting information, and special testing may be agreed upon by purchaser and supplier. These should appear as product specifications on the casting or part drawing.

10. Notes

10.1 Marginal Indicia—The change bar (l) located in the left margin is for the convenience of the user in locating areas where technical revisions have been made to the previous issue of the report. An (R) symbol to the left of the document title indicates a complete revision of the report.

PREPARED BY THE SAE IRON AND STEEL TECHNICAL COMMITTEE DIVISION 9—AUTOMOTIVE IRON AND STEEL CASTINGS OF THE SAE IRON AND STEEL TECHNICAL EXECUTIVE COMMITTEE

APPENDIX A

NOTE—Information in the Appendix is for reference only and does not constitute requirements.

A.1 Definition and Control of Gray Iron

A.1.1 Gray iron is a cast iron in which the graphite is present in flake form instead of nodules or spheroids as in malleable or ductile iron. Because its graphite has this flake structure, gray iron exhibits much greater sensitivity of mechanical properties to carbon content than malleable or ductile. As in malleable and ductile, the metallic matrix in which the graphite of gray iron resides is normally either eutectoid or hypo-eutectoid silicon steel with a working range of hardness of about 150 to 600 HB (1.5 to 6 GPa). In special cases, the matrix may be martensitic or hyper-eutectoidal with working hardness up to about 800 HB (8 GPa).

A.1.2 Gray iron naturally divides into a family or series of grades having different tensile strength to hardness (t/h) ratios uniformly regulated by eutectic graphite content up to the eutectic composition as shown in Figure A1 with carbon equivalent (CE) as the graphite parameter. Decline in t/h ratio continues as CE increases above the eutectic, but at a much smaller and less predictable rate. Constant t/h lines of this figure are essentially lines of constant graphite effect on mechanical properties. Properties sensitive to both graphite and matrix, such as bulk tensile strength and bulk hardness, vary in constant proportionality to each other and to their matrix counterparts—matrix tensile strength and matrix hardness—along constant t/h lines. Elastic modulus and damping capacity vary mainly only with graphite and are therefore highly constant along the constant t/h lines. Since these lines are also lines of constant eutectic graphite and CE, the most important castability parameters, they are logical grade lines for foundry control as well as for mechanical property control.

FIGURE A1—CHARACTERISTIC t/h RATIOS OF GRAY IRONS

A.1.3 Specification control of gray iron, since it is a composite material, requires joint classification by at least two property parameters of which one should be mainly graphite microstructure related and the other mainly a function of the matrix microstructure. Limited effectiveness of control by a single bulk property is illustrated in Figures A2 and A3. Figure A2 exemplifies grading by tensile strength alone—any given grade so defined is seen to traverse a wide range of possible hardness minimums. Likewise, in Figure A3, hardness is used as a single defining property and a wide range of possibilities exists for the tensile minimum. In both cases, t/h ratio and therefore, elastic modulus, damping capacity and castability are undefined. Figure A4 illustrates improved control obtainable by jointly specifying two property parameters. In this example, t/h ratio and hardness are the joint control parameters. A tensile minimum is now defined and, in general, all properties including castability are effectively controlled.

FIGURE A2—GRADING BY TENSILE

FIGURE A3—GRADING BY HARDNESS

FIGURE A4—GRADING BY t/h RATIO AND HARDNESS

A.1.4 The control parameters used to classify gray iron in this document are test bar t/h ratio and casting hardness, selected because they meet the criteria cited in A.1.3 and are well established, widely used tests. The t/h ratio in this document is dimensionless, reflecting long established practice in the metric countries, where identical units have historically been used for both tensile strength and hardness. Hardness units will be in kg/mm² when reported as HB and are multiplied by g = 9.80665 to convert to MPa and form the dimensionless ratio with tensile strength in MPa units. 
For a number of purposes, it is useful to know the matrix hardness. Examples of its use are process control of the hardness property, simplification of bivariate statistical analysis of hardness and tensile strength, and engineering selection of iron grade for best wear resistance or fatigue life in strain limited loading. The matrix hardness can be estimated with sufficient accuracy for most purposes from the bulk hardness and t/h ratio with the relation:

$$H_{\mathrm{matrix}} = \frac{H_{\mathrm{bulk}}}{1 - k\left(1 - \dfrac{t/h\ \mathrm{ratio}}{0.35}\right)} \tag{Eq. A1}$$

in which k is a graphite structure related constant with a usual range in sand cast gray iron of 0.60 to 0.65.

A.1.5 With continuous production processes used for automotive casting production, conformance to specification control limits can be assessed by analysis of periodic samples using the Confidence Interval method. This method predicts population limits of parent production in standard deviation units, at various confidence levels, as multiples of the sample standard deviation measured from the sample mean. Tabulations of such multipliers versus sample size are widely published (one of many possible references is given in 2.2). The curve of Figure A5 is a plot of such a tabulation showing how the multiplier typically varies with sample size. The curve of Figure A5 is drawn for 99% population limits of a one-sided normal distribution at 95% confidence. For a sample size of about 300 bars, the –2.5 sigma limit of the sample would be the 99% population limit for the parent production.

FIGURE A5—CONFIDENCE INTERVAL TOLERANCING MULTIPLIERS (NUMBER OF SIGMAS)

A.2 Chemical Composition

A.2.1 Typical base composition ranges generally employed for the iron grades are shown in Table A1. 
The base composition does not include alloys such as Cu, Cr, Mo, Ni, or others which may be added for hardness or t/h control, or to meet mandatory composition limits of special irons given in Table 3 of the main body of this document.

A.2.2 Typical base composition ranges may vary for specific grades depending on casting section size or metallurgical factors such as trace element content, or to satisfy mandatory composition requirements of special irons as given in Table 3.

A.2.3 Typical composition ranges including typical alloy content for camshaft iron, grade G11H24d, are shown in Table A2.

TABLE A1—TYPICAL BASE COMPOSITIONS

| Iron Grade | Previous Designation | Carbon | Silicon | Manganese | Sulfur max. | Phosphorus max. | C. E.(1) (Approx.) |
|---|---|---|---|---|---|---|---|
| G7 | G1800h | 3.50 - 3.70 | 2.30 - 2.80 | 0.60 - 0.90 | 0.14 | 0.25 | 4.35 - 4.55 |
| G9 | G2500 | 3.40 - 3.65 | 2.10 - 2.50 | 0.60 - 0.90 | 0.12 | 0.25 | 4.15 - 4.40 |
| G10 | G3000 | 3.35 - 3.60 | 1.90 - 2.30 | 0.60 - 0.90 | 0.12 | 0.20 | 4.05 - 4.30 |
| G11 | G3000 | 3.30 - 3.55 | 1.90 - 2.20 | 0.60 - 0.90 | 0.12 | 0.10 | 4.00 - 4.25 |
| G12 | G3500 | 3.25 - 3.50 | 1.90 - 2.20 | 0.60 - 0.90 | 0.12 | 0.10 | 3.95 - 4.20 |
| G13 | G4000 | 3.15 - 3.40 | 1.80 - 2.10 | 0.70 - 1.00 | 0.12 | 0.08 | 3.80 - 4.05 |

1. C. E. (Carbon Equivalent) = %C + (1/3) %Si.

TABLE A2—TYPICAL CHEMICAL COMPOSITION OF ALLOY GRAY IRON AUTOMOTIVE CAMSHAFTS, GRADE G11H24d (PREVIOUS 4000d)

| Constituent | Wt % |
|---|---|
| Total Carbon | 3.10 to 3.60 |
| Silicon | 1.95 to 2.40 |
| Manganese | 0.60 to 0.90 |
| Phosphorus | 0.10 max |
| Sulfur | 0.15 max |
| Chromium | 0.85 to 1.50 |
| Molybdenum | 0.40 to 0.60 |
| Nickel | 0.20 to 0.45 |
| Copper | Residual |

A.3 Microstructure

A.3.1 The as-cast microstructure of gray iron covered by this document consists of a mixture of flake graphite in a matrix consisting of ferrite, ferrite and pearlite, or pearlite, as described in Table A3. The quantity of flake graphite and size of the flakes vary with iron grade. The amount and fineness of pearlite vary with the hardness grade. The pearlite is usually lamellar but may be partially spheroidal in slowly cooled sections or where heat treatment has been applied.

A.3.2 The size and distribution of graphite flakes in gray iron depend upon chemistry, liquid metal treatment (inoculation), and cooling rate during solidification. The primary, but not sole, chemical determinant is carbon equivalent, defined as C+Si/3.

A.3.2.1 Alloying elements used for pearlite hardness control have small but non-negligible effects on graphite size. Since some elements operate as coarsening and others as refining agents, combinations can be used for a neutral effect.

A.3.2.2 When alloying elements are used to produce a mixed structure of primary carbide and graphite, as in the cams of alloy hardenable gray iron automotive camshafts, eutectic graphite is reduced and significant flake refinement results.

A.3.2.3 The graphite microstructure of gray iron cannot be changed by heat treatment.

A.3.3 Hardness of the ferrite in the gray iron matrix is unaffected by cooling rate but is affected by alloy elements in solid solution, the most noticeable being silicon, which increases ferrite hardness about 35 HB for each 1% of Silicon present. 
Heat treatment is required to decompose all pearlite and produce a fully ferritic structure.

A.3.4 The amount and hardness of pearlite depend jointly on cooling rate and alloy chemistry, which are balanced in the foundry to control pearlite amount and hardness and, consequentially, casting hardness. Both the amount and hardness of pearlite can be altered by heat treatment.

A.3.5 In special cases such as alloy hardenable iron camshafts, alloy is also used to obtain controlled percentages of carbides, detracting from graphite, in cam and valve lifter surfaces where maximum contact stress occurs. The as-cast matrix structure in these cases is pearlite; in the contact surfaces, the matrix is transformed to tempered martensite by surface heat treatment.

A.3.6 Gray iron castings can be through-hardened by liquid quenching or selectively surface-hardened by either flame or induction methods.

TABLE A3—TYPICAL MICROSTRUCTURES OF REFERENCE GRADES

| SAE Casting Grade | Previous Designation | Microstructure, Graphite(1) | Microstructure, Matrix |
|---|---|---|---|
| G9H12 | G1800 | Type VII A & B | Ferritic - Pearlitic |
| G9H17 | G2500 | Type VII A & B | Pearlitic - Ferritic |
| G10H18 | G3000 | Type VII A | Pearlitic |
| G11H18 | G3000 | Type VII A | Pearlitic |
| G11H20 | G3500 | Type VII A | Pearlitic |
| G12H21 | G4000 | Type VII A | Pearlitic |
| G13H19 | G4000 | Type VII A | Pearlitic |
| G7H16 c | G1800 h | Type VII A, B, & C size 1-3 | Lamellar Pearlite |
| G9H17 a | G2500 a | Type VII A size 2-4 | Lamellar Pearlite |
| G10H21 c | G3500 c | Type VII A size 3-5 | Lamellar Pearlite |
| G11H20 b | G3500 b | Type VII A size 3-5 | Lamellar Pearlite |
| G11H24 d | G4000 d | Type VII A & E size 4-7(1) | Pearlitic - Carbidic(2) |

1. See ASTM A 247.
2. In cam nose, as cast. Matrix pearlite in cam may be transformed to tempered martensite by subsequent flame or induction hardening.

A.4 Mechanical Properties of Castings For Design

A.4.1 The calculated tensile strength minima shown in Table A4 for 30 mm diameter test bars assume Normal Curve statistics with foundry industry typical variance levels and are in good agreement with typical production data. Values are also given in the table for a quantity called the Casting Strength Index which is defined as the multiple of the statistical grade minima of test bar t/h ratio and casting hardness. Since the iron grade number equals the t/h ratio times 100 and the hardness grade number equals the hardness (in MPa) divided by 100, the casting strength index also equals the product of iron grade number times hardness grade number and is also in MPa. Casting hardness is specified as a direct measure on the casting and controlled in common foundry practice by ladle alloy additions as needed to offset section size effects. The t/h ratio in castings is subject to section sensitivity but in a given section has a parallel relationship with t/h ratio in the test bar. For these reasons, with uniform statistical definition, the Casting Strength Index defined as the product of the statistical minima of casting hardness and test bar t/h is a valid relative measure of casting strength for design purposes. When section sensitivity of the t/h ratio is quantitatively known, this index can also be used to make a first working estimate of the absolute value of casting tensile strength. Both test bar tensile strength and Casting Strength Index values can be used to determine tensile equivalency with iron graded by other specifications and to optimize SAE grade choice. 
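
The Casting Strength Index arithmetic described in A.4.1 can be checked with a few lines of code. This is a sketch only: the function names are hypothetical, and the MPa-per-ksi factor is the standard conversion, assumed here because the document does not state it.

```python
MPA_PER_KSI = 6.894757  # assumption: standard conversion, not given in the text


def casting_strength_index_mpa(iron_grade_number, hardness_grade_number):
    """Casting Strength Index in MPa.

    (t/h minimum) x (hardness minimum in MPa)
      = (iron number / 100) x (hardness number x 100)
      = iron number x hardness number
    """
    return iron_grade_number * hardness_grade_number


def mpa_to_ksi(value_mpa):
    """Convert MPa to ksi, rounded to one decimal place."""
    return round(value_mpa / MPA_PER_KSI, 1)


if __name__ == "__main__":
    for iron, hardness in [(9, 12), (10, 18), (11, 24)]:
        csi = casting_strength_index_mpa(iron, hardness)
        print(f"G{iron}H{hardness}: {csi} MPa, {mpa_to_ksi(csi)} ksi")
```

For G10H18 this gives 180 MPa (26.1 ksi), the Casting Strength Index listed for that grade in Table A4.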
A.4.1.1 Method of defining Casting Strength Index as minimum casting hardness multiplied by minimum test bar t/h and its relationship to the statistical limits of tensile strength and hardness are shown graphically in Figure A6.

TABLE A4 - TENSILE STRENGTH CHARACTERISTICS AND TENSILE EQUIVALENTS OF SAE REFERENCE GRADES (1)

The last four columns are the theoretical tensile strength minimums of the SAE casting grades.

| SAE Casting Grades | Former SAE Grades (2) | Non-SAE Tensile Grades (3), SI | Non-SAE Tensile Grades (3), Inch-lb | Casting Strength Index (4), MPa | Casting Strength Index (4), ksi | 30 mm Dia. Test Bars (5), MPa | 30 mm Dia. Test Bars (5), ksi |
|---|---|---|---|---|---|---|---|
| G9H12 | G1800 | | | 108 | 15.7 | 124 | 18.0 |
| G9H17 | G2500 | 175 | 25 | 153 | 22.2 | 170 | 24.6 |
| G10H18 | G3000 | 200 | 30 | 180 | 26.1 | 198 | 28.7 |
| G11H18 | G3000 | 225 | 30 | 198 | 28.7 | 217 | 31.5 |
| G11H20 | G3500 | 250 | 35 | 220 | 31.9 | 239 | 34.7 |
| G12H21 | G4000 | 275 | 40 | 252 | 36.5 | 272 | 39.4 |
| G13H19 | G4000 | 275 | 40 | 247 | 35.8 | 268 | 38.9 |
| G7H16 c | G1800 h (6) | | | 112 | 16.2 | 127 | 18.4 |
| G9H17 a | G2500 a | 175 | 25 | 153 | 22.2 | 170 | 24.6 |
| G10H21 c | G3500 c | 225 | 35 | 210 | 30.5 | 228 | 33.1 |
| G11H20 b | G3500 b | 250 | 35 | 220 | 31.9 | 239 | 34.7 |
| G11H24 d | G4000 d | 275 | 40 | 264 | 38.3 | 284 | 41.2 |

(1) Established in production and use and having near equivalents in previous SAE standards and test bar tensile strength equivalents in other standards.
(2) Former SAE grades having near equivalence with t/h and hardness requirements, and theoretical test bar tensile strength minimums of the current SAE casting grades.
(3) Grades of standards based solely on test bar tensile strength such as ASTM A 48 and 48 M, ISO 185, EN 1561, and others, having near equivalence with theoretical test bar tensile strength minimums of the current SAE casting grades.
(4) Multiple of test bar t/h ratio and casting hardness minimum of the current SAE casting grade. Numerically equal to multiple of iron number multiplied by casting hardness grade number.
(5) 99% population lower limit of SAE casting grade at 95% confidence, one-sided normal distribution, 300 bar sample (-2.5 σ). Hardness and t/h minimums at -3 σ, hardness range 500 MPa, t/h range 0.35 for iron grades 7 to 11 and 0.30 for iron grades 12 to 13.
(6) The h suffix was previously used to designate both t/h and carbon requirements of this grade.
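Footnote 5 of Table A4 describes the test bar minimum as a 99% population lower limit at 95% confidence for a 300 bar sample and equates it with -2.5 σ. The source does not say how that factor was derived. As a plausibility check only, the sketch below evaluates a widely used closed-form approximation for the one-sided normal tolerance factor k (the form given in the NIST/SEMATECH e-Handbook); the function name and the choice of this approximation are assumptions made here.

```python
from math import sqrt
from statistics import NormalDist


def one_sided_tolerance_factor(n: int, coverage: float, confidence: float) -> float:
    """Approximate k such that (mean - k * s) bounds `coverage` of a normal
    population from below with the given `confidence`, for a sample of size n.

    Uses the closed-form approximation
        k = (z_p + sqrt(z_p^2 - a*b)) / a
        a = 1 - z_g^2 / (2 (n - 1)),   b = z_p^2 - z_g^2 / n
    where z_p and z_g are standard normal quantiles for coverage and confidence.
    """
    z_p = NormalDist().inv_cdf(coverage)
    z_g = NormalDist().inv_cdf(confidence)
    a = 1.0 - z_g**2 / (2.0 * (n - 1))
    b = z_p**2 - z_g**2 / n
    return (z_p + sqrt(z_p**2 - a * b)) / a


if __name__ == "__main__":
    k = one_sided_tolerance_factor(n=300, coverage=0.99, confidence=0.95)
    print(f"approximate one-sided tolerance factor k = {k:.2f}")
```

With n = 300, 99% coverage, and 95% confidence the factor comes out close to 2.5, which is consistent with the -2.5 σ quoted in the footnote. An exact value would require the noncentral t distribution, which this sketch deliberately avoids to stay within the standard library.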

Introduction

Steel, an alloy primarily composed of iron and carbon, has been the backbone of modern civilization for centuries, playing a pivotal role in infrastructure development, transportation, energy production, and countless other industrial applications. However, not all steel is created equal. This essay delves into the multifaceted nature of premium steel, examining the stringent standards it must meet to be classified as such and the various attributes that distinguish it from its lower-grade counterparts. It is through these rigorous criteria and exceptional qualities that premium steel emerges as the material of choice for projects demanding utmost reliability, durability, and performance.

1. Material Composition and Microstructure

At the core of premium steel's superior characteristics lies its meticulously controlled composition and microstructure. The precise balance of elements, including carbon, manganese, silicon, chromium, nickel, molybdenum, and others, is crucial for achieving desired mechanical properties, corrosion resistance, and weldability. While carbon content is fundamental for hardness and strength, alloying elements serve specific purposes: chromium enhances corrosion resistance, nickel improves toughness and ductility, and molybdenum increases strength at high temperatures.

Moreover, the microstructure of premium steel, which refers to the arrangement of its constituent phases (e.g., ferrite, pearlite, martensite, and austenite), is carefully tailored through controlled heating and cooling processes, such as annealing, quenching, and tempering. These treatments influence the grain size, phase distribution, and dislocation density within the steel, ultimately dictating its mechanical behavior, toughness, and fatigue resistance. A well-designed microstructure ensures that premium steel exhibits an optimal combination of strength, ductility, and toughness, enabling it to withstand diverse service conditions without failure.

2. Mechanical Properties and Performance

Premium steel is characterized by exceptional mechanical properties that surpass those of standard grades. Key indicators include:

a) Yield Strength: The minimum stress required to initiate permanent deformation in the material. Premium steels often exhibit yield strengths well above 500 MPa, providing robust structural integrity and minimizing deformation under load.

b) Tensile Strength: The maximum stress the material can withstand before fracturing. High tensile strengths (exceeding 800 MPa in some cases) enable premium steel to endure heavy loads and dynamic stresses without failure.

c) Ductility and Toughness: The ability of the material to deform plastically without fracturing and to absorb energy during impact or deformation. Enhanced ductility and toughness in premium steels reduce the risk of brittle fractures and ensure better performance under cyclic loading and in low-temperature environments.

d) Fatigue Resistance: The capacity to withstand repeated or cyclic loads without cracking or fracturing. Premium steels, with their optimized microstructures and low residual stresses, demonstrate excellent fatigue resistance, ensuring long-term reliability in applications subjected to fluctuating stresses.

3. Corrosion and Wear Resistance

By using corrosion-resistant alloys such as stainless steel or by applying protective coatings, premium steels offer enhanced resistance to oxidation, pitting, crevice corrosion, and stress corrosion cracking.
This attribute is particularly critical in harsh environments, such as marine, chemical processing, or food processing industries, where prolonged exposure to aggressive chemicals or moisture can rapidly degrade lower-grade materials.

Additionally, premium steels may incorporate hardening elements or surface treatments to increase their wear resistance, making them suitable for applications involving friction, abrasion, or erosion, such as in mining equipment, cutting tools, or heavy machinery components.

4. Weldability and Fabrication

Premium steels are designed with weldability in mind, ensuring that they can be joined using various welding techniques while maintaining their inherent mechanical properties and corrosion resistance. This is achieved through careful control of alloying elements, carbon equivalent, and microstructure. Good weldability reduces the risk of defects, such as cracks, porosity, or lack of fusion, during fabrication and contributes to the overall structural integrity of welded assemblies.

5. Certifications, Standards, and Testing

For a steel product to be deemed 'premium,' it must adhere to stringent international or industry-specific standards, such as those set by ASTM, AISI, EN, DIN, JIS, or API. These standards encompass material composition, mechanical properties, fabrication processes, non-destructive testing (NDT), and quality control measures. Compliance with these standards provides assurance to end-users that the steel will perform as intended in its designated application.

Furthermore, premium steel manufacturers typically subject their products to rigorous testing, including chemical analysis, mechanical property tests (tensile, impact, hardness), corrosion tests (salt spray, cyclic polarization), non-destructive examinations (ultrasonic testing, magnetic particle inspection), and sometimes even full-scale prototype testing.
Such comprehensive evaluations verify that the steel meets or exceeds the specified requirements and performs reliably under real-world conditions.

Conclusion

Premium steel represents the epitome of metallurgical expertise, combining meticulously controlled composition, optimized microstructure, exceptional mechanical properties, enhanced corrosion and wear resistance, and excellent weldability. By adhering to stringent international and industry-specific standards and undergoing rigorous testing, premium steel guarantees unparalleled performance, durability, and reliability in even the most demanding applications. As industries continue to push the boundaries of innovation and efficiency, premium steel remains a cornerstone material, offering designers and engineers a trusted solution for realizing their visions while ensuring safety, longevity, and sustainability.